It’s hard to know where to begin unpacking that vision. If jobs as we know them disappear, where will value be created? Who will capture it? And what exactly will people do with their time and lives? Who will buy products after all jobs are automated? With their universal basic income (UBI)? As you suggest, UBI is politically toxic in much of the current U.S. landscape.

Without structural redistribution mechanisms, value will be concentrated in the hands of a few, not shared as part of an AI-powered societal abundance.

Consider the irony: the financial services firm you are advising may well employ individuals who earn below a living wage—people who must rely on taxpayer-funded assistance to survive.

Here is a Claude-researched document on the details:

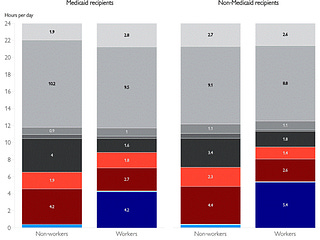

Financial services workers rely heavily on taxpayer-funded assistance despite the industry’s record profits.

The founders offered an analogy to the decline of agricultural work, when people couldn’t imagine life beyond the farm. But this historical comparison glosses over key differences. That transformation unfolded over generations, not years, and was accompanied by new industries and sustained public investment. Today, the speed and scale of AI-driven disruption are unprecedented.

They downplayed the immediacy of job displacement, suggesting it’s a distant concern. But consider what’s happening now: millions of driving jobs are already under threat from the likes of Waymo and Uber. Entry-level roles across industries are quietly disappearing through automation. Add to that a volatile political climate and a growing economic divide.

This isn’t a distant hypothetical. It’s already unfolding.

I might even say we are already screwed, even if we do stay in the agentic loop.