SkillRL and RLMs

1. Recursive Evolution vs. Recursive Abstraction

Interesting paper. github.com/aiming-lab/S…

While both use recursion, the target and timing of that recursion differ fundamentally:

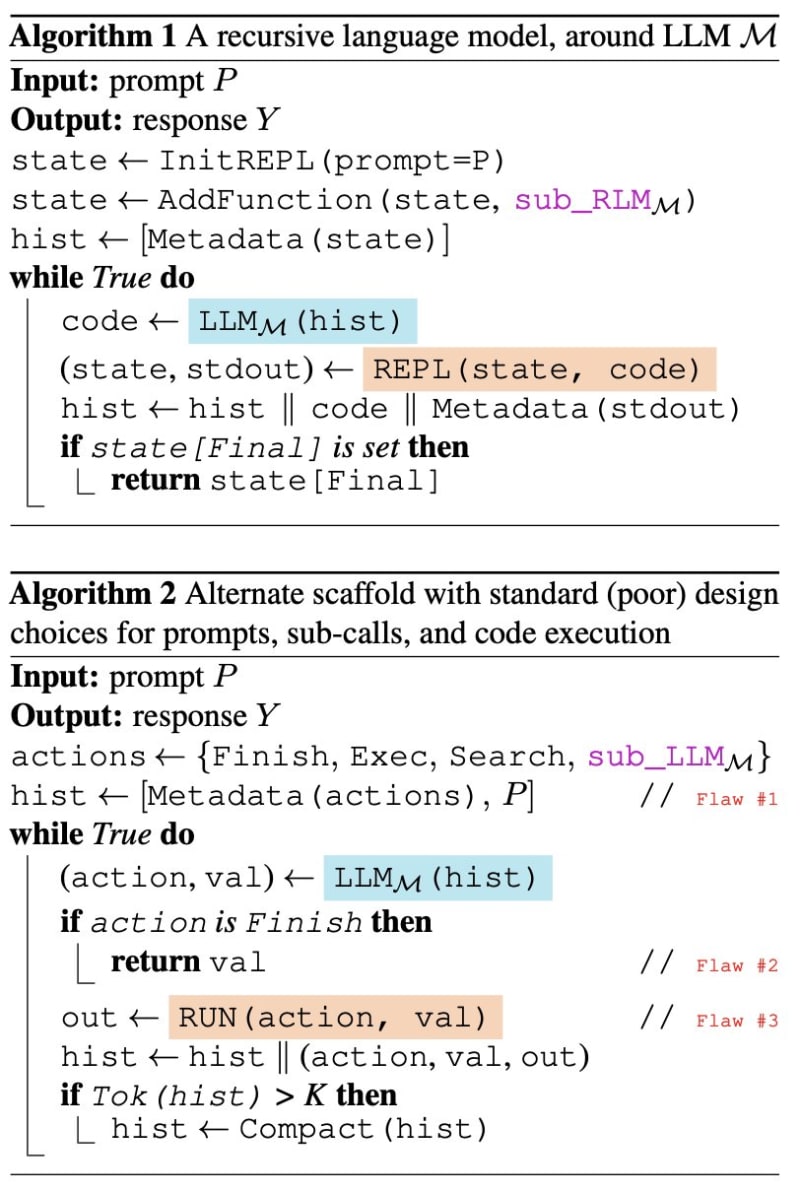

RLMs (Recursive Abstraction): These recurse over information chunks at inference time. By treating a massive prompt as an external variable in a REPL, the model uses procedural abstraction (Python-like loops) to "reason its way" through 10M+ tokens without context rot. Prime Intellect doing interesting things: primeintellect.ai/blog/…
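To make the "prompt as an external variable" idea concrete, here is a minimal sketch, not the actual RLM implementation. It assumes the long input is held as a plain Python list of lines and that the model is only ever called on small slices. The names `toy_llm` and `rlm_answer`, the chunk size, and the depth limit are all hypothetical, and `toy_llm` is a keyword filter standing in for a real model call.

```python
from typing import Callable, List

def toy_llm(query: str, context: str) -> str:
    """Stand-in for a real model call (assumption): keep lines that mention the query."""
    return "\n".join(line for line in context.splitlines() if query in line)

def rlm_answer(
    lines: List[str],
    query: str,
    llm: Callable[[str, str], str],
    chunk_lines: int = 50,
    depth: int = 0,
    max_depth: int = 5,
) -> str:
    """Recurse over chunks so no single call sees more than `chunk_lines` lines."""
    # Base case: the slice is small enough (or we hit the depth cap),
    # so hand at most `chunk_lines` lines to the model directly.
    if len(lines) <= chunk_lines or depth >= max_depth:
        return llm(query, "\n".join(lines[:chunk_lines]))

    # Recursive case: the full input stays in the `lines` variable;
    # each sub-call only receives one slice of it.
    partials = [
        rlm_answer(lines[start:start + chunk_lines], query, llm,
                   chunk_lines, depth + 1, max_depth)
        for start in range(0, len(lines), chunk_lines)
    ]

    # Merge the partial answers and recurse again on the (much smaller) result.
    merged = [line for partial in partials for line in partial.splitlines()]
    return rlm_answer(merged, query, llm, chunk_lines, depth + 1, max_depth)
```

For example, with a 10,000-line haystack containing a single line that mentions `needle`, `rlm_answer(haystack, "needle", toy_llm)` returns that line, and every individual `toy_llm` call sees at most 50 lines.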

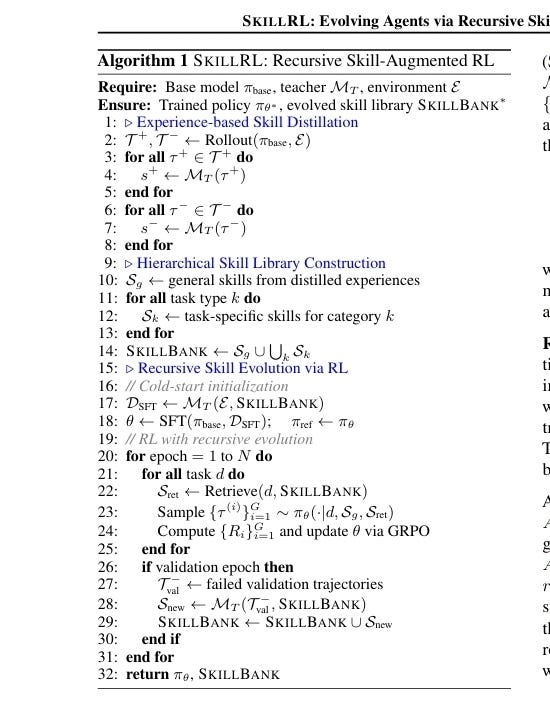

SkillRL (Recursive Evolution): This recurses over skills during the learning phase. It uses a "Recursive Skill Evolution" mechanism that analyzes failures after validation epochs to iteratively refine the agent's policy and SKILLBANK.
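The evolve-after-validation loop can be sketched roughly as follows. This is an illustration under stated assumptions, not SkillRL's code: the skill bank is modeled as a plain dict from task type to a distilled principle, the policy update (the RL part) is omitted entirely, and `evolve_skill_bank`, `toy_validate`, and `toy_distill` are hypothetical names.

```python
from typing import Callable, Dict, List, Tuple

Failure = Tuple[str, str]  # (task type, failed trajectory text)

def evolve_skill_bank(
    bank: Dict[str, str],
    validate: Callable[[Dict[str, str]], List[Failure]],
    distill: Callable[[str, str], str],
    max_rounds: int = 3,
) -> int:
    """Refine `bank` in place from validation failures; return rounds that changed it."""
    rounds = 0
    for _ in range(max_rounds):
        # Run a validation pass and collect the tasks that still fail.
        failures = validate(bank)
        if not failures:
            break
        # Turn each failed trajectory into a compact skill entry.
        for task_type, trajectory in failures:
            bank[task_type] = distill(task_type, trajectory)
        rounds += 1
    return rounds

def toy_validate(bank: Dict[str, str]) -> List[Failure]:
    """Toy validator (assumption): a task fails if the bank has no skill for it."""
    tasks = ["search", "navigate", "purchase"]
    return [(t, f"long noisy log for {t}") for t in tasks if t not in bank]

def toy_distill(task_type: str, trajectory: str) -> str:
    """Toy distiller (assumption): a real system would summarize the trajectory."""
    return f"check preconditions before attempting {task_type}"
```

Starting from a bank that only knows `search`, one round of this loop adds entries for `navigate` and `purchase`, and the next validation pass finds nothing left to fix.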

2. Efficiency Gains and the Abstraction Principle

Both techniques follow the same principle: efficiency scales with abstraction.

RLM Efficiency: RLMs reduce costs by ensuring the "brain" (root LLM) only sees metadata and small chunks, keeping the massive input external. The reported result is 2× better accuracy on million-token tasks at comparable or lower cost.

SkillRL Efficiency: SkillRL achieves a 10–20× token footprint reduction. It distills verbose, noisy trajectories into high-density strategic principles, which allows the agent to fit complex "how-to" knowledge into limited context windows.
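A quick back-of-the-envelope check shows what a 10–20× reduction means for a fixed context window. The 8,000-token budget and 2,000-token raw trajectory below are made-up illustration values, not figures from the paper, and `items_that_fit` is a hypothetical helper.

```python
def items_that_fit(budget_tokens: int, tokens_per_item: int) -> int:
    """How many whole items of a given size fit in a context budget."""
    return budget_tokens // tokens_per_item

BUDGET_TOKENS = 8_000          # assumed context budget for experience
RAW_TRAJECTORY_TOKENS = 2_000  # assumed size of one raw trajectory

raw_count = items_that_fit(BUDGET_TOKENS, RAW_TRAJECTORY_TOKENS)
print(f"raw trajectories that fit: {raw_count}")

for reduction in (10, 20):
    # A distilled skill is the raw trajectory shrunk by the reduction factor.
    skill_tokens = RAW_TRAJECTORY_TOKENS // reduction
    count = items_that_fit(BUDGET_TOKENS, skill_tokens)
    print(f"{reduction}x reduction: {count} distilled skills fit")
```

Under these assumed numbers, the same budget that holds 4 raw trajectories holds 40 distilled skills at 10× and 80 at 20×.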

3. Strategic Implication: The Dual-Stack Design

These technologies map onto two fundamental problems of agentic AI:

The Retrieval Decision Problem (RLM): RLMs solve this by moving from "probabilistic recall" (the model has to decide to search) to "deterministic procedure" (the data is already a variable in scope).

The Experience Transfer Problem (SkillRL): SkillRL solves this by distilling raw episodes into a hierarchical SKILLBANK, enabling the agent to transfer knowledge from past successes and failures to new tasks.

4. Dual-Stack Components

The mapping of these techniques into two specialized "Stacks" aligns with the Cognitive Blueprint:

The Memory Stack: Consists of Engram (Layer 4 static weights), Passive Context (Layer 2 agents.md), and RLM (Layer 6 inference scaffold). This stack handles information structure.

The Skill Stack: Consists of SkillBank and Recursive Evolution. This stack handles behavioral adaptation, primarily impacting Layers 4 (Procedural) and 7 (Cognitive Management).

🌐 Big Picture: Reasoning + Learning

SkillRL supplies a missing piece for agent evolution. While RLMs provide the navigation logic for deep exploration of massive data (long-horizon reasoning), SkillRL provides the muscle memory and adaptation (long-horizon learning). Together, they let an agent arrive at a task with a refined library of expertise (SkillRL) and the procedural tools to execute it reliably (RLM).