What happened when I gave 10 Claude Opus AI agents a tricky puzzle?

The riddle (which I also posted recently) went like this:

I generated the following two numbers with AI-written code. Can you work out what number comes next in the sequence?

770487, 216739

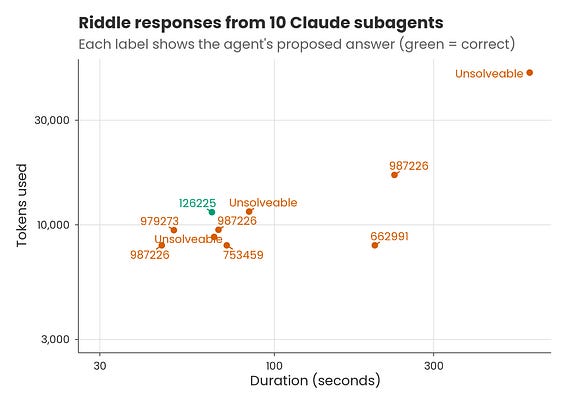

Of the 10 agents:

• 3 gave up and concluded it was unsolvable

• 3 gave 987226 as the answer (i.e. they added the two numbers together)

• 3 gave other numbers

• 1 gave the correct answer: 126225

Why 126225?

The trick is to realise that if you ask AI to write code that generates random six-digit numbers, it will often use Python’s random module with the seed set to 42. That produces the two numbers above, and drawing a third number from the same seeded generator gives the answer: 126225.
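As a minimal sketch of the kind of code an AI might write here: the post only says "random library with seed 42", so the exact call (`randint(100000, 999999)`) and the helper name `six_digit_draws` are assumptions for illustration. If that assumption matches the original code, the first two draws should be the numbers in the riddle and the third should be the answer.

```python
import random


def six_digit_draws(seed, count):
    """Return `count` six-digit integers from a generator seeded with `seed`.

    Assumption: the original code used randint(100000, 999999);
    the post does not show the exact call.
    """
    rng = random.Random(seed)  # private generator, so global state is untouched
    return [rng.randint(100000, 999999) for _ in range(count)]


if __name__ == "__main__":
    # With seed 42, the post reports 770487 and 216739, followed by 126225.
    print(six_digit_draws(42, 3))
```

Because the seed fixes the generator's state, the same seed gives the same sequence on every run, which is what makes the "next number" predictable at all.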

Overall, the agents’ performance was impressive – and yet also rather disappointing.

Impressive because one of them did get the answer, and when the outputs were given back to Claude, it identified that 126225 was the ‘most compelling’ solution.

But disappointing too, because there was a huge amount of variability in outputs – what if I’d only run 5 agents and they’d missed the solution entirely? How many would I need to run to be confident I’d always get the correct conclusion?
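One rough way to put numbers on that question is a back-of-envelope sketch under two loud assumptions: each agent finds the answer independently, and the per-agent success rate is about 1 in 10 (a single run of 10 agents is a very noisy estimate). Under those assumptions, the chance that at least one of n agents succeeds is 1 − (1 − p)^n. The function names below are hypothetical.

```python
import math


def p_at_least_one(p, n):
    """Probability that at least one of n independent agents succeeds."""
    return 1 - (1 - p) ** n


def agents_needed(p, target):
    """Smallest n such that p_at_least_one(p, n) >= target."""
    return math.ceil(math.log(1 - target) / math.log(1 - p))


if __name__ == "__main__":
    p = 0.1  # assumed per-agent success rate, from 1 correct answer in 10
    print(round(p_at_least_one(p, 5), 3))  # 0.41: five agents miss about 59% of the time
    print(agents_needed(p, 0.95))          # 29 agents for a 95% chance of at least one hit
```

This only covers the chance that the right answer appears somewhere in the outputs. It says nothing about whether the step that compares the outputs then picks it, and no finite number of agents gives a guarantee of "always".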

It was also striking that more tokens didn’t mean better answers. I could get the same wrong answer quickly and cheaply, or slowly and more expensively.

In hindsight, such results can feel obvious, so it’s worth considering what success rate and token/time patterns you would have predicted before looking at the figure below.

Personally, I wasn’t that surprised by the variability in agent efficiency, but I was surprised 1 in 10 got it right. Based on my years of experience writing exam questions, my prior belief was somewhat bimodal: I expected that either none of them would work it out – or most of them.