
Discussions of AI and creativity tend to be confused because they conflate two very different kinds of creativity.

Creativity in art is partly about emotional expression; artistic expression can be thought of as an inherently social process; the value of art is largely dictated by scarcity; and judging the result is a matter of taste. For all these reasons, we are unlikely to consider AI creative, at least not in the same way as humans.

Creativity in science stands in sharp contrast. Its value is instrumental: it produces scientific innovation. For the most part, the end results can be objectively evaluated. In such domains, creativity has long been understood as a process of constrained search over conceptual spaces. There is no reason to think AI will always be worse than humans at this. At present, AI is better at some aspects of it (the ease of generating quasi-random ideas) and worse at others (autonomously spotting abstract connections between seemingly unrelated concepts). There is a lot of technical low-hanging fruit and plenty of room for improvement.

None of this is to say that AI can, will, or should replace scientists. I've argued against that at length, with Sayash Kapoor (normaltech.ai/p/could-a…). But the reason is not that human scientists possess some magic spark of creativity that AI will forever lack.

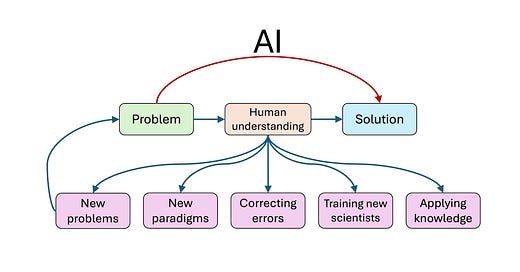

This can easily be misinterpreted, so let me emphasize: human judgment and taste will remain essential in problem selection, framing, interpretation, and the sociological competition between incommensurable Kuhnian paradigms. But when it comes to adopting AI for the core "puzzle-solving" activity of "normal science," the current creativity barrier might not always remain, and if it goes away, the day-to-day activity of science might look quite different. The cognitive offloading of creativity will likely have some big downsides that we haven't yet appreciated.