📢📢 A double launch today! We’re releasing a paper analyzing the rapidly growing trend of “open-world evaluations” for measuring frontier AI capabilities. We’re also launching a new project, CRUX (Collaborative Research for Updating AI eXpectations), an effort to regularly conduct such evaluations ourselves. cruxevals.com

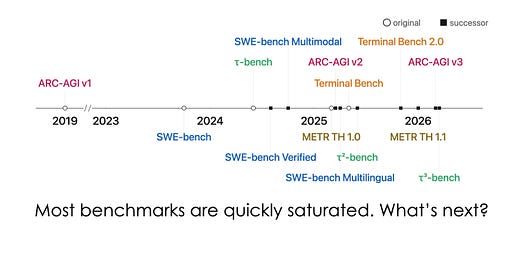

I think open-world evals are the most important development in AI evaluation over the past year. Our paper explains why we need them, what they can and can’t tell us, and how to do them well.

In CRUX #1, we tasked an agent with building and publishing a simple iOS app to the Apple App Store. The paper has many “lessons from the trenches” from running this experiment. We hope you find it interesting! CRUX #2 will be about AI R&D automation.