
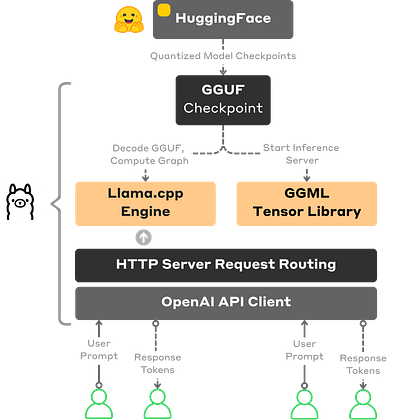

One thing I keep seeing in local LLM discussions: people collapse GGUF, GGML, and llama.cpp into one mental bucket.

That makes the stack seem more complex than it really is.

In Ollama, LM Studio, and many other local AI inference engines:

GGUF is the model format.

GGML is the tensor layer doing the actual computation.

llama.cpp is the runtime that parses the model, builds the graph, loads weights, manages the KV cache, and runs sampling.

Just a simple 3-layer split that clears all the confusion.
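
To make the "GGUF is just a file format" point concrete, here is a minimal sketch that reads only the fixed-size header at the start of a GGUF file, with no GGML or llama.cpp involved. It assumes GGUF version 2 or later (little-endian, 64-bit counts); the function name `read_gguf_header` and the sample values are made up for illustration.

```python
import struct

GGUF_MAGIC = b"GGUF"  # the first four bytes of every GGUF file


def read_gguf_header(data: bytes) -> dict:
    """Parse the fixed-size GGUF header.

    Assumption: GGUF v2+ layout, little-endian:
    magic (4 bytes), version (uint32), tensor count (uint64),
    metadata key/value count (uint64).
    """
    if data[:4] != GGUF_MAGIC:
        raise ValueError("not a GGUF file")
    version, tensor_count, kv_count = struct.unpack_from("<IQQ", data, 4)
    return {
        "version": version,
        "tensor_count": tensor_count,
        "metadata_kv_count": kv_count,
    }


# Synthetic header bytes so the example runs without a model file.
# The numbers are illustrative, not taken from a real model.
sample = GGUF_MAGIC + struct.pack("<IQQ", 3, 291, 24)
header = read_gguf_header(sample)
print(header)
```

For a real model, passing the first 24 bytes of the file (`open(path, "rb").read(24)`) should work the same way. Everything after the header (metadata, tensor info, weights) is what the runtime parses before handing tensors to GGML.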

If you’re looking for a full deep-dive on Local AI Inference with Ollama:

Apr 16 at 8:30 AM
