
𝗕𝗮𝘁𝗰𝗵 𝘃𝘀. 𝗦𝘁𝗿𝗲𝗮𝗺 𝘃𝘀. 𝗟𝗮𝗺𝗯𝗱𝗮: 𝗧𝗵𝗲 𝗗𝗮𝘁𝗮 𝗔𝗿𝗰𝗵𝗶𝘁𝗲𝗰𝘁𝘂𝗿𝗲 𝗖𝗵𝗲𝗮𝘁 𝗦𝗵𝗲𝗲𝘁

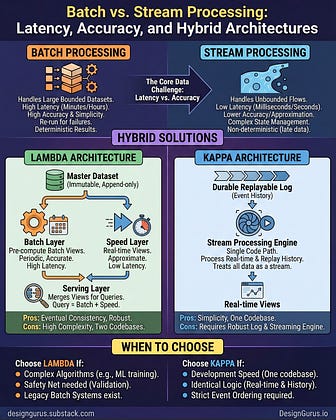

In system design, we are always fighting a war between Latency (Speed) and Accuracy (Completeness).

You want data instantly, but you also want it to be perfect. Usually, you can't have both.

Here is how the three most common patterns handle this trade-off:

𝟭. 𝗕𝗮𝘁𝗰𝗵 𝗣𝗿𝗼𝗰𝗲𝘀𝘀𝗶𝗻𝗴: The "Slow & Steady" approach.

How it works: Collects data over time and processes it in big chunks (e.g., every 2 hours).

Pros: High accuracy, simple logic, easy to fix errors (just re-run the batch).

Cons: High latency. You are always looking at data as of the last completed run.
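A minimal Python sketch of the batch idea, assuming a hypothetical `Event` record with an ID, a user, and an event-time timestamp. The job recomputes per-user counts for each 2-hour bucket from the full stored dataset. Because it always starts from scratch on complete input, it can remove duplicates exactly, and fixing a bug really is just re-running `run_batch`.

```python
from collections import Counter
from dataclasses import dataclass

BATCH_INTERVAL = 2 * 60 * 60  # 2 hours in seconds, matching the example interval


@dataclass(frozen=True)
class Event:  # hypothetical event record
    event_id: str
    user: str
    ts: int  # event time, seconds since epoch


def run_batch(events: list[Event]) -> dict[tuple[str, int], int]:
    """Recompute per-user counts for every 2-hour bucket from all stored events."""
    # The batch sees the complete dataset, so duplicates can be removed exactly.
    unique = {e.event_id: e for e in events}.values()
    counts: Counter = Counter()
    for e in unique:
        bucket_start = e.ts - e.ts % BATCH_INTERVAL
        counts[(e.user, bucket_start)] += 1
    return dict(counts)


# Deterministic: re-running on the same input always yields the same output.
events = [
    Event("a", "alice", 0),
    Event("b", "alice", 100),
    Event("b", "alice", 100),  # duplicate delivery
    Event("c", "bob", 7300),
]
print(run_batch(events))  # → {('alice', 0): 2, ('bob', 7200): 1}
```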

𝟮. 𝗦𝘁𝗿𝗲𝗮𝗺 𝗣𝗿𝗼𝗰𝗲𝘀𝘀𝗶𝗻𝗴: The "Need for Speed" approach.

How it works: Processes every event the instant it arrives.

Pros: Low latency, which enables real-time dashboards.

Cons: Complexity. Handling late-arriving data and guaranteeing "exactly-once" processing are hard.
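To make the late-data and duplicate problems concrete, here is a simplified single-process sketch, not how production engines such as Flink implement it. It assumes a hypothetical 60-second tumbling window, a hypothetical 30-second allowed lateness, and deduplication by event ID as a stand-in for exactly-once effects. Real systems also need durable, checkpointed state so that a crash does not lose or double-apply updates.

```python
from collections import defaultdict
from dataclasses import dataclass

WINDOW = 60            # hypothetical tumbling-window size, seconds
ALLOWED_LATENESS = 30  # hypothetical grace period for out-of-order events, seconds


@dataclass(frozen=True)
class Event:  # hypothetical event record
    event_id: str
    user: str
    ts: int  # event time, seconds


class StreamCounter:
    """Updates per-user, per-window counts the moment each event arrives."""

    def __init__(self) -> None:
        self.counts: dict[tuple[str, int], int] = defaultdict(int)
        self.seen_ids: set[str] = set()  # unbounded here; real systems expire it
        self.watermark = 0  # "no more events older than this are expected"
        self.dropped_late = 0

    def process(self, e: Event) -> None:
        # Redelivered event (e.g. after a retry): skip so it is counted once.
        if e.event_id in self.seen_ids:
            return
        window_start = e.ts - e.ts % WINDOW
        # The event's window closed before it arrived: too late to count.
        if window_start + WINDOW <= self.watermark:
            self.dropped_late += 1
            return
        self.seen_ids.add(e.event_id)
        self.counts[(e.user, window_start)] += 1
        # Advance the watermark, tolerating ALLOWED_LATENESS of disorder.
        self.watermark = max(self.watermark, e.ts - ALLOWED_LATENESS)


sc = StreamCounter()
for ev in [
    Event("a", "alice", 10),
    Event("a", "alice", 10),   # duplicate: ignored
    Event("b", "alice", 130),  # moves the watermark to 100
    Event("c", "bob", 50),     # its window [0, 60) is already closed: dropped
    Event("d", "bob", 95),     # window [60, 120) still open: counted
]:
    sc.process(ev)
print(dict(sc.counts), sc.dropped_late)
# → {('alice', 0): 1, ('alice', 120): 1, ('bob', 60): 1} 1
```

The lateness setting is the latency/accuracy dial in miniature: a longer grace period keeps windows open for stragglers but delays final results.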

𝟯. 𝗧𝗵𝗲 𝗟𝗮𝗺𝗯𝗱𝗮 𝗔𝗿𝗰𝗵𝗶𝘁𝗲𝗰𝘁𝘂𝗿𝗲: The "Best of Both Worlds" approach.

How it works: Runs BOTH Batch and Stream in parallel.

Batch Layer: Periodically recomputes results over the complete history, accurately but slowly.

Speed Layer: Processes only the data that has arrived since the last batch run, quickly but approximately.

Serving Layer: Merges the two (Total = Batch + Speed).

The Trade-off: You have to implement the same logic twice, in two codebases that must be kept in sync.
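A toy sketch of the three layers, assuming events are hypothetical `(event_id, user, ts)` tuples and that the batch layer has processed everything before a `cutoff` timestamp while the speed layer covers everything since. Note that the counting logic appears twice, once per layer: the "two codebases" cost in miniature.

```python
from collections import Counter

# Hypothetical events: (event_id, user, ts). The batch layer covers ts < cutoff;
# the speed layer covers ts >= cutoff.


def batch_layer(all_events, cutoff):
    """Batch layer: exact, deduplicated counts over the full history before cutoff."""
    unique = {eid: (user, ts) for eid, user, ts in all_events}
    return Counter(user for user, ts in unique.values() if ts < cutoff)


def speed_layer(recent_events, cutoff):
    """Speed layer: fast, approximate counts since cutoff (no deduplication)."""
    return Counter(user for _eid, user, ts in recent_events if ts >= cutoff)


def serving_layer(user, batch_view, speed_view):
    """Serving layer: Total = Batch + Speed (Counter returns 0 for missing keys)."""
    return batch_view[user] + speed_view[user]


history = [("a", "alice", 10), ("a", "alice", 10), ("b", "bob", 20)]
recent = [("c", "alice", 110), ("c", "alice", 110)]  # redelivered event

batch = batch_layer(history, cutoff=100)
speed = speed_layer(recent, cutoff=100)
print(serving_layer("alice", batch, speed))  # → 3 (the duplicate is counted)

# The next batch run absorbs the recent data and moves the cutoff forward.
batch = batch_layer(history + recent, cutoff=200)
speed = speed_layer([], cutoff=200)
print(serving_layer("alice", batch, speed))  # → 2
```

Once the batch view catches up, the approximate speed-layer results for that period are discarded, which is how Lambda eventually corrects its own fast answers.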

If you want to simplify Lambda, look into the 𝗞𝗮𝗽𝗽𝗮 𝗔𝗿𝗰𝗵𝗶𝘁𝗲𝗰𝘁𝘂𝗿𝗲, which treats everything (even history) as a stream.
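A minimal sketch of the Kappa idea, assuming an append-only, replayable log (modeled here as a plain Python list) and hypothetical `(event_id, user, ts)` events. One `process` function handles both live events and history, and reprocessing means replaying the log from the beginning into a fresh view.

```python
from collections import Counter

# Hypothetical append-only log of (event_id, user, ts) events, modeled as a
# list. In practice this would be a durable, replayable log with long retention.


def process(view, seen, event):
    """The single processing function, used for live data and for replays."""
    eid, user, _ts = event
    if eid in seen:  # skip redelivered events
        return
    seen.add(eid)
    view[user] += 1


def replay(log, from_offset=0):
    """Rebuild a view from scratch by re-reading the log with the same code."""
    view, seen = Counter(), set()
    for event in log[from_offset:]:
        process(view, seen, event)
    return view


log = [("a", "alice", 10), ("b", "bob", 20), ("a", "alice", 10)]

# Live path: each event is processed as it arrives.
live_view, live_seen = Counter(), set()
for event in log:
    process(live_view, live_seen, event)

# Reprocessing path: same code, replayed from offset 0 into a fresh view.
rebuilt = replay(log)
print(live_view == rebuilt)  # → True
```

To change the logic under this scheme, one would deploy the new `process`, rebuild a new view with `replay`, and switch readers over once it has caught up, with no second codebase to maintain.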

Read full post: designgurus.substack.co…

#SystemDesign #DataEngineering #BigData #Architecture #TechTips