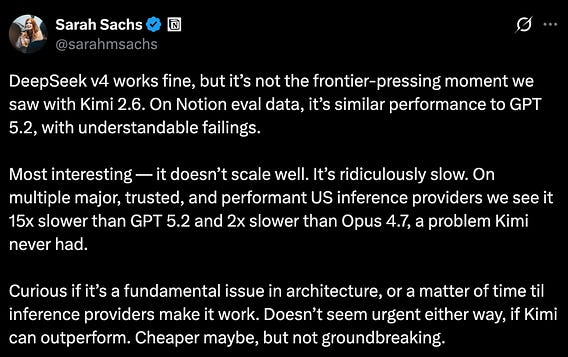

DeepSeek-v4 is a lot slower than other models, which has perplexed many users.

I think the main issue is the architecture. DeepSeek uses a series of attention optimization techniques that considerably reduce the memory costs of running long-context tasks.

However, the hardware that the model runs on is not optimized for those techniques, which slows down both the prefill and decode phases.
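To put rough numbers on the memory side of this, here is a minimal sketch comparing a standard per-head key/value cache with a generic compressed cache that stores one small latent vector per token. Every size in it (layer count, head count, head dimension, latent width, context length) is a made-up assumption for illustration, not DeepSeek-v4's actual configuration, and `kv_cache_bytes` is a hypothetical helper.

```python
def kv_cache_bytes(seq_len, n_layers, per_token_elems, bytes_per_elem=2):
    """Bytes of cache needed to hold `seq_len` tokens across all layers."""
    return seq_len * n_layers * per_token_elems * bytes_per_elem


# All sizes below are illustrative assumptions, not a real model configuration.
N_LAYERS = 60
N_HEADS = 128
HEAD_DIM = 128
LATENT_DIM = 576   # hypothetical width of the compressed per-token cache entry
SEQ_LEN = 128_000  # long-context request, 16-bit cache entries by default

# Standard multi-head attention caches one key and one value vector per head.
full = kv_cache_bytes(SEQ_LEN, N_LAYERS, 2 * N_HEADS * HEAD_DIM)

# A compressed-cache scheme stores a single small latent vector per token instead.
compressed = kv_cache_bytes(SEQ_LEN, N_LAYERS, LATENT_DIM)

print(f"full KV cache:       {full / 2**30:.1f} GiB")
print(f"compressed KV cache: {compressed / 2**30:.1f} GiB")
print(f"reduction:           {full / compressed:.1f}x")
```

This only covers memory. It says nothing about speed, which depends on whether the hardware has fast kernels for the compressed format.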

May 5 at 1:21 PM
