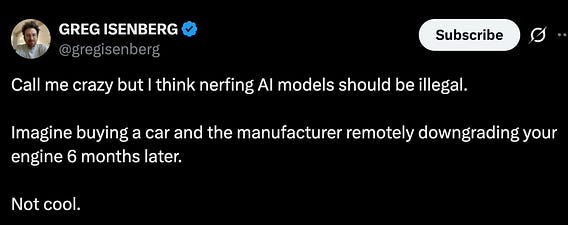

The "nerfing" problem is one that the AI labs created for themselves:

- Their transparency is at an all-time low: they share virtually no information about model architecture, training data, harness, system prompt, etc.

- Users are left to speculate about what is happening behind the scenes.

- Model and harness updates are not clearly communicated to users.

As a result, when something that used to work stops working, users quickly assume the AI lab has nerfed the model to cope with compute capacity constraints.

May 7 at 5:46 PM
