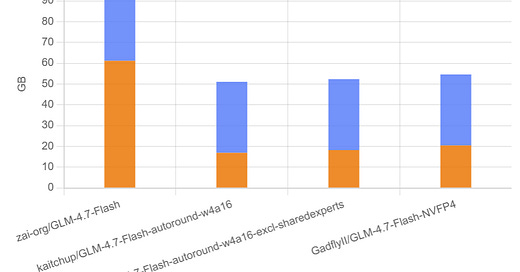

I evaluated 4-bit variants of GLM-4.7 Flash.

All of them look safe to use. Accuracy drops only slightly (a few percent) on long-context tasks, mostly on those that require many reasoning tokens.

At about 17 GB, the model runs at full context length on a single 24 GB GPU.

More results, including runs with reasoning disabled, are here:

Feb 10 at 1:41 PM