
Google Interview Question:

What’s the difference between causal attention and bidirectional attention?

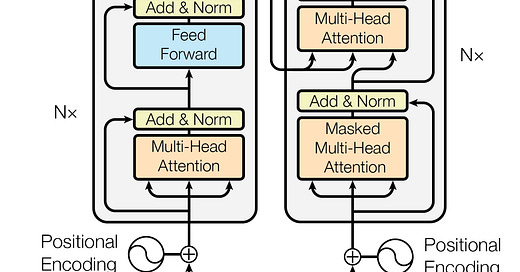

Causal attention and bidirectional attention differ in what each token is allowed to “look at” when forming its representation. The choice affects what the model can learn and what tasks it is suitable for.

In causal attention, a token can attend only to itself and to tokens at earlier positions. It cannot see the future. This creates a strict left-to-right flow of information, so the model learns to predict the next token from past context alone. This setup is essential for autoregressive generative language models like GPT. If the model were allowed to peek at future tokens, it would cheat during training by copying the very token it is supposed to predict, and it would fail at inference time, when those future tokens do not exist yet. Causal attention makes the model behave like a writer: it only knows what has been written so far, and it must guess the next word based on that history.
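To make the masking concrete, here is a minimal sketch in plain Python. It assumes the raw attention scores are already computed and given as a square list of lists; the function name `causal_attention_weights` and the toy scores are made up for illustration and do not come from any particular library.

```python
import math

def causal_attention_weights(scores):
    """Row-wise softmax over scores[i][j], with every position j > i masked out."""
    n = len(scores)
    weights = []
    for i in range(n):
        # Token i may attend to positions 0..i (itself and earlier tokens).
        visible = scores[i][: i + 1]
        m = max(visible)  # subtract the max for numerical stability
        exps = [math.exp(s - m) for s in visible]
        total = sum(exps)
        # Future positions get exactly zero weight.
        weights.append([e / total for e in exps] + [0.0] * (n - i - 1))
    return weights

# Uniform toy scores for a 4-token sequence (illustrative values only).
scores = [[0.0] * 4 for _ in range(4)]
weights = causal_attention_weights(scores)
for row in weights:
    print([round(w, 2) for w in row])
```

With uniform scores, row `i` spreads its weight evenly over the first `i + 1` positions and puts nothing on later ones, which is the lower-triangular pattern usually drawn for causal attention. Real implementations typically get the same effect by adding a large negative value to the masked scores before a single softmax.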

In bidirectional attention, a token can attend to both past and future tokens. Every token in the sequence can look at the full context. This is what encoder models like BERT use. Because each token sees the entire sequence, the model becomes very good at understanding sentence structure, resolving ambiguity, detecting relationships between words, and performing tasks like classification or question answering. Bidirectional attention lets the model behave like a reader: it has access to the whole sentence and can interpret meaning holistically.
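For comparison, a minimal sketch of the bidirectional case in plain Python: the same row-wise softmax, but with no positions masked. The function names and toy scores are again hypothetical and chosen only for illustration.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def bidirectional_attention_weights(scores):
    """Row-wise softmax over the full score matrix: no position is masked."""
    return [softmax(row) for row in scores]

# Uniform toy scores for a 3-token sequence (illustrative values only).
scores = [[0.0] * 3 for _ in range(3)]
weights = bidirectional_attention_weights(scores)

# The first token puts nonzero weight on the last token, i.e. it "sees the future".
first_token_weight_on_last = weights[0][2]
print(first_token_weight_on_last)
```

Every entry of `weights` is strictly positive here, so each token's representation can draw on every other token in the sequence, including later ones.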

More follow-up questions and other questions about LLMs are covered in this article, so make sure to check it out!