
Most agent failures in production are not model failures. They come from dropping a probabilistic component into a system with no structure, no validation, and no visibility.

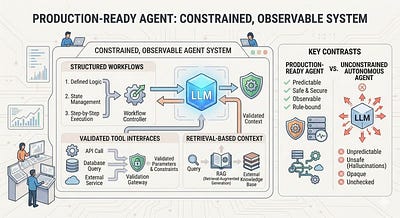

Let's have a look at what it takes to "architect" a production-ready agent.

A production-ready agent starts by constraining control flow. Instead of an open-ended loop, you define a finite set of states or a DAG where each step has a clear contract. For example: ClassifyIntent → FetchContext → DecideAction → ExecuteTool → Verify → Respond. The LLM is only used inside specific steps and is required to produce structured outputs with a schema. That allows the system to validate before proceeding. If the output is invalid, you retry, simplify, or fall back. This is what turns agent behavior from unpredictable to testable.
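Here is a minimal sketch of the validate, retry, fall back idea for the ClassifyIntent step. The intent labels, the `{"intent": ...}` schema, and the `llm` callable are all assumptions made for illustration; a real system would plug in its own model client and schema.

```python
import json
from typing import Callable

# The fixed flow from the text; each step can only move to the next one.
STEPS = ("ClassifyIntent", "FetchContext", "DecideAction",
         "ExecuteTool", "Verify", "Respond")
NEXT_STEP = dict(zip(STEPS, STEPS[1:]))  # "Respond" is terminal

# Hypothetical intent labels; the article does not define a schema.
ALLOWED_INTENTS = {"refund", "order_status", "other"}
FALLBACK_INTENT = "other"


def parse_intent(raw: str) -> str:
    """Validate the model's output against the step contract."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    intent = data.get("intent") if isinstance(data, dict) else None
    if not isinstance(intent, str) or intent not in ALLOWED_INTENTS:
        raise ValueError(f"schema violation: {data!r}")
    return intent


def classify_intent(llm: Callable[[str], str], message: str,
                    max_attempts: int = 2) -> str:
    """Call the model, validate, retry on invalid output, then fall back."""
    prompt = f'Reply with JSON {{"intent": "<label>"}} for: {message}'
    for _ in range(max_attempts):
        try:
            return parse_intent(llm(prompt))
        except ValueError:
            continue  # invalid output: try again
    return FALLBACK_INTENT  # never pass unvalidated output downstream
```

Because `llm` is just a callable, you can swap in a stub that returns canned strings and unit-test the retry and fallback paths without calling a model.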

Once the flow is bounded, you harden tool interactions. Tools are exposed as typed interfaces with strict input and output validation, timeouts, and retry policies. For example, get_order(order_id: string) rejects malformed inputs before execution, and process_refund may require an explicit confirmation state to prevent unintended actions. You also reduce risk by limiting tool choice. Instead of exposing 20 tools, you pre-filter to a small set based on rules or retrieval.
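To make that concrete, here is a rough sketch of the two tools mentioned above plus a rule-based tool filter. The `ORD-` ID format, the return shapes, and the intent-to-tool mapping are assumptions made for the example, and the tool bodies are stubs. Timeouts and retries are left out to keep it short.

```python
import re

# Assumed order ID format, purely for illustration.
ORDER_ID_PATTERN = re.compile(r"ORD-\d{6}")


class ToolInputError(ValueError):
    """Raised when a tool call fails input validation."""


def get_order(order_id: str) -> dict:
    """Reject malformed IDs before anything touches a backend."""
    if not isinstance(order_id, str) or not ORDER_ID_PATTERN.fullmatch(order_id):
        raise ToolInputError(f"malformed order_id: {order_id!r}")
    return {"order_id": order_id, "status": "shipped"}  # stubbed lookup


def process_refund(order_id: str, confirmed: bool = False) -> dict:
    """Refunds only execute once an explicit confirmation is present."""
    get_order(order_id)  # reuses the same input validation
    if not confirmed:
        return {"order_id": order_id, "status": "needs_confirmation"}
    return {"order_id": order_id, "status": "refunded"}  # stubbed side effect


# Hypothetical rule-based pre-filter: each intent sees only a few tools.
TOOLS_BY_INTENT = {
    "order_status": ["get_order"],
    "refund": ["get_order", "process_refund"],
}


def allowed_tools(intent: str) -> list[str]:
    """Unknown intents get no tools at all."""
    return TOOLS_BY_INTENT.get(intent, [])
```

The confirmation flag lives in the tool signature, not in the prompt, so the model cannot talk its way past it.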

Next is memory and context, treated as a retrieval pipeline rather than prompt stuffing. A common pattern is: generate a query, retrieve top-k documents, re-rank them, and pass only the most relevant results into the prompt. For instance, a coding agent might retrieve 20 files, re-rank to 5, and ignore the rest. This keeps context focused and reduces hallucination. Writes to memory are also controlled through filtering and deduplication.
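The retrieve, re-rank, trim pattern can be sketched in a few lines. This toy version uses word overlap for retrieval and Jaccard similarity for re-ranking as stand-ins; a real pipeline would more likely use embeddings and a dedicated re-ranker. All function names here are made up for the example.

```python
def tokenize(text: str) -> set[str]:
    return set(text.lower().split())


def retrieve(query: str, docs: list[str], k: int = 20) -> list[str]:
    """Cheap first pass: keep the k docs sharing the most words with the query."""
    q = tokenize(query)
    scored = [(len(q & tokenize(d)), d) for d in docs]
    scored = [pair for pair in scored if pair[0] > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in scored[:k]]


def rerank(query: str, candidates: list[str], n: int = 5) -> list[str]:
    """Second pass: re-score candidates (Jaccard here) and keep the top n."""
    q = tokenize(query)

    def jaccard(doc: str) -> float:
        t = tokenize(doc)
        union = q | t
        return len(q & t) / len(union) if union else 0.0

    return sorted(candidates, key=jaccard, reverse=True)[:n]


def build_context(query: str, docs: list[str], k: int = 20, n: int = 5) -> list[str]:
    """Only the re-ranked top n reach the prompt; the rest are ignored."""
    return rerank(query, retrieve(query, docs, k), n)


def write_memory(store: list[str], item: str, min_words: int = 3) -> bool:
    """Controlled write: drop trivial items and exact duplicates."""
    normalized = " ".join(item.lower().split())
    if len(normalized.split()) < min_words or normalized in store:
        return False
    store.append(normalized)
    return True
```

The defaults `k=20` and `n=5` mirror the coding-agent example above: pull 20 candidates, pass 5 into the prompt.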

Finally, you add observability and evaluation, which is what makes the system reliable over time. Every step logs prompts, tool calls, latency, and cost so you can trace and replay failures. You run evals on real workloads to catch regressions before deployment, and enforce safety outside the model with permission checks and output filters.
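As a rough illustration, per-step tracing can be a decorator that records inputs, outputs, errors, and latency, and an eval can be a pass rate over recorded cases. The `TRACE` list, `traced`, and `run_evals` are hypothetical names; in production you would send records to your tracing backend and also log token counts and cost, which this sketch skips.

```python
import functools
import time
from typing import Any, Callable

# In-memory trace for the example; swap for a real log sink.
TRACE: list[dict] = []


def traced(step_name: str) -> Callable:
    """Record one structured entry per step call, including failures."""
    def decorator(fn: Callable) -> Callable:
        @functools.wraps(fn)
        def wrapper(*args: Any, **kwargs: Any) -> Any:
            record = {"step": step_name, "args": repr(args), "kwargs": repr(kwargs)}
            start = time.perf_counter()
            try:
                result = fn(*args, **kwargs)
                record.update(ok=True, output=repr(result))
                return result
            except Exception as exc:
                record.update(ok=False, error=repr(exc))
                raise
            finally:
                record["latency_s"] = time.perf_counter() - start
                TRACE.append(record)
        return wrapper
    return decorator


def run_evals(agent: Callable[[str], Any], cases: list[tuple[str, Any]]) -> float:
    """Return the fraction of (input, expected) cases the agent gets right."""
    if not cases:
        raise ValueError("no eval cases provided")
    passed = sum(1 for given, expected in cases if agent(given) == expected)
    return passed / len(cases)
```

A deployment gate can then be as simple as refusing to ship when `run_evals` drops below the pass rate of the previous release.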

Key Takeaway:

Constrain the flow, enforce schemas at every boundary, rank and filter context, and instrument everything. The LLM is just one component inside a controlled system.