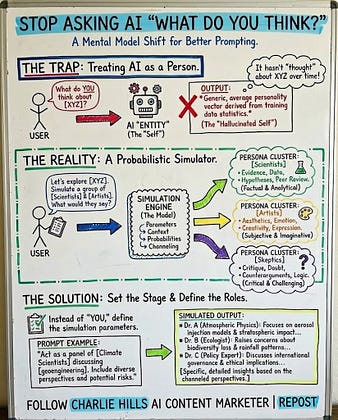

Stop asking AI "What do you think?"

There is no "you."

Andrej Karpathy nailed it:

LLMs aren't entities with opinions.

They're simulators.

When you ask "What do you think about X?"

↳ You force it to adopt a generic personality

↳ Derived from training data statistics

↳ A "hallucinated self" that never actually thought about X

The fix is simple:

Instead of: "What do you think about remote work?"

Try: "What would a group of HR directors, remote employees, and office managers say about remote work? What would they debate?"
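
If you send prompts from code, the same rewrite can be a tiny helper. This is a rough sketch, not a standard API: the function name `reframe_as_panel` and its parameters are made up for illustration.

```python
def reframe_as_panel(topic: str, groups: list[str]) -> str:
    """Turn an opinion question about `topic` into a multi-perspective prompt.

    Hypothetical helper: the name and signature are illustrative only.
    """
    if not groups:
        raise ValueError("name at least one group to simulate")
    if len(groups) <= 2:
        who = " and ".join(groups)
    else:
        # Join three or more groups as "a, b, and c".
        who = ", ".join(groups[:-1]) + ", and " + groups[-1]
    return f"What would a group of {who} say about {topic}? What would they debate?"


prompt = reframe_as_panel(
    "remote work",
    ["HR directors", "remote employees", "office managers"],
)
print(prompt)
```

It only builds the string. Sending it to a model is up to whatever client you already use.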

The model can channel many perspectives.

It just can't form its own.

Here's how to use this:

1. Define the simulation

"Act as a panel of [experts] discussing [topic]"

2. Assign distinct personas

Give each one a role, background, or bias

3. Let them disagree

Ask for counterarguments and debates

4. Extract the synthesis

"What would they agree on? Where do they differ?"
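
The four steps above can be sketched as a small prompt builder. Treat it as one possible shape, assuming you assemble prompts in Python: `Persona`, `build_panel_prompt`, and the example backgrounds are all hypothetical, not part of any library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Persona:
    """One simulated voice on the panel (illustrative structure)."""
    role: str         # who is speaking, e.g. "HR director"
    perspective: str  # background or bias that shapes their view


def build_panel_prompt(topic: str, personas: list[Persona]) -> str:
    """Assemble a panel-debate prompt following the four steps."""
    if len(personas) < 2:
        raise ValueError("a debate needs at least two personas")
    # Step 2: give each panelist a distinct role and bias.
    roster = "\n".join(f"- {p.role}: {p.perspective}" for p in personas)
    return "\n".join([
        # Step 1: define the simulation.
        f"Act as a panel of experts discussing {topic}.",
        "The panelists are:",
        roster,
        # Step 3: let them disagree.
        "Have each panelist state a position, then raise counterarguments to the others.",
        # Step 4: extract the synthesis.
        "Finally, summarize: What would they agree on? Where do they differ?",
    ])


# Example personas are invented for illustration.
panel = [
    Persona("HR director", "cares about retention and policy consistency"),
    Persona("Remote employee", "values flexibility and focus time"),
    Persona("Office manager", "responsible for space costs and in-person culture"),
]
prompt = build_panel_prompt("remote work", panel)
print(prompt)
```

Keeping personas as data makes it easy to swap a voice in or out and rerun the same debate.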

You'll get richer, more nuanced answers.

Not because the AI got smarter.

Because you stopped asking it to be something it's not.

Repost ♻️ to help someone prompt better.