
The attention mechanism in a standard Transformer is incredibly good at what it does: fetching highly specific information from anywhere in the prompt. The problem isn’t the attention operation itself; the problem is the storage bill it racks up.

The compute required for a standard Transformer layer (dense attention plus dense MLP) roughly scales as: 24nd² + 4n²d, where n is the sequence length and d is the model width. In deep networks with very long contexts, the quadratic n² term takes over, and this can ruin your finances.
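To get a feel for when the quadratic term starts to matter, here is a rough sketch of that formula in Python. The breakdown into projections, MLP, and attention is one common accounting that reproduces the 24 and 4 coefficients; it assumes a forward pass only, a multiply-add counted as 2 FLOPs, and an MLP hidden size of 4d. The function name `layer_flops` is made up for this example.

```python
def layer_flops(n: int, d: int) -> dict:
    """Approximate forward-pass FLOPs for one dense Transformer layer.

    Assumptions (not from any specific model): a multiply-add counts as
    2 FLOPs, the MLP hidden size is 4*d, and softmax/normalization costs
    are ignored.
    """
    projections = 8 * n * d * d  # Q, K, V, output projections: 4 matmuls of 2*n*d*d
    mlp = 16 * n * d * d         # two matmuls between d and 4*d: 2 * (2*n*d*4*d)
    attention = 4 * n * n * d    # QK^T scores plus weighted sum over V: 2 * (2*n*n*d)
    linear = projections + mlp   # the 24*n*d^2 part
    return {"linear": linear, "attention": attention, "total": linear + attention}


d = 4096
for n in (2_048, 6 * d, 131_072):
    f = layer_flops(n, d)
    share = f["attention"] / f["total"]
    print(f"n={n:>7}: attention share of layer FLOPs = {share:.1%}")
```

Setting the two terms equal gives n = 6d, so under these assumptions the attention term overtakes everything else once the context is longer than about six times the model width.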

There are several ways to address this issue. One of the most interesting is DeepSeek-V2’s Multi-head Latent Attention (MLA). Instead of storing massive Key and Value tensors for every token, what if we just store a highly compressed “summary” vector?

In standard attention, you cache the Keys and Values. In MLA, you project the token’s information down into a much smaller latent vector. During the decode phase, the GPU only appends this tiny vector to the cache. When it needs to calculate attention, it rapidly “up-projects” or reconstructs the Keys and Values on the fly.
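The decode loop described above can be sketched in a few lines of NumPy. This is a toy illustration, not DeepSeek's implementation: the sizes are arbitrary, the weights are random stand-ins for learned matrices, and the names (`W_down`, `W_up_k`, `W_up_v`, `decode_step`) are invented here. It also leaves out RoPE, the output projection, and everything else a real layer needs.

```python
import numpy as np

rng = np.random.default_rng(0)
d_model, d_c, n_heads, d_head = 64, 8, 4, 16  # illustrative sizes only

# Hypothetical weights; in a real model these are learned.
W_down = rng.standard_normal((d_model, d_c)) / np.sqrt(d_model)        # token -> latent
W_up_k = rng.standard_normal((d_c, n_heads * d_head)) / np.sqrt(d_c)   # latent -> keys
W_up_v = rng.standard_normal((d_c, n_heads * d_head)) / np.sqrt(d_c)   # latent -> values
W_q = rng.standard_normal((d_model, n_heads * d_head)) / np.sqrt(d_model)

latent_cache = []  # one small d_c-sized vector per token, instead of full K and V


def decode_step(h: np.ndarray) -> np.ndarray:
    """Attend from one new token (hidden state h, shape (d_model,)) over the cache."""
    latent_cache.append(h @ W_down)                      # only the latent is stored
    C = np.stack(latent_cache)                           # (t, d_c)
    K = (C @ W_up_k).reshape(len(C), n_heads, d_head)    # keys rebuilt on the fly
    V = (C @ W_up_v).reshape(len(C), n_heads, d_head)    # values rebuilt on the fly
    q = (h @ W_q).reshape(n_heads, d_head)
    scores = np.einsum("hd,thd->ht", q, K) / np.sqrt(d_head)
    weights = np.exp(scores - scores.max(axis=1, keepdims=True))  # stable softmax
    weights /= weights.sum(axis=1, keepdims=True)
    return np.einsum("ht,thd->hd", weights, V).reshape(-1)


for _ in range(5):
    out = decode_step(rng.standard_normal(d_model))

print("cached floats per token:", latent_cache[0].size)
print("full K+V floats per token would be:", 2 * n_heads * d_head)
```

With these toy sizes the cache holds 8 numbers per token where full keys and values would take 128, at the price of two extra matrix multiplications on every step.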

By replacing the large 2 g d_k term with a much smaller compressed dimension d_c, DeepSeek reported a staggering 93.3% reduction in KV cache size: “Compared with DeepSeek 67B, DeepSeek-V2 achieves significantly stronger performance, and meanwhile saves 42.5% of training costs, reduces the KV cache by 93.3%, and boosts the maximum generation throughput to 5.76 times.”
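As a back-of-the-envelope check on how swapping 2 g d_k for d_c shrinks the cache, here is the per-token, per-layer arithmetic. It assumes g is the number of key/value heads and d_k the per-head dimension, which is how the term reads. The numbers below are hypothetical and are not DeepSeek's configuration; the 93.3% figure compares two complete models, so this sketch does not try to reproduce it.

```python
def standard_cache_per_token(g: int, d_k: int) -> int:
    """Cached elements per token per layer: keys and values for g KV heads."""
    return 2 * g * d_k


def latent_cache_per_token(d_c: int) -> int:
    """Cached elements per token per layer when only the latent is stored."""
    return d_c


# Hypothetical sizes chosen for illustration only.
g, d_k, d_c = 8, 128, 512

standard = standard_cache_per_token(g, d_k)
latent = latent_cache_per_token(d_c)
reduction = 1 - latent / standard

print(f"standard: {standard} elements, latent: {latent} elements")
print(f"reduction: {reduction:.1%}")  # 75.0% for these made-up sizes
```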

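Unfortunately, this is not a free lunch. You are trading memory for compute. Reconstructing the keys and values requires an extra matrix multiplication. Furthermore, low-rank compression clashes with traditional position embeddings like RoPE (Rotary Position Embedding). RoPE rotates each key by a position-dependent matrix, and the DeepSeek-V2 paper notes that applying it to keys rebuilt from the latent stops the key up-projection from being folded into the query projection at inference time, so the keys of every cached token would have to be recomputed at each step. The workaround is a “decoupled” RoPE strategy, where a small, separate set of query and key dimensions carries the positional signal.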

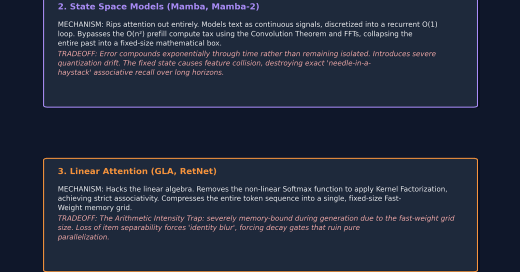

There are several other strategies to address the costs of self-attention, which we discuss here: