Make money doing the work you believe in

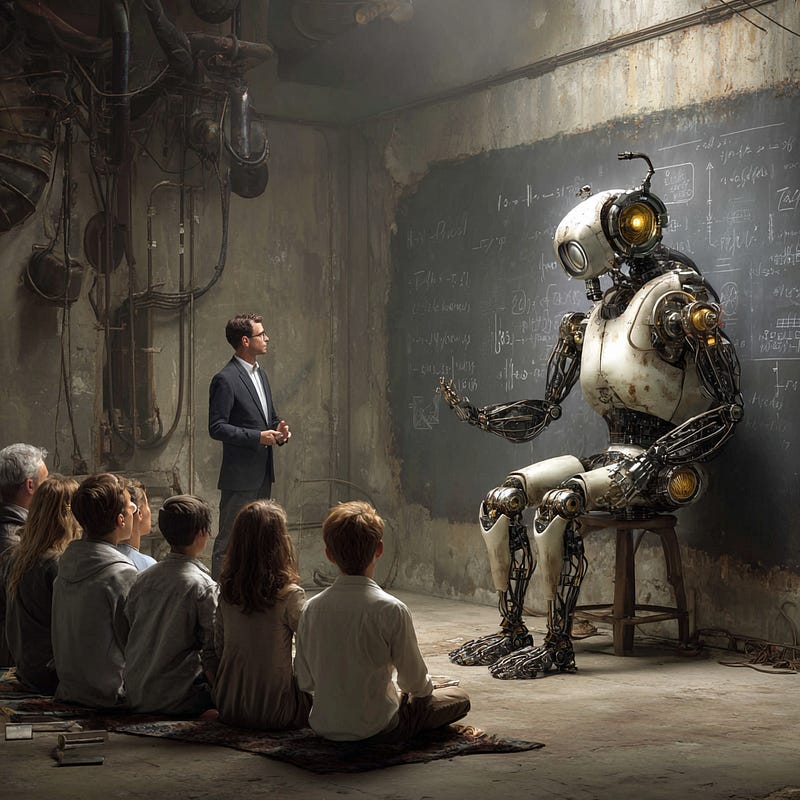

IT'S NOT MAGIC, IT'S MATHEMATICS

#GAIGlossary #constraints #Tokens #Hallucination

Most people talk about AI like it's magic or menace. It's neither. It's a system. Once you understand the core mechanics, the illusion drops away and the leverage appears.

—————————————

Start with tokens. AI doesn't read words. It processes tokens: chunks of language, often pieces of words, that carry structure and meaning. Everything you do with AI is shaped at that level. Clarity in, clarity out.
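
To make that concrete, here is a toy sketch of how text gets chopped into tokens. The vocabulary and the greedy longest-match rule are assumptions for illustration only; real tokenizers learn far larger vocabularies from data and use more refined algorithms.

```python
# Hypothetical toy vocabulary; real systems learn tens of thousands of pieces.
VOCAB = {"token", "iz", "ation", "un", "believ", "able", " "}

def tokenize(text, vocab=VOCAB):
    """Greedy longest-match split. Unknown characters become one-character tokens."""
    tokens = []
    i = 0
    while i < len(text):
        # Try the longest candidate first, then shrink until something matches.
        for j in range(len(text), i, -1):
            if text[i:j] in vocab:
                tokens.append(text[i:j])
                i = j
                break
        else:
            # Nothing in the vocabulary matched: fall back to a single character.
            tokens.append(text[i])
            i += 1
    return tokens

print(tokenize("tokenization"))   # ['token', 'iz', 'ation']
print(tokenize("unbelievable"))   # ['un', 'believ', 'able']
```

One word to you. Three units to the machine.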

Then there's the context window. Think of it as working memory. The model can only "see" a limited amount of information at once. Overflow it, and it forgets. Not randomly. Systematically. If you don't manage context, you don't control outcomes.
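
One common way applications manage this is to keep the most recent messages that fit a token budget and drop the oldest. The sketch below assumes that strategy and counts tokens by splitting on whitespace, which is a rough stand-in for a real tokenizer. The function name `fit_to_window` is hypothetical.

```python
def fit_to_window(messages, max_tokens, count=lambda m: len(m.split())):
    """Keep the newest messages whose combined token count fits the budget."""
    kept = []
    used = 0
    # Walk backwards from the newest message so recent context survives.
    for msg in reversed(messages):
        cost = count(msg)
        if used + cost > max_tokens:
            break  # everything older than this point is dropped
        kept.append(msg)
        used += cost
    return list(reversed(kept))

history = ["a b c", "d e", "f g h i"]
print(fit_to_window(history, max_tokens=6))   # ['d e', 'f g h i']
```

The oldest message falls out first. That is what systematic forgetting looks like under this particular strategy; other applications summarize or prioritize instead.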

Temperature is controlled randomness. Low temperature produces stable, predictable outputs. High temperature introduces variation, creativity, and risk. You are not just asking questions. You are tuning a probability engine.
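
A minimal sketch of the usual mechanism: the model's raw scores (logits) are divided by the temperature before being turned into probabilities with a softmax. The scores below are made up for illustration, and the temperature must be greater than zero.

```python
import math

def softmax_with_temperature(logits, temperature):
    """Convert raw scores to probabilities; lower temperature sharpens the distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

scores = [2.0, 1.0, 0.5]  # hypothetical scores for three candidate tokens
print(softmax_with_temperature(scores, 0.2))  # top candidate dominates
print(softmax_with_temperature(scores, 2.0))  # probabilities spread out
```

Same scores, different dial setting, different odds.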

Hallucination isn't a glitch. It's the system doing exactly what it always does, generating coherence, in cases where coherence and accuracy diverge. AI doesn't retrieve truth. It generates plausibility. If you don't supply constraints, it will invent them.

RAG (retrieval-augmented generation) grounds the system. It pulls in relevant external information and feeds it into the model at runtime. This is how AI becomes useful in real workflows.
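
Stripped to its skeleton, the pattern is: find relevant text, then put it in the prompt. The sketch below assumes a toy retriever that ranks documents by shared words; production systems typically use embeddings and a vector index. The function names, documents, and prompt wording are all hypothetical.

```python
def retrieve(query, documents, k=1):
    """Rank documents by how many lowercase words they share with the query."""
    query_words = set(query.lower().split())
    ranked = sorted(
        documents,
        key=lambda doc: len(query_words & set(doc.lower().split())),
        reverse=True,
    )
    return ranked[:k]

def build_prompt(query, documents, k=1):
    """Inject the retrieved text into the prompt the model will actually see."""
    context = "\n".join(retrieve(query, documents, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"

docs = [
    "Refunds are processed within 14 days.",
    "Our office is in Lisbon.",
]
print(build_prompt("how are refunds processed", docs))
```

The model never "knows" the refund policy. It is handed the policy at runtime.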

Alignment is the incentive layer. The system isn't just generating coherent output. It's generating coherent output that has been shaped, through training, to match what humans reward. This shaping is a constraint, and like every other constraint in this stack, it determines what you get back. Misaligned incentives produce misaligned outputs, no matter how fluent the prose.

—————————————

Tokens define structure. Context defines scope. Temperature defines behavior. Retrieval defines knowledge. Alignment defines intent. AI is not thinking. It is simulating understanding under constraint.

Tokens: Language is discretized into machine-operable units

Context Window: Output is bounded by working memory

Temperature: Controlled entropy in generative systems

Hallucination: Unconstrained inference under probabilistic pressure

RAG: Externalized memory injection

Alignment: Incentive-shaped output under constraint

Control the constraints, and you refine the results.

—————————————

AI doesn't engage in cognition as you know it. However fluently it imitates human language, and however cogent it sounds, it doesn't understand what you are talking about.

It resolves probabilities within boundaries you define through your prompting. It's not magic. It's mathematics.

—————————————

#StrangeAttractor #CognitiveDissident