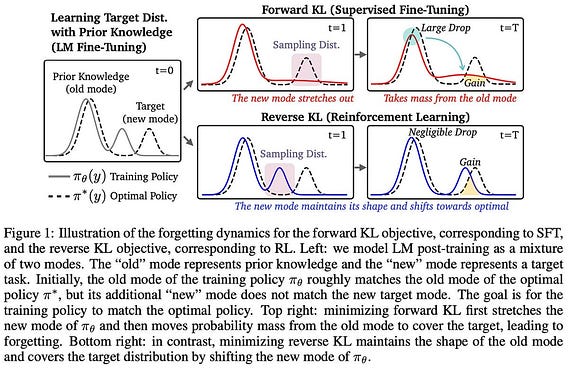

The training objectives for supervised fine-tuning (SFT) and reinforcement learning (RL) can be interpreted in terms of KL divergence. Up to a constant, SFT minimizes forward KL divergence, while RL minimizes reverse KL divergence. This equivalence provides intuition for the distinct mechanics of these learning algorithms.

Forward & Reverse KL Definition

The KL divergence measures how much one probability distribution differs from another. It is not symmetric, so for two probability distributions P and Q we can define both a forward and a reverse KL divergence:

Forward: D_KL(P || Q) = E_{x ~ P} [log(P(x)) - log(Q(x))]

Reverse: D_KL(Q || P) = E_{x ~ Q} [log(Q(x)) - log(P(x))]

In the context of LLMs, these are usually distributions over completions. The key difference between forward and reverse KL divergence lies in the sampling: the distribution from which we sample for the expectation changes. Depending on whether we use forward or reverse KL, we are sampling either from our dataset (offline) or from the LLM itself (on-policy / online). We will see this directly in the context of SFT and RL training below.
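To make the role of sampling concrete, here is a minimal sketch on a toy three-outcome distribution. The values of P and Q, the helper names, and the sample count are all illustrative assumptions, not anything from a real model. It computes both divergences exactly and then estimates each by sampling from the distribution that appears first in the KL.

```python
import math
import random

def kl(p, q):
    """Exact D_KL(p || q) for discrete distributions given as probability lists."""
    return sum(pi * (math.log(pi) - math.log(qi)) for pi, qi in zip(p, q) if pi > 0)

def kl_monte_carlo(p, q, n_samples, rng):
    """Estimate D_KL(p || q) by sampling x ~ p and averaging log p(x) - log q(x)."""
    samples = rng.choices(range(len(p)), weights=p, k=n_samples)
    return sum(math.log(p[x]) - math.log(q[x]) for x in samples) / n_samples

# Hypothetical toy distributions over three "completions".
P = [0.5, 0.4, 0.1]
Q = [0.8, 0.1, 0.1]
rng = random.Random(0)  # fixed seed so the estimates are reproducible

forward_exact = kl(P, Q)                           # expectation under P
reverse_exact = kl(Q, P)                           # expectation under Q
forward_est = kl_monte_carlo(P, Q, 20_000, rng)    # samples drawn from P
reverse_est = kl_monte_carlo(Q, P, 20_000, rng)    # samples drawn from Q

print(f"forward: exact={forward_exact:.4f} estimate={forward_est:.4f}")
print(f"reverse: exact={reverse_exact:.4f} estimate={reverse_est:.4f}")
```

The two directions give different values for the same pair of distributions, and the only change between the two estimators is which distribution the samples come from.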

SFT ≈ forward KL

Let’s call our data distribution p* and the model being trained p_t, so that sampling an example from our SFT dataset is written x ~ p*. With this notation, the training objective for SFT is the negative log-likelihood:

E_{x ~ p*} [-log(p_t(x))]

Then, we can show the following for the relationship between this objective and the forward KL divergence:

D_KL(p* || p_t) = constant - E_{x ~ p*} [log(p_t(x))]

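Here the second term on the right-hand side is exactly our SFT objective, and the constant is E_{x ~ p*} [log(p*(x))], which does not depend on the model p_t. So the SFT objective equals the forward KL divergence up to a constant, and minimizing either one gives the same result!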
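The identity can be checked numerically on a toy example. This is a small sketch with made-up distributions (p_star, p_t_a, and p_t_b are hypothetical). It confirms that forward KL and negative log-likelihood differ only by a term that depends on the data alone, so both objectives rank candidate models identically.

```python
import math

def nll(p_data, p_model):
    """SFT objective: E_{x ~ p_data}[-log p_model(x)]."""
    return -sum(pd * math.log(pm) for pd, pm in zip(p_data, p_model))

def forward_kl(p_data, p_model):
    """D_KL(p_data || p_model) = E_{x ~ p_data}[log p_data(x) - log p_model(x)]."""
    return sum(pd * (math.log(pd) - math.log(pm)) for pd, pm in zip(p_data, p_model))

# Hypothetical data distribution and two candidate models.
p_star = [0.6, 0.3, 0.1]
p_t_a = [0.2, 0.5, 0.3]
p_t_b = [0.5, 0.4, 0.1]

# The constant is E_{x ~ p*}[log p*(x)]; it involves only the data distribution.
constant = sum(ps * math.log(ps) for ps in p_star)

gap_a = forward_kl(p_star, p_t_a) - nll(p_star, p_t_a)
gap_b = forward_kl(p_star, p_t_b) - nll(p_star, p_t_b)
print(gap_a, gap_b, constant)  # all three agree up to floating-point error
```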

RL ≈ reverse KL

Let’s denote our current policy in RL as p_t and our reference policy as p_ref. We are trying to maximize the following objective:

E_{x ~ p_t} [r(x)] - beta D_KL(p_t || p_ref)

Where r(x) is the reward for completion x and beta is the scaling factor for KL regularization. Note that this KL divergence is a regularization term inside the RL objective; it is separate from the relation between KL divergence and our training objectives that we are exploring. From here, we can show the following:

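D_KL(p_t || p) = - (1 / beta) E_{x ~ p_t} [r(x)] + D_KL(p_t || p_ref) + constant

Here p is the target distribution p(x) ∝ p_ref(x) exp(r(x) / beta), that is, the reference policy reweighted toward high-reward completions, and the constant is the log of its normalizer, which does not depend on p_t. Because we now sample from the model p_t rather than from the data, this is the reverse KL divergence relative to the SFT expression. The equality shows that this divergence equals the negative of our RL objective scaled by 1 / beta, plus a constant, so maximizing the RL objective is the same as minimizing this reverse KL divergence.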
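The identity above can also be verified on a toy example. The sketch below assumes the distribution p on the left-hand side is the standard closed-form optimum of the KL-regularized objective, p(x) ∝ p_ref(x) exp(r(x) / beta). The text does not spell this out, so treat it as an assumption. All numeric values are made up for illustration.

```python
import math

def kl(p, q):
    """D_KL(p || q) for discrete distributions given as probability lists."""
    return sum(pi * (math.log(pi) - math.log(qi)) for pi, qi in zip(p, q) if pi > 0)

# Hypothetical setup: three completions, their rewards, and two policies.
beta = 0.5
p_ref = [0.5, 0.3, 0.2]
r = [1.0, 0.0, 2.0]
p_t = [0.2, 0.1, 0.7]

# Assumed target distribution: p(x) = p_ref(x) * exp(r(x) / beta) / Z.
unnormalized = [pr * math.exp(ri / beta) for pr, ri in zip(p_ref, r)]
Z = sum(unnormalized)
p_target = [u / Z for u in unnormalized]

expected_reward = sum(pt * ri for pt, ri in zip(p_t, r))
rl_objective = expected_reward - beta * kl(p_t, p_ref)

lhs = kl(p_t, p_target)
rhs = -(1.0 / beta) * expected_reward + kl(p_t, p_ref) + math.log(Z)
print(lhs, rhs)                             # agree up to floating-point error
print(lhs, -rl_objective / beta + math.log(Z))  # same identity, regrouped
```

Under this assumption the constant is log(Z), and the reverse KL is the negated RL objective divided by beta plus that constant, so a policy that raises the RL objective lowers the reverse KL by a proportional amount.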

Mode-Seeking versus Mode-Covering

What does this actually tell us about these training objectives?

SFT minimizes negative log-likelihood over a dataset, which is equivalent to minimizing the forward KL divergence. This is a mode-covering objective. Our model is heavily penalized for assigning low probability to any completion that is found in the data—the model must “spread” its probability mass across all possible completions or modes in the data.

On the other hand, RL maximizes the rewards of on-policy completions. This is equivalent to a reverse KL objective and is mode-seeking. The model emphasizes high-reward outputs, even at the cost of ignoring some output modes.
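The difference between the two behaviors shows up clearly when a single-mode model is fit to a two-mode target. The following is a rough sketch under simplifying assumptions: a discretized one-dimensional grid, a hypothetical bimodal target with modes at -3 and +3, and a brute-force search over Gaussian-shaped candidates instead of gradient-based training.

```python
import math

# Discretized support: 201 points on [-10, 10].
xs = [i * 0.1 for i in range(-100, 101)]

def normalize(weights):
    total = sum(weights)
    return [w / total for w in weights]

def gauss(mu, sigma):
    """Gaussian-shaped distribution on the grid, normalized to sum to 1."""
    return normalize([math.exp(-0.5 * ((x - mu) / sigma) ** 2) for x in xs])

def kl(p, q, floor=1e-300):
    """D_KL(p || q); q is floored to avoid log(0) when a tail underflows."""
    return sum(pi * (math.log(pi) - math.log(max(qi, floor)))
               for pi, qi in zip(p, q) if pi > 0.0)

# Hypothetical bimodal target with equal-weight modes at -3 and +3.
target = normalize([a + b for a, b in zip(gauss(-3.0, 0.5), gauss(3.0, 0.5))])

# Candidate single-mode models: mu in [-4, 4], sigma in [0.3, 5.0].
candidates = [(-4.0 + 0.5 * i, 0.3 + 0.1 * j) for i in range(17) for j in range(48)]

# Forward KL (SFT-like): expectation under the target.
fwd_mu, fwd_sigma = min(candidates, key=lambda c: kl(target, gauss(*c)))
# Reverse KL (RL-like): expectation under the model.
rev_mu, rev_sigma = min(candidates, key=lambda c: kl(gauss(*c), target))

print(f"forward KL fit: mu={fwd_mu:.1f}, sigma={fwd_sigma:.1f}")
print(f"reverse KL fit: mu={rev_mu:.1f}, sigma={rev_sigma:.1f}")
```

In this toy setting the forward KL fit is a wide distribution centered between the modes so that both are covered, while the reverse KL fit is a narrow distribution sitting on one mode and ignoring the other.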

In SFT, the model’s loss grows without bound as the probability we assign to any completion in the dataset approaches zero. This is due to the shape of the negative log-likelihood curve! Such a property is not true of RL, as we are simply maximizing the reward of on-policy completions. Assigning near-zero probability to a completion will prevent this particular completion from being sampled during RL, but reward can still be maximized over completions that are covered.
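A two-completion toy case illustrates the contrast. This sketch assumes a dataset containing completions A and B equally often, and a reward of 1.0 for both; the setup and function names are hypothetical. It tracks the SFT loss and the expected reward as the model's probability of B shrinks.

```python
import math

def sft_loss(p_b):
    """Negative log-likelihood when the dataset is half A and half B."""
    return -0.5 * math.log(1.0 - p_b) - 0.5 * math.log(p_b)

def expected_reward(p_b, r_a=1.0, r_b=1.0):
    """Expected reward of on-policy samples; both completions score equally."""
    return (1.0 - p_b) * r_a + p_b * r_b

# The model puts less and less probability on completion B.
probs_b = [0.5, 1e-2, 1e-4, 1e-8]
losses = [sft_loss(p) for p in probs_b]
rewards = [expected_reward(p) for p in probs_b]

for p, loss, reward in zip(probs_b, losses, rewards):
    print(f"p(B)={p:.0e}  SFT loss={loss:.3f}  expected reward={reward:.3f}")
```

The SFT loss keeps climbing as p(B) shrinks, while the expected reward stays the same because completion A alone already earns full reward.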