
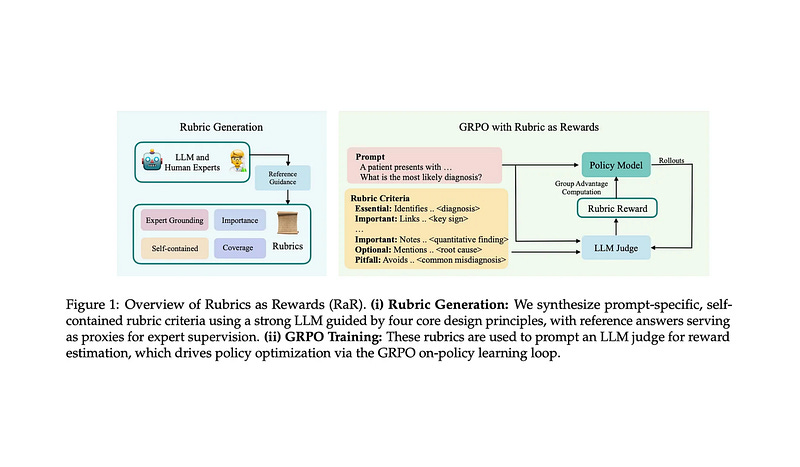

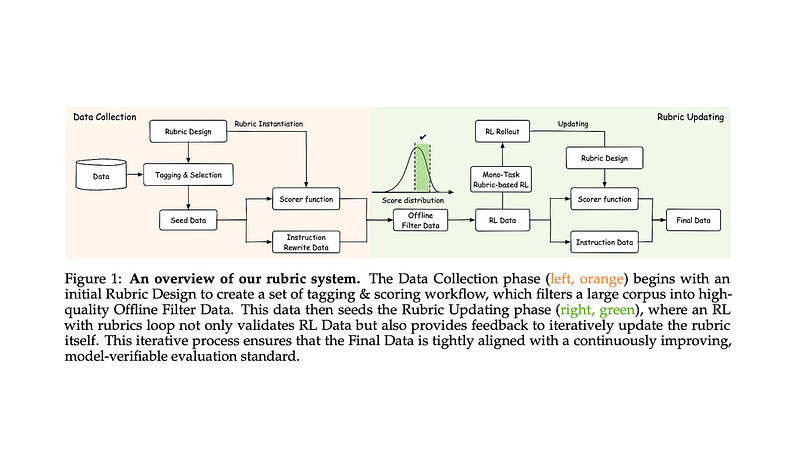

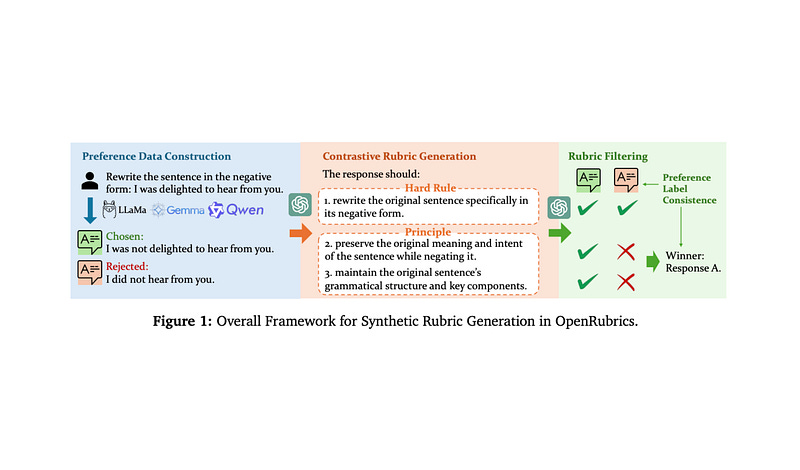

I've been reading a lot about rubrics-as-rewards (RaR) for RL. Some of my favorite papers (so far):

Most of the added technical complexity of RaR comes from reward modeling rather than from RL itself. If we can get a reliable reward signal, RaR works well, but teaching a model to perform granular, instance-level evaluation is tough. Generalizing these evaluation capabilities across arbitrary domains is even tougher, especially in highly subjective domains. The reward model also needs to stay robust to reward hacking by the policy in large-scale RL runs.
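To make "reward signal from a rubric" concrete, here is a minimal sketch of one simple aggregation scheme: a judge scores each rubric criterion, and the scores are combined into a weighted average in [0, 1]. This is an illustrative assumption, not the method of any specific paper. The `Criterion` type, the `Judge` signature, `rubric_reward`, and the toy `keyword_judge` are all hypothetical names; in practice the judge would be an LLM or generative reward model, which is exactly where the hard part lives.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Criterion:
    description: str  # natural-language rubric item (hypothetical example format)
    weight: float     # relative importance of this item (hypothetical scale)


# Hypothetical judge signature: (prompt, response, criterion) -> score in [0, 1].
# In a real RaR setup this role would be played by an LLM judge / generative reward model.
Judge = Callable[[str, str, Criterion], float]


def rubric_reward(prompt: str, response: str, rubric: List[Criterion], judge: Judge) -> float:
    """Aggregate per-criterion judge scores into one scalar reward in [0, 1]."""
    total_weight = sum(c.weight for c in rubric)
    if total_weight <= 0:
        raise ValueError("rubric weights must sum to a positive value")
    score = 0.0
    for c in rubric:
        s = judge(prompt, response, c)
        # Clip so a miscalibrated judge cannot push the reward outside [0, 1].
        s = min(max(s, 0.0), 1.0)
        score += c.weight * s
    return score / total_weight


def keyword_judge(prompt: str, response: str, criterion: Criterion) -> float:
    # Toy stand-in for a generative reward model (illustration only):
    # the criterion "passes" if its last word appears in the response.
    keyword = criterion.description.split()[-1].lower()
    return 1.0 if keyword in response.lower() else 0.0


if __name__ == "__main__":
    rubric = [
        Criterion("Mentions dosage", 2.0),
        Criterion("Mentions contraindications", 1.0),
    ]
    # Only the first (weight 2) criterion passes, so the reward is 2 / 3.
    print(rubric_reward("q", "The dosage is 5mg.", rubric, keyword_judge))
```

Normalizing by the total weight keeps rewards on the same scale across prompts whose rubrics have different numbers of items; swapping `keyword_judge` for a learned judge is where the reward-modeling difficulty (and hacking risk) comes in.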

In my opinion, new developments in this space are likely to come from advancing the frontier of (generative) reward models rather than RL. So much to be done.

Feb 4 at 4:49 AM
