RL with evolving rubrics (RLER) in Dr. Tulu is a great step in the direction I expect Rubrics-as-Rewards (RaR) to go.

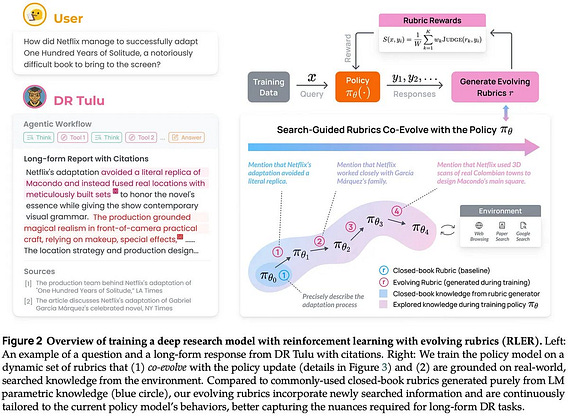

At a high level, the goal of using rubrics for RL is to generalize RL with verifiable rewards to non-verifiable domains. Instead of using deterministic rules, we can use an LLM judge equipped with a detailed scoring prompt (i.e., a rubric) to provide our reward signal for RL.

In rubric-based RL setups, an LLM generates a per-prompt rubric. The rubric is an itemized set of criteria (usually pass / fail rules that can be evaluated with an LLM) plus weights so the criteria can be aggregated into a single score. This structure makes rewards more reliable, interpretable, and granular than a single holistic judgment (e.g., vanilla LLM-as-a-Judge).
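To make the aggregation step concrete, here is a minimal sketch of turning pass / fail verdicts into one reward. The `Criterion` class, the `rubric_score` function, and the choice to normalize by total weight are illustrative assumptions, not Dr. Tulu's actual code; in practice the verdicts would come from an LLM judge.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Criterion:
    """One rubric item (hypothetical structure, for illustration only)."""
    description: str
    weight: float  # assumed positive here


def rubric_score(criteria: List[Criterion], verdicts: List[bool]) -> float:
    """Return the weighted fraction of criteria that passed, in [0, 1].

    `verdicts[i]` is the judge's pass / fail decision for `criteria[i]`.
    Normalizing by the total weight is an assumption of this sketch.
    """
    if len(criteria) != len(verdicts):
        raise ValueError("need exactly one verdict per criterion")
    total = sum(c.weight for c in criteria)
    if total <= 0:
        raise ValueError("total weight must be positive")
    earned = sum(c.weight for c, ok in zip(criteria, verdicts) if ok)
    return earned / total


criteria = [
    Criterion("Cites at least one primary source", 2.0),
    Criterion("States the main limitation", 1.0),
    Criterion("Answers the question directly", 1.0),
]
reward = rubric_score(criteria, [True, False, True])  # 3.0 of 4.0 weight earned
```

Because each criterion is judged separately, the per-item verdicts can be logged alongside the scalar reward, which is where the interpretability comes from.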

RLER goes further by maintaining a rubric buffer per prompt throughout training. It starts with a set of search-grounded rubrics generated by an LLM with internet search tools. This grounding is especially important for Deep Research tasks, which are the focus of Dr. Tulu.

At each training step, an LLM proposes new / evolved rubrics using the prompt, the GRPO rollout group, and the current active rubrics as context. It can add:

- Positive rubrics that capture newly discovered, relevant knowledge or behaviors not covered yet by any rubric.

- Negative rubrics that target recurring failures or undesirable behaviors (e.g., reward hacking).

By allowing rubrics to evolve, we let the evaluation adapt to on-policy behavior: when the policy shifts (e.g., toward reward hacking), the rubric can shift with it.
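The per-step evolution described above can be sketched roughly as follows. Everything here is hypothetical scaffolding: `Rubric`, `RubricBuffer`, `evolve_step`, and the `propose` callable (a stand-in for the LLM call that sees the prompt, the rollout group, and the active rubrics) are names invented for illustration, and de-duplicating by rubric text is an assumption of this sketch.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Rubric:
    text: str
    kind: str  # "positive" or "negative"


@dataclass
class RubricBuffer:
    """Per-prompt buffer: seed rubrics plus rubrics added during training."""
    initial: List[Rubric]
    evolved: List[Rubric] = field(default_factory=list)

    def active(self) -> List[Rubric]:
        return self.initial + self.evolved


def evolve_step(
    buffer: RubricBuffer,
    prompt: str,
    rollouts: List[str],
    propose: Callable[[str, List[str], List[Rubric]], List[Rubric]],
) -> RubricBuffer:
    """Ask the proposer for new rubrics and add the ones not already present."""
    known = {r.text for r in buffer.active()}
    for rubric in propose(prompt, rollouts, buffer.active()):
        if rubric.kind not in ("positive", "negative"):
            raise ValueError(f"unknown rubric kind: {rubric.kind!r}")
        if rubric.text not in known:  # skip duplicates (assumption)
            buffer.evolved.append(rubric)
            known.add(rubric.text)
    return buffer
```

Passing the proposer in as a callable keeps the sketch independent of any particular LLM client; a real setup would call the rubric-generating model there.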

To keep the buffer from growing without bound, RLER maintains an active set of rubrics that always includes the initial search-based rubrics. The remaining slots go to the most discriminative evolved rubrics, as measured by the standard deviation of each rubric’s rewards over the GRPO group. Rubrics whose rewards have zero variance over the group are dropped because they cannot distinguish between rollouts, and the rest are ranked by standard deviation to select the top-K.
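A minimal sketch of this filtering rule, under stated assumptions: `select_active_rubrics` is a hypothetical helper, rubrics are identified by string ids, `k` is taken to be the number of slots available to evolved rubrics (the post does not say whether K counts the seed rubrics), and population standard deviation is used.

```python
import statistics
from typing import Dict, List


def select_active_rubrics(
    initial_ids: List[str],
    evolved_rewards: Dict[str, List[float]],
    k: int,
) -> List[str]:
    """Keep all seed rubrics plus the k most discriminative evolved ones.

    `evolved_rewards[rid]` holds that rubric's reward for each rollout
    in the GRPO group.
    """
    scored = []
    for rid, rewards in evolved_rewards.items():
        std = statistics.pstdev(rewards)
        if std > 0:  # zero-variance rubrics carry no signal and are dropped
            scored.append((std, rid))
    # Highest standard deviation first; ties keep insertion order.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return list(initial_ids) + [rid for _, rid in scored[:k]]


group_rewards = {
    "always_passes": [1.0, 1.0, 1.0, 1.0],  # zero variance, dropped
    "splits_group": [0.0, 1.0, 0.0, 1.0],   # most discriminative
    "rare_failure": [1.0, 1.0, 1.0, 0.0],
}
active = select_active_rubrics(["seed_rubric"], group_rewards, k=1)
```

With `k=1` only `splits_group` joins the seed rubric; raising `k` to 2 would also admit `rare_failure`, while `always_passes` is excluded regardless.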

The key appeal of this approach is that you don’t need to fully specify a perfect rubric upfront. You seed training with a reasonable set of initial criteria, then let the system refine the rubric as it observes what the policy actually does.