
Rubric-based RL is one of the most active topics in AI research because it extends the benefits of large-scale RL training to non-verifiable domains. Here’s how it works…

For full details, see my new writeup on this topic:

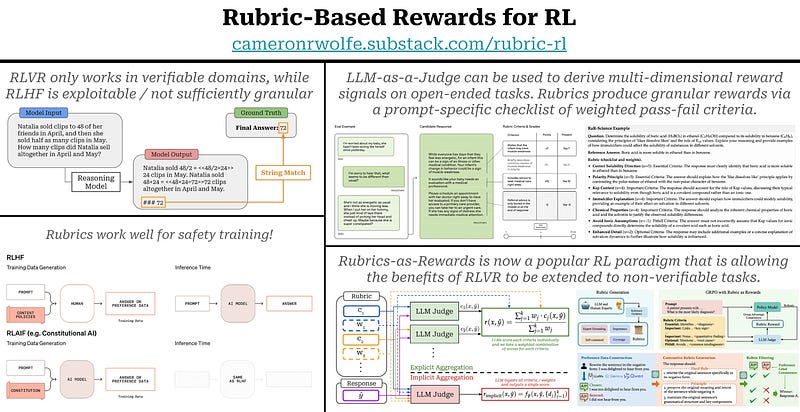

Reinforcement learning with verifiable rewards (RLVR) has driven many recent advances in reasoning models, but verifiable rewards have limitations: the same properties that make RLVR reliable confine it to domains with clean, automatically checkable outcomes (e.g., math and code). Many important applications (e.g., creative writing or scientific reasoning) are not verifiable, making RLVR difficult to apply directly.
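To make "automatically checkable" concrete, here is a minimal sketch of a verifiable reward for math. It assumes (hypothetically) that the model writes its final answer as `\boxed{...}` and that exact string match against a reference answer is good enough; real verifiers usually normalize answers (whitespace, fractions, units) before comparing. The function names are illustrative, not from any particular library.

```python
import re
from typing import Optional


def extract_boxed_answer(completion: str) -> Optional[str]:
    """Return the contents of the last \\boxed{...} in a completion, or None."""
    # Assumed convention: the final answer is wrapped in \boxed{...} with no nested braces.
    matches = re.findall(r"\\boxed\{([^{}]*)\}", completion)
    return matches[-1].strip() if matches else None


def verifiable_reward(completion: str, reference: str) -> float:
    """Binary reward: 1.0 if the extracted answer exactly matches the reference, else 0.0."""
    answer = extract_boxed_answer(completion)
    return 1.0 if answer is not None and answer == reference.strip() else 0.0


print(verifiable_reward(r"... so the total is \boxed{42}", "42"))  # 1.0
print(verifiable_reward(r"... so the total is \boxed{41}", "42"))  # 0.0
```

Nothing comparable exists for a short story or a scientific argument: there is no single reference string to match against, which is exactly the gap the rest of this post is about.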

Reward models & RLHF. We typically turn to RLHF for training LLMs in open-ended settings. RLHF replaces deterministic verifiers with a learned reward model trained on preference data. We can easily collect preference data in any domain, but this approach has limitations:

- A large volume of preference data must be collected.

- We lose granular control over the alignment criteria—preferences are expressed in aggregate over a large volume of data rather than via explicit criteria.

- The reward model can overfit to artifacts (e.g., response length, formatting, etc.) and generally introduces more risk of reward hacking.
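For reference, a common way to train a reward model on preference data is the Bradley-Terry objective, where the probability that the chosen response beats the rejected one is modeled as the sigmoid of their reward difference. The sketch below assumes that formulation (the post does not specify a loss) and operates on scalar rewards rather than a real model; the example reward values are made up for illustration.

```python
import math


def bradley_terry_loss(r_chosen: float, r_rejected: float) -> float:
    """-log P(chosen beats rejected), where P = sigmoid(r_chosen - r_rejected)."""
    margin = r_chosen - r_rejected
    # -log(sigmoid(m)) = log(1 + exp(-m)); branch on the sign so exp() never overflows.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


# Illustrative (made-up) reward-model outputs for (chosen, rejected) pairs.
pairs = [(1.3, 0.2), (0.4, 0.9), (2.0, -1.0)]
mean_loss = sum(bradley_terry_loss(c, r) for c, r in pairs) / len(pairs)
print(round(mean_loss, 4))
```

Note that the loss only sees which response was preferred, never why. That is the "aggregate" signal described above: the criteria behind each preference stay implicit, and any spurious feature that correlates with being chosen (such as length) can be absorbed into the reward.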

LLM-as-a-Judge & Rubrics. Instead of training a reward model, we can use a strong LLM or reasoning model directly as the evaluator: we simply prompt the model to score an output (i.e., an LLM-as-a-Judge setup). For complex, open-ended tasks, the reward signal tends to be multi-dimensional. So, rather than asking for one holistic score, we can break the evaluation into a set of explicit scoring criteria and score each criterion separately. This criterion checklist + scoring guidance is called a rubric. Unlike standard LLM-as-a-Judge, rubrics are often unique to each prompt being evaluated. Scoring at this level of granularity tends to make the reward more reliable than a single holistic score.
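A minimal sketch of rubric-based scoring, under a few stated assumptions: each criterion carries a weight, the judge returns a per-criterion score in [0, 1], and the final reward is the weighted average. Weighted averaging is just one plausible aggregation choice. `Criterion`, `rubric_reward`, and `toy_judge` are hypothetical names; `toy_judge` is a keyword-matching stand-in where a real setup would prompt an LLM judge with the response and criterion.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Criterion:
    description: str  # one explicit check, e.g. "citation: cites a primary source"
    weight: float     # relative importance of this check (assumed weighting scheme)


def rubric_reward(response: str, rubric: List[Criterion],
                  judge: Callable[[str, str], float]) -> float:
    """Score each criterion separately with the judge, then take a weighted average.

    `judge(response, criterion)` is assumed to return a score in [0, 1], so the
    aggregated reward is also in [0, 1].
    """
    total_weight = sum(c.weight for c in rubric)
    if total_weight <= 0:
        raise ValueError("rubric weights must sum to a positive value")
    weighted = sum(c.weight * judge(response, c.description) for c in rubric)
    return weighted / total_weight


def toy_judge(response: str, criterion: str) -> float:
    """Hypothetical stand-in for an LLM judge call.

    This toy version only checks whether the criterion's leading keyword
    (the text before ':') appears in the response.
    """
    keyword = criterion.split(":")[0].strip().lower()
    return 1.0 if keyword in response.lower() else 0.0


# A prompt-specific rubric (illustrative criteria and weights).
rubric = [
    Criterion("citation: cites at least one primary source", weight=2.0),
    Criterion("caveat: acknowledges a limitation of the evidence", weight=1.0),
]
print(rubric_reward("See the citation in [1].", rubric, toy_judge))  # citation met, caveat missed -> 2/3
```

Keeping criteria as separate judge calls means each score can be inspected on its own, which is the granular control that a single learned reward model gives up.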

Early attempts. The idea of using rubrics as a reward signal for training LLMs is not new. This approach was frequently used for safety training (e.g., Constitutional AI and Deliberative Alignment). Safety criteria are constantly changing, which means we would have to constantly collect new preference data to apply RLHF. Instead, we can provide the safety criteria as a rubric to a powerful reasoning model and derive the reward signal from this model.

Rubrics-as-Rewards is now a very popular research topic that aims to extend RLVR to non-verifiable domains. The approach is simple:

1. Generate (typically using an LLM with optional human intervention) a prompt-specific rubric for each prompt in our dataset.

2. Use an LLM judge to assign a reward to on-policy completions using this rubric.

3. Perform standard RL training (e.g., with GRPO), using the judge model’s rubric scores as the reward.
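The three steps can be sketched as a single training-loop iteration. This assumes GRPO-style group-relative advantages (each completion's reward standardized against the other completions for the same prompt) and treats the policy sampler and rubric judge as hypothetical callables; the actual policy-gradient update (clipped ratio, KL penalty, optimizer step) is deliberately omitted.

```python
import statistics
from typing import Callable, List, Tuple


def group_relative_advantages(rewards: List[float], eps: float = 1e-6) -> List[float]:
    """GRPO-style advantages: standardize each reward against its own group."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)  # population std; implementations differ on this detail
    return [(r - mean) / (std + eps) for r in rewards]


def rubric_rl_step(
    prompt: str,
    rubric: str,
    sample: Callable[[str], str],               # hypothetical: draws one on-policy completion
    judge_score: Callable[[str, str], float],   # hypothetical: rubric-based LLM judge
    group_size: int = 4,
) -> Tuple[List[str], List[float]]:
    # Step 1 happens beforehand: `rubric` was generated for this specific prompt.
    # Step 2: sample a group of on-policy completions and score each against the rubric.
    completions = [sample(prompt) for _ in range(group_size)]
    rewards = [judge_score(c, rubric) for c in completions]
    # Step 3: convert rubric scores into advantages for the (omitted) policy update.
    return completions, group_relative_advantages(rewards)


# Illustrative rubric scores for one group of four completions.
print(group_relative_advantages([1.0, 0.0, 0.5, 0.5]))
```

One consequence of the group-relative design: if the judge gives every completion in a group the same score, all advantages are zero and that prompt contributes no learning signal, so a rubric that cannot separate good completions from mediocre ones wastes compute as well as risking exploitation.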

Compared to verifiable rewards, LLM judges are more prone to reward hacking. Much of the challenge for rubric-based RL lies in increasing the robustness of our reward signal. We want our judge and rubric to generalize well to new domains and avoid exploitation.