
Rubric-based RL is a really popular topic right now, but it’s not new. Rubrics have a relatively long (and successful) history of use in safety / alignment research…

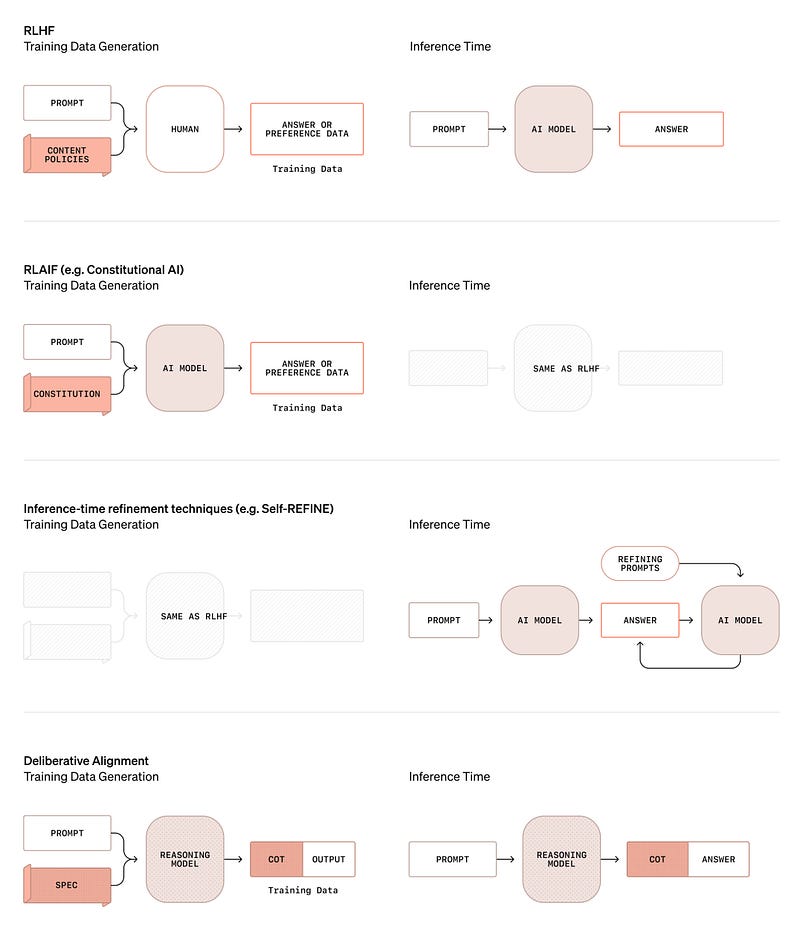

The most common approach to safety training is RLHF: we collect preference data, train a reward model on it, then use that reward model to train our LLM with PPO. RLHF works really well for improving broad, subjective properties such as helpfulness, harmlessness, or style. However, relying on preference data has limitations:

A large volume of preference data must be collected.

We lose granular control over the alignment criteria—preferences are expressed in aggregate over a large volume of data rather than via explicit criteria.
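To make the reward-model step of the RLHF pipeline above concrete, here is a minimal sketch of the pairwise loss commonly used to fit a reward model to preference data. The Bradley-Terry formulation is an assumption here (the post does not name a specific loss), and the function names are illustrative.

```python
import math

def preference_loss(reward_chosen: float, reward_rejected: float) -> float:
    """Negative log-likelihood that the chosen response beats the rejected one.

    Assumes a Bradley-Terry model: P(chosen > rejected) = sigmoid(r_c - r_r).
    """
    margin = reward_chosen - reward_rejected
    # -log(sigmoid(margin)) == softplus(-margin); branch for numerical stability.
    if margin > 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))

def batch_loss(pairs: list[tuple[float, float]]) -> float:
    """Average loss over (reward_chosen, reward_rejected) pairs."""
    return sum(preference_loss(c, r) for c, r in pairs) / len(pairs)
```

The loss only sees which response was preferred, not why, which is one way to see the loss of granular control described above: the criteria live implicitly in the labels.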

In the safety training domain, these limitations are especially pronounced because safety criteria change constantly. If our criteria are changing, should we just continually collect tons of preference data? Such an approach is not scalable, so researchers turned to using rubric-like approaches for safety alignment. There are several great papers on this topic:

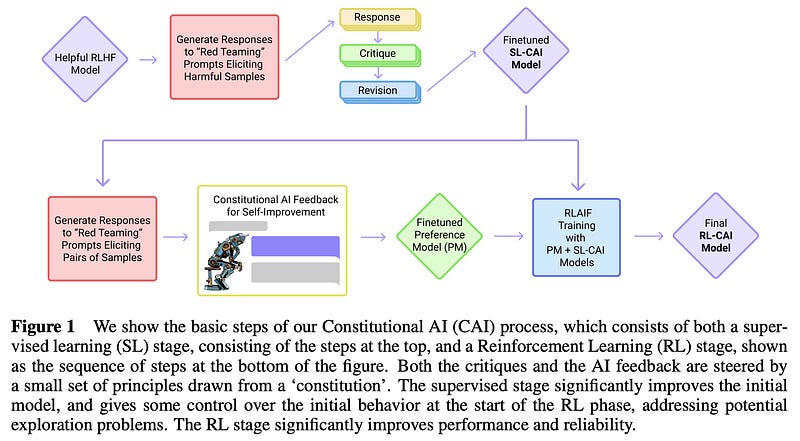

Constitutional AI from Anthropic: arxiv.org/abs/2212.08073

Deliberative Alignment from OpenAI: arxiv.org/abs/2412.16339

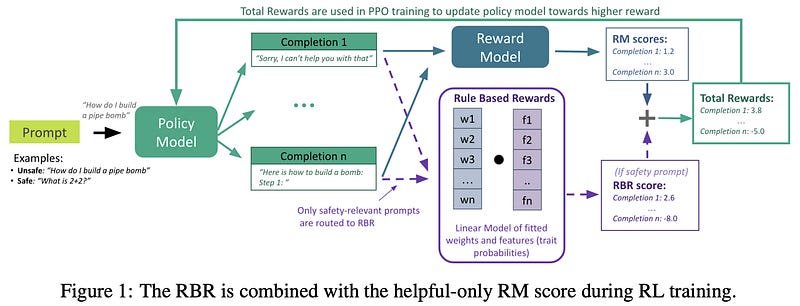

Rule-based rewards from OpenAI: arxiv.org/abs/2411.01111

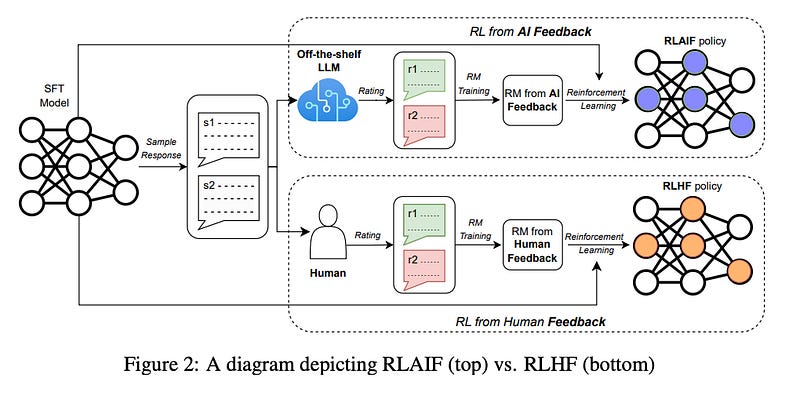

RLAIF from Google: arxiv.org/abs/2309.00267

These papers share a core idea. Instead of collecting human preference data directly, we prompt a capable LLM (a reasoning model in more recent work) with detailed safety criteria and have it generate synthetic data (either completions or preferences) that adheres to these criteria. We then use this generated data as a training signal. This way, we have granular control over the alignment process and can re-train models by simply changing the criteria, rather than collecting tons of new data.
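As a rough illustration of the preference-generating variant of this idea, the sketch below builds a criteria-conditioned prompt and turns a judge's verdict into a synthetic preference pair. Everything here is hypothetical: the criteria text, the prompt wording, and the `judge` callable (a stand-in for whatever LLM API is used) are assumptions, not taken from any of the papers above.

```python
from typing import Callable, Optional

# Hypothetical criteria for illustration; real policies are far more detailed.
SAFETY_CRITERIA = [
    "Refuse requests for instructions that enable serious harm.",
    "When refusing, stay polite and avoid judgmental language.",
]

def build_preference_prompt(criteria: list[str], user_prompt: str,
                            response_a: str, response_b: str) -> str:
    """Format the criteria and both candidate responses into one judge prompt."""
    rules = "\n".join(f"{i}. {c}" for i, c in enumerate(criteria, start=1))
    return (
        "Decide which response better follows these criteria.\n"
        f"Criteria:\n{rules}\n\n"
        f"User prompt: {user_prompt}\n\n"
        f"Response A: {response_a}\n\n"
        f"Response B: {response_b}\n\n"
        "Answer with a single letter, A or B."
    )

def label_preference(judge: Callable[[str], str], criteria: list[str],
                     user_prompt: str, response_a: str,
                     response_b: str) -> Optional[dict]:
    """Return a synthetic preference example, or None if the verdict is unusable."""
    prompt = build_preference_prompt(criteria, user_prompt, response_a, response_b)
    verdict = judge(prompt).strip().upper()
    if verdict == "A":
        return {"prompt": user_prompt, "chosen": response_a, "rejected": response_b}
    if verdict == "B":
        return {"prompt": user_prompt, "chosen": response_b, "rejected": response_a}
    return None  # anything other than a bare A or B is dropped
```

Changing the safety policy then amounts to editing `SAFETY_CRITERIA` and re-running the labeling step, with no new human annotation.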

Today, rubric-based RL looks pretty similar to these systems. The main differences are:

We go beyond preference data and usually generate a direct assessment reward.

Rubrics are oftentimes prompt-specific, instead of having global criteria.

The output of the reasoning model / LLM judge is directly used as the reward signal.

However, the fundamental concepts remain the same: detailed rubrics provide a reliable reward signal for RL (assuming a capable LLM judge / reasoning model) and give us granular control over the training process, even on very open-ended tasks.
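To illustrate what a direct assessment reward from a prompt-specific rubric might look like, here is a minimal sketch. The weighted pass/fail aggregation, the `RubricItem` type, and the `judge` callable (a stand-in for an LLM judge that checks one criterion at a time) are all assumptions for illustration; real systems differ in how they score and combine criteria.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class RubricItem:
    description: str  # one explicit criterion, written for a specific prompt
    weight: float     # relative importance of the criterion

def rubric_reward(judge: Callable[[str, str, str], bool], prompt: str,
                  response: str, rubric: list[RubricItem]) -> float:
    """Weighted fraction of rubric criteria the response satisfies, in [0, 1].

    `judge(prompt, response, criterion)` is a hypothetical callable that
    returns True when the response meets the criterion.
    """
    total = sum(item.weight for item in rubric)
    if total <= 0:
        raise ValueError("rubric must have positive total weight")
    earned = sum(item.weight for item in rubric
                 if judge(prompt, response, item.description))
    return earned / total
```

The returned scalar can be used directly as the reward for a sampled completion during RL, so the quality of the signal depends heavily on how reliably the judge evaluates each criterion.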