
LLM evals are expensive, so it's common to sub-sample eval data to reduce costs and improve efficiency (especially in iterative tuning / ablation experiments). However, using the wrong sampling approach can lead to noisy or incorrect results...

Rank Correlation. The most common way to check whether a sub-sample is good enough is some form of rank correlation. The idea is simple: the subset we select is considered "good" if it preserves the model ranking observed on the full dataset. We can compute the rank correlation by:

- Evaluating all models on the full dataset.

- Computing a score per model.

- Ranking all models by their scores.

- Re-evaluating on a subset.

- Ranking all models by their subset scores.

- Collecting the ranks into two "rank vectors" (one for the full dataset, one for the subset), where entry i of each vector is the rank of model i.

- Computing the correlation between rank vectors.
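The steps above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: it assumes binary correct/incorrect results, uses mean accuracy as the per-model score, and uses Spearman's correlation (Pearson correlation of the ranks) as the rank correlation. The names `results`, `ranks`, and `subset_rank_correlation` are hypothetical.

```python
from statistics import mean

def ranks(scores):
    """Rank scores from best (1) to worst, averaging ranks across ties."""
    order = sorted(range(len(scores)), key=lambda i: -scores[i])
    result = [0.0] * len(scores)
    pos = 0
    while pos < len(order):
        end = pos
        # Extend the window over all models tied with the one at `pos`.
        while end + 1 < len(order) and scores[order[end + 1]] == scores[order[pos]]:
            end += 1
        avg_rank = (pos + end) / 2 + 1
        for j in range(pos, end + 1):
            result[order[j]] = avg_rank
        pos = end + 1
    return result

def pearson(x, y):
    """Pearson correlation; NaN if either input is constant."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return float("nan")
    return cov / (var_x * var_y) ** 0.5

def subset_rank_correlation(results, subset):
    """results[m][i] is 1 if model m answered item i correctly, else 0."""
    full_scores = [mean(row) for row in results]
    subset_scores = [mean(row[i] for i in subset) for row in results]
    # Spearman's rho is the Pearson correlation of the two rank vectors.
    return pearson(ranks(full_scores), ranks(subset_scores))

# Toy data (made up): 4 models (rows) x 6 items (columns).
results = [
    [1, 1, 1, 1, 1, 0],
    [1, 1, 1, 0, 1, 0],
    [1, 0, 1, 0, 0, 1],
    [0, 0, 1, 0, 0, 0],
]
rho_good = subset_rank_correlation(results, [0, 1, 3])  # preserves the ranking
rho_bad = subset_rank_correlation(results, [2, 5])      # scrambles the ranking
```

On this toy data the first subset reproduces the full ranking exactly, while the second (built from an item everyone solves and an item only a weak model solves) produces a negative correlation.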

Issues with Rank Correlation. Although rank correlations are commonly used, this approach can overfit to a particular evaluation suite. An evaluation subset can preserve model rankings while still containing noisy data that does not genuinely capture capability differences. Additionally, rank correlations saturate quickly when the model suite has clearly defined capability classes (e.g., a group of 7B, 32B and 70B models): almost any subset separates the classes correctly, so the correlation is already near its maximum and says little about subset quality.

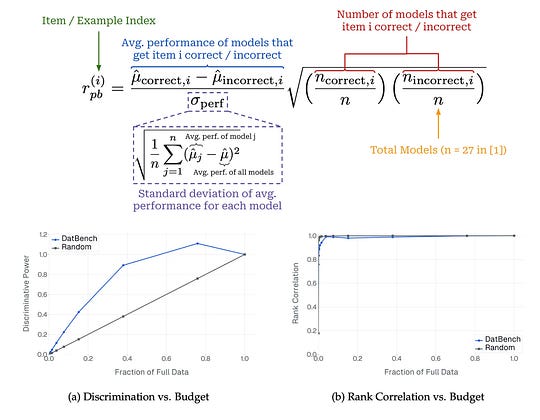

IRT Sampling. As a solution, DatBench proposes an item response theory (IRT)-based metric for selecting data subsets. For each data sample, they compute the point-biserial correlation (r_pb), which measures how strongly a model's correct/incorrect outcome on that single sample correlates with its global performance. The intuition is described below.

“An item with high r_pb is one that strong models consistently answer correctly and weak models consistently miss; conversely, a low or negative r_pb indicates a noisy item.” - DatBench paper
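To make the quote concrete, here is a minimal sketch of computing r_pb per item using the standard point-biserial formula, r_pb = (M1 - M0) / s * sqrt(p * q), where M1 and M0 are the mean global scores of models that got the item right and wrong, s is the population standard deviation of global scores, and p is the fraction of models that got the item right. It assumes the global score is mean accuracy over all items (including the item itself); DatBench's exact definition of global performance may differ. The function names are hypothetical.

```python
import math
from statistics import mean, pstdev

def point_biserial(item_correct, model_scores):
    """r_pb between one item's 0/1 outcomes and the models' global scores."""
    passed = [s for c, s in zip(item_correct, model_scores) if c]
    failed = [s for c, s in zip(item_correct, model_scores) if not c]
    spread = pstdev(model_scores)
    if not passed or not failed or spread == 0:
        # Undefined when every model agrees on the item or all scores are equal.
        return float("nan")
    p = len(passed) / len(item_correct)
    return (mean(passed) - mean(failed)) / spread * (p * (1 - p)) ** 0.5

def item_discrimination(results):
    """results[m][i] is 1 if model m answered item i correctly, else 0."""
    # Assumption: global performance = mean accuracy over all items.
    model_scores = [mean(row) for row in results]
    n_items = len(results[0])
    return [
        point_biserial([row[i] for row in results], model_scores)
        for i in range(n_items)
    ]

# Toy data (made up): 4 models (rows, strongest first) x 6 items (columns).
results = [
    [1, 1, 1, 1, 1, 0],
    [1, 1, 1, 0, 1, 0],
    [1, 0, 1, 0, 0, 1],
    [0, 0, 1, 0, 0, 0],
]
r_pb = item_discrimination(results)
```

In this toy example, item 0 (missed only by the weakest model) gets a positive r_pb, item 5 (solved only by a weak model) gets a negative one, and item 2 (solved by everyone) is undefined because it cannot discriminate at all.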

DatBench shows that selecting data based on this metric better optimizes for the discriminability of the eval subset. In the attached image, we see that r_pb-based selection preserves 90% of total discriminability with only 40% of the data, whereas rank correlation saturates immediately.
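One simple way to turn per-item r_pb values into a subset is to keep the top fraction of items by r_pb. The sketch below does exactly that; the top-fraction rule, the handling of undefined values, and the name `select_by_rpb` are assumptions for illustration, and DatBench's actual selection procedure may differ.

```python
import math

def select_by_rpb(r_pb, keep_fraction):
    """Return sorted indices of the items with the highest r_pb."""
    # Treat undefined r_pb (NaN) as the lowest possible value.
    scored = [(-math.inf if math.isnan(r) else r, i) for i, r in enumerate(r_pb)]
    n_keep = max(1, round(keep_fraction * len(r_pb)))
    top = sorted(scored, reverse=True)[:n_keep]
    return sorted(i for _, i in top)

# Made-up r_pb values for 5 items; keep 40% of them.
kept = select_by_rpb([0.9, -0.1, float("nan"), 0.5, 0.2], keep_fraction=0.4)
```

Here `kept` contains the two most discriminative items (indices 0 and 3), while the noisy and undefined items are dropped.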