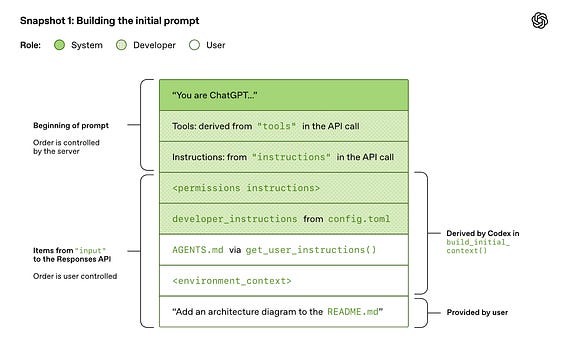

The prompt caching strategy for Codex is really interesting. The prompt for a coding agent is very long because it carries a lot of boilerplate and system info. Before the user's message is even included, the prompt contains all of the following:

- System message.

- Tool specs.

- Model / developer instructions (specified in config files or loaded by default, not a user-provided message).

- Permissions info.

Then, we finally have an agents file, info about the current environment, and the user’s request. This is a super long prompt but all of these components are necessary for the agent. Plus, coding agents tend to have very long sessions, so the prompt only gets longer from here.
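To make the ordering concrete, here is a minimal sketch of assembling the prompt in the order listed above. The `Segment` type, the `build_prompt` function, and the segment names are hypothetical and chosen only for illustration; they are not Codex's actual data structures.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    """One labeled piece of the prompt (hypothetical type for illustration)."""
    name: str
    text: str


def build_prompt(system, tools, developer, permissions,
                 agents_file, environment, user_request):
    """Return the prompt segments in the order described in the post."""
    return [
        Segment("system", system),
        Segment("tools", tools),
        Segment("developer", developer),
        Segment("permissions", permissions),
        Segment("agents_file", agents_file),
        Segment("environment", environment),
        Segment("user", user_request),
    ]


prompt = build_prompt(
    system="You are a coding agent.",
    tools="shell, web_search",
    developer="Prefer small diffs.",
    permissions="read/write inside the workspace",
    agents_file="Run the tests before finishing.",
    environment="cwd=/repo, os=linux",
    user_request="Fix the failing test.",
)
print([segment.name for segment in prompt])
```

The user's request comes last, so everything before it can in principle be reused from one request to the next.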

This makes prompt caching (i.e., reusing computation from a prior inference call that contains an exact prefix match with the current prompt) extremely important.

“Generally, the cost of sampling the model dominates the cost of network traffic, making sampling the primary target of our efficiency efforts. This is why prompt caching is so important, as it enables us to reuse computation from a previous inference call. When we get cache hits, sampling the model is linear rather than quadratic.” - Codex Blog Post
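One rough way to read the linear vs. quadratic claim is to count how many prompt tokens have to be processed over a whole session. The sketch below is a simplified cost model, not a measurement: it assumes every turn appends new tokens to the prompt, that a cache hit lets the model skip the entire previously seen prefix, and that a cache miss reprocesses everything. The function name and the turn sizes are made up for illustration.

```python
def tokens_processed(turn_sizes, cached):
    """Total prompt tokens processed across a session (simplified model).

    turn_sizes: number of new tokens added to the prompt on each turn.
    cached: if True, assume the previously seen prefix is always reused.
    """
    total = 0
    prefix = 0  # tokens already in the prompt from earlier turns
    for new_tokens in turn_sizes:
        if cached:
            total += new_tokens           # only the new suffix is processed
        else:
            total += prefix + new_tokens  # whole prompt is reprocessed
        prefix += new_tokens
    return total


turns = [1000] * 10  # ten turns, 1,000 new tokens each (illustrative numbers)
print(tokens_processed(turns, cached=False))  # 55000
print(tokens_processed(turns, cached=True))   # 10000
```

Under these assumptions the uncached total grows with the square of the number of turns, while the cached total grows linearly.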

Although this prompt is long, the information is organized hierarchically to optimize for prompt caching, which helps Codex deal with the cost of long prompts. The higher up a component sits in the prompt, the less likely it is to change, either within a thread or across separate Codex instances (for components controlled by the system, changing is not even possible). For example, the system message will rarely change and most tools might be shared between tasks (e.g., the agent's shell or web search tool), but developer instructions and agents files are usually project specific.

This prompt is crafted specifically to maximize the cache hit rate by ensuring every prompt shares as long a prefix as possible with earlier prompts. Interestingly, the authors note that even when information from earlier in the prompt changes (e.g., tool specifications), they avoid directly editing prior information in the prompt because this would invalidate the prompt cache. Instead, they insert a new message at the end of the prompt explaining the change to the tool or instructions, so the existing prompt cache stays valid.
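The difference between editing in place and appending can be sketched with a toy prefix comparison. This is an illustration under simplifying assumptions: the prompt is modeled as a list of messages and the cache is treated as matching whole messages, whereas real caches operate on tokens. The `shared_prefix_len` helper and the message strings are hypothetical.

```python
def shared_prefix_len(old, new):
    """Number of leading messages that are identical in both prompts."""
    count = 0
    for old_msg, new_msg in zip(old, new):
        if old_msg != new_msg:
            break
        count += 1
    return count


previous = [
    "system message",
    "tool specs v1",
    "developer instructions",
    "user: fix the failing test",
]

# Option A: edit the tool specs in place. Everything after the edit no
# longer matches the cached prefix.
edited = list(previous)
edited[1] = "tool specs v2"

# Option B: leave history untouched and append a message describing the change.
appended = previous + ["notice: tool specs updated to v2"]

print(shared_prefix_len(previous, edited))    # 1
print(shared_prefix_len(previous, appended))  # 4
```

In this toy model, the in-place edit keeps only the system message reusable, while the appended notice keeps the whole previous prompt reusable.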

This discussion demonstrates the importance of prompt caches for coding agents. These days, it is common to fully exhaust the context window of a coding agent, or even to hit the context limit several times in one session by performing multiple compactions. This leads to incredibly long prompts, where a cache miss would be very disruptive to responsiveness and efficiency. As a result, Codex has to be very careful to ensure prompts are always cached.