
Here is a (brief) taxonomy of the three advanced prompt engineering techniques that are most commonly used or referenced:

Disclaimer: Basic prompting techniques (e.g., zero/few-shot or instruction prompting) are highly effective, but more complex prompts can sometimes help with difficult problems (e.g., math, coding, or multi-step reasoning). Because LLMs naturally struggle with these problems (reasoning capabilities do not improve monotonically with model scale), most research on prompt engineering focuses on improving reasoning and complex problem-solving capabilities. Simple prompts work well for most other problems.

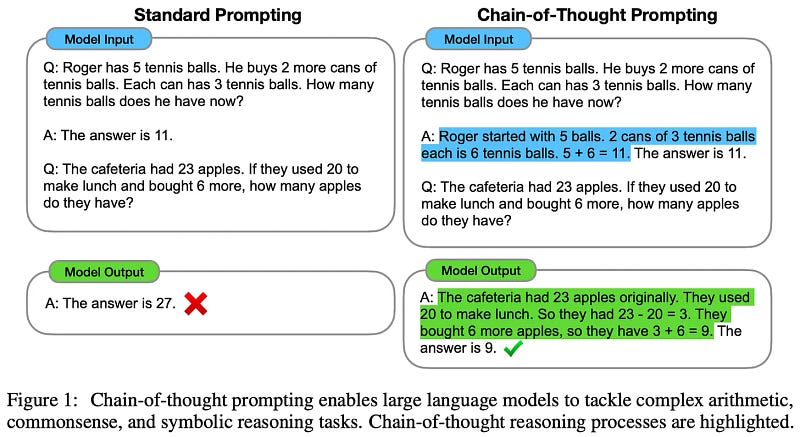

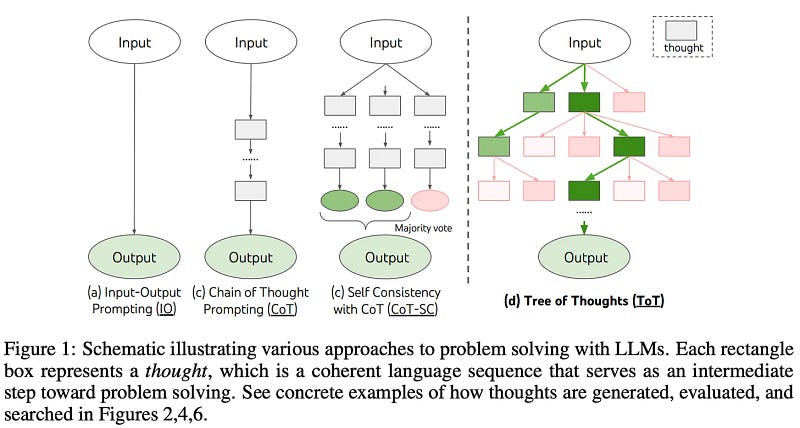

Chain of Thought (CoT) prompting [1] elicits reasoning capabilities in LLMs by inserting a chain of thought (i.e., a series of intermediate reasoning steps) into the exemplars within a model's prompt. Because each exemplar is augmented with a chain of thought, the model learns (via in-context learning) to generate a similar chain of thought before outputting an answer. The results in [1] show that explicitly spelling out the reasoning process behind a solution makes the model more effective at reasoning.
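As a minimal sketch, a few-shot CoT prompt can be assembled by placing a rationale before each exemplar's final answer. The `Exemplar` dataclass and `build_cot_prompt` helper are hypothetical names (not part of any library), the exemplar follows the style of the well-known tennis-ball example from [1], and the actual model call is omitted.

```python
# Minimal sketch of few-shot chain-of-thought prompt construction.
# Exemplar and build_cot_prompt are hypothetical, illustrative names.
from dataclasses import dataclass
from typing import List


@dataclass
class Exemplar:
    question: str
    rationale: str  # the chain of thought: intermediate reasoning steps
    answer: str


def build_cot_prompt(exemplars: List[Exemplar], question: str) -> str:
    """Prepend CoT exemplars so the model imitates their reasoning format."""
    parts = []
    for ex in exemplars:
        # Each exemplar shows the reasoning *before* the final answer.
        parts.append(f"Q: {ex.question}\nA: {ex.rationale} The answer is {ex.answer}.")
    # The target question ends with an open "A:" for the model to complete.
    parts.append(f"Q: {question}\nA:")
    return "\n\n".join(parts)


exemplars = [
    Exemplar(
        question=(
            "Roger has 5 tennis balls. He buys 2 more cans of tennis balls. "
            "Each can has 3 tennis balls. How many tennis balls does he have now?"
        ),
        rationale=(
            "Roger started with 5 balls. 2 cans of 3 tennis balls each is "
            "6 tennis balls. 5 + 6 = 11."
        ),
        answer="11",
    )
]

prompt = build_cot_prompt(exemplars, "A baker has 12 eggs and uses 5. How many are left?")
print(prompt)
```

Sending `prompt` to a model should then yield a rationale followed by "The answer is ...", mirroring the exemplar.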

CoT variants: Due to the effectiveness and popularity of CoT prompting, several extensions have been proposed:

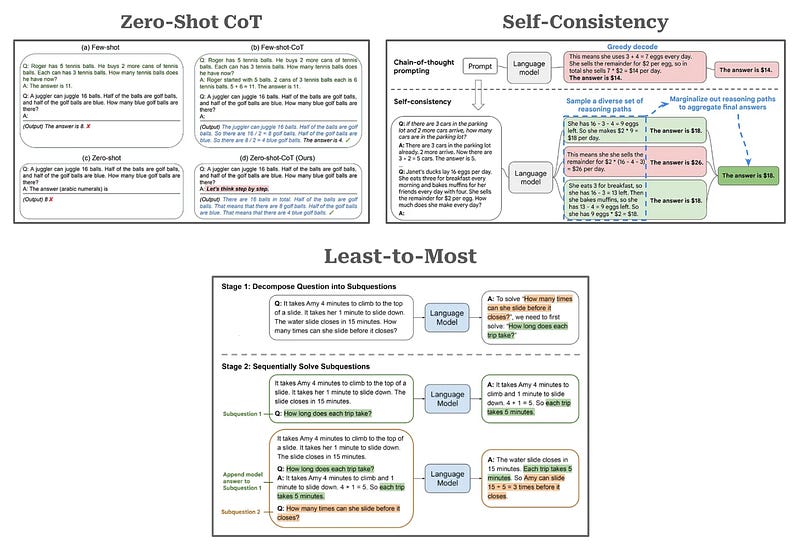

- Zero-Shot CoT [2]: eliminates few-shot exemplars and instead encourages the model to generate a problem-solving rationale by appending the words “Let’s think step by step.” to the end of the prompt.

- Self-Consistency [3]: improves the robustness of the reasoning process by independently generating multiple chains of thought when solving a problem and taking a majority vote of the final answers produced with each chain of thought.

- Least-to-Most [4]: breaks a problem into multiple parts, solves each part individually, and uses the solution of each sub-problem as context for the next sub-problem.
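The three variants above can each be sketched in a few lines of Python. This assumes a hypothetical `llm` callable that maps a prompt string to a completion (swap in any real model client) and assumes completions end with "The answer is X." so answers can be parsed; both are illustrative conventions rather than details taken from [2, 3, 4]. In the Least-to-Most sketch, the list of sub-questions is passed in directly, whereas [4] also obtains that decomposition by prompting the model.

```python
# Minimal sketches of Zero-Shot CoT, Self-Consistency, and Least-to-Most.
# `llm` is a hypothetical callable (prompt -> completion), not a real API.
import itertools
from collections import Counter
from typing import Callable, List

LLM = Callable[[str], str]


def zero_shot_cot(llm: LLM, question: str) -> str:
    # Zero-Shot CoT: no exemplars, only a reasoning trigger phrase.
    return llm(f"Q: {question}\nA: Let's think step by step.")


def extract_answer(completion: str) -> str:
    # Assumed convention: completions end with "The answer is X."
    marker = "The answer is"
    if marker not in completion:
        return ""
    return completion.rsplit(marker, 1)[1].strip().rstrip(".")


def self_consistency(sample: LLM, prompt: str, n: int = 5) -> str:
    # Self-Consistency: sample n independent chains, then majority-vote.
    answers = [extract_answer(sample(prompt)) for _ in range(n)]
    votes = Counter(a for a in answers if a)
    if not votes:
        return ""
    return votes.most_common(1)[0][0]


def least_to_most(llm: LLM, question: str, subquestions: List[str]) -> str:
    # Least-to-Most: solve sub-problems in order, feeding each answer forward.
    context = f"Q: {question}\n"
    answer = ""
    for sub in subquestions:
        prompt = f"{context}Q: {sub}\nA:"
        answer = llm(prompt)
        context = f"{prompt} {answer}\n"  # the solved step becomes context
    return answer


# Deterministic stand-in for a sampled model: cycles through canned chains.
canned = itertools.cycle([
    "12 - 5 = 7. The answer is 7.",
    "12 - 5 = 7. The answer is 7.",
    "12 + 5 = 17. The answer is 17.",
])
print(self_consistency(lambda p: next(canned), "Q: 12 eggs, 5 used?\nA:", n=3))  # 7
```

With a real model, Self-Consistency samples at a nonzero temperature so that the chains differ; the canned stub only keeps the example deterministic.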

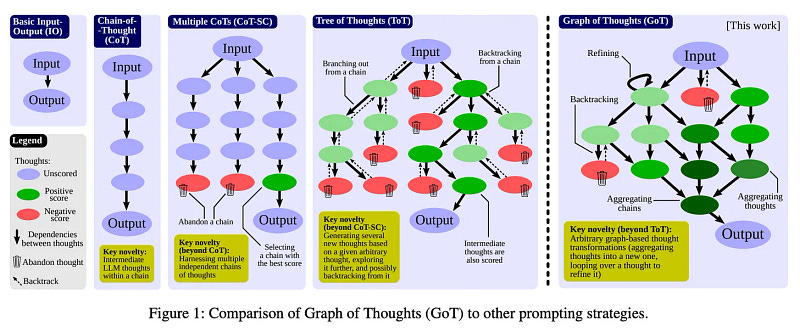

Tree of Thoughts (ToT) prompting [5]: CoT prompting struggles with problems that benefit from planning, strategic lookahead, backtracking, and exploring many possible solutions in parallel. ToT prompting breaks a complex problem into a series of simpler problems (or "thoughts"). The LLM generates many thoughts and continually evaluates its progress toward a final solution via natural language (i.e., via prompting). By leveraging the model's self-evaluation of its progress, we can drive the exploration process with widely used search algorithms (e.g., breadth-first search or depth-first search), allowing for lookahead and backtracking when solving a problem.
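The search loop can be sketched as a breadth-first search that keeps only the `beam_width` highest-rated states at each depth, in the spirit of ToT's BFS variant. Here `propose` and `evaluate` are hypothetical, deterministic stand-ins for the LLM prompts that would generate and rate thoughts, and the toy task (reach 22 from 1 using only `+3` and `*2`) is an assumption chosen purely to make the example runnable.

```python
# Toy sketch of Tree-of-Thoughts-style BFS with a beam (hypothetical helpers).
from typing import Callable, List, Optional, Tuple

State = Tuple[int, ...]  # the chain of intermediate values ("thoughts") so far


def tot_bfs(
    root: State,
    propose: Callable[[State], List[State]],
    evaluate: Callable[[State], float],
    is_solution: Callable[[State], bool],
    beam_width: int = 3,
    max_depth: int = 5,
) -> Optional[State]:
    frontier = [root]
    for _ in range(max_depth):
        # Expand every frontier state by one additional thought.
        candidates = [child for state in frontier for child in propose(state)]
        for candidate in candidates:
            if is_solution(candidate):
                return candidate
        if not candidates:
            return None
        # Self-evaluation prunes the tree: keep only the best-rated states.
        frontier = sorted(candidates, key=evaluate, reverse=True)[:beam_width]
    return None


TARGET = 22


def propose(state: State) -> List[State]:
    # Stand-in for an LLM proposing next steps: apply "+3" or "*2".
    value = state[-1]
    return [state + (value + 3,), state + (value * 2,)]


def evaluate(state: State) -> float:
    # Stand-in for an LLM rating progress: closer to TARGET is better.
    return -abs(TARGET - state[-1])


path = tot_bfs((1,), propose, evaluate, lambda s: s[-1] == TARGET)
print(path)  # (1, 4, 8, 11, 22)
```

A depth-first variant would instead follow the best-rated child first and backtrack whenever `evaluate` judges a state to be a dead end.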

Graph of Thoughts (GoT) prompting [6, 7]: Later work generalized ToT prompting to graph-based reasoning strategies. These techniques resemble ToT prompting, but they do not assume that the path of thoughts used to generate a solution is linear: thoughts can be reused, and we can even recurse through a sequence of several thoughts when deriving a solution. Multiple graph-based prompting strategies have been proposed. However, these techniques, like ToT prompting, have been criticized as impractical. Solving a single reasoning problem with GoT prompting can require a massive number of inference steps from the LLM!
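To illustrate what a graph adds over a tree, here is a toy sketch built around sorting: a list is split into chunks, each chunk is sorted, and the sorted chunks are merged. The merge is an aggregation node with two parents, which a tree cannot express. The `GraphOfThoughts` class and its operations are hypothetical, and each operation is a deterministic stand-in for what would be one LLM call; the `llm_calls` counter shows how inference cost accumulates.

```python
# Toy sketch of a Graph-of-Thoughts-style workflow (hypothetical class/ops).
import heapq
from dataclasses import dataclass, field
from typing import List


@dataclass
class Thought:
    content: List[int]
    parents: List["Thought"] = field(default_factory=list)


class GraphOfThoughts:
    def __init__(self) -> None:
        self.nodes: List[Thought] = []
        self.llm_calls = 0  # each operation would cost one inference call

    def _add(self, content: List[int], parents: List[Thought]) -> Thought:
        self.llm_calls += 1
        node = Thought(content, parents)
        self.nodes.append(node)
        return node

    def generate(self, parent: Thought, chunk: slice) -> Thought:
        # Derive a sub-problem (one chunk of the list) from a parent thought.
        return self._add(parent.content[chunk], [parent])

    def refine(self, node: Thought) -> Thought:
        # Improve a single thought; the stand-in simply sorts its chunk.
        return self._add(sorted(node.content), [node])

    def aggregate(self, a: Thought, b: Thought) -> Thought:
        # Merge two thoughts into one node with two parents (not a tree).
        return self._add(list(heapq.merge(a.content, b.content)), [a, b])


got = GraphOfThoughts()
root = Thought([5, 1, 4, 8, 2, 7, 3, 6])  # the input problem, not an LLM call
left = got.refine(got.generate(root, slice(0, 4)))
right = got.refine(got.generate(root, slice(4, 8)))
merged = got.aggregate(left, right)
print(merged.content, got.llm_calls)  # [1, 2, 3, 4, 5, 6, 7, 8] 5
```

Even this eight-element example needs five calls; with more chunks, several attempts per node, and extra calls to score each thought, the count grows quickly, which is the practicality concern raised above.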

Bibliography: