Most of us probably understand next token prediction, but how exactly does an LLM generate its output?

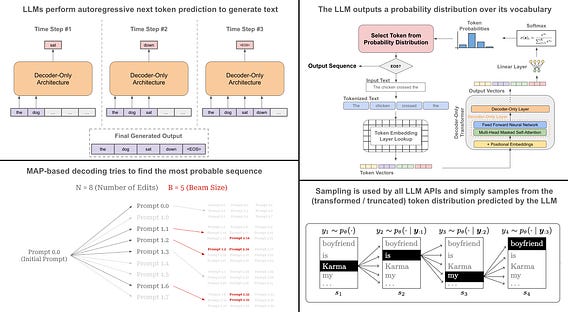

Next token prediction. LLMs are next token predictors. Given a sequence of input tokens, they predict the next token in the sequence. By following this process autoregressively (i.e., adding each output to the input sequence and repeating), we can generate a textual sequence.
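The autoregressive loop described above can be sketched in a few lines. This is a minimal illustration, not a real model: `TOY_BIGRAMS`, `toy_predict_next`, and `generate` are hypothetical names, and the "model" is just a lookup table that maps the last token to a fixed next token.

```python
from typing import Callable, Dict, List

# Hypothetical stand-in for an LLM: a lookup from the last token to the next one.
TOY_BIGRAMS: Dict[str, str] = {"<s>": "the", "the": "cat", "cat": "sat", "sat": "</s>"}


def toy_predict_next(tokens: List[str]) -> str:
    """Return the next token for the sequence so far (toy lookup, not a real model)."""
    return TOY_BIGRAMS.get(tokens[-1], "</s>")


def generate(
    prompt: List[str],
    predict_next: Callable[[List[str]], str],
    max_new_tokens: int = 10,
    eos: str = "</s>",
) -> List[str]:
    """Autoregressive generation: append each prediction to the input and repeat."""
    tokens = list(prompt)
    for _ in range(max_new_tokens):
        next_token = predict_next(tokens)
        tokens.append(next_token)  # the output becomes part of the next input
        if next_token == eos:
            break
    return tokens


output = generate(["<s>"], toy_predict_next)
```

A real LLM replaces the lookup with a forward pass over the whole sequence, but the outer loop has the same shape.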

What do LLMs predict? Although LLMs are next token predictors, they do not directly predict tokens. Rather, they predict a probability distribution over the space of possible tokens. Then, we can derive the next token based on the probabilities in this distribution.
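To make this concrete: in practice the model emits one raw score (a logit) per vocabulary token, and a softmax turns those scores into a probability distribution. The sketch below assumes a three-token vocabulary with made-up logits; `softmax` and `toy_logits` are illustrative names, not part of any library.

```python
import math
from typing import Dict


def softmax(logits: Dict[str, float]) -> Dict[str, float]:
    """Convert raw per-token scores into probabilities that sum to 1."""
    max_logit = max(logits.values())  # subtract the max for numerical stability
    exps = {token: math.exp(score - max_logit) for token, score in logits.items()}
    total = sum(exps.values())
    return {token: value / total for token, value in exps.items()}


# Made-up scores for a tiny, hypothetical vocabulary.
toy_logits = {"cat": 2.0, "dog": 1.0, "car": 0.0}
probs = softmax(toy_logits)

# The most probable token under this distribution.
most_likely = max(probs, key=probs.get)
```

Every decoding strategy starts from a distribution like `probs`; they differ only in how they pick a token from it.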

Decoding strategies. There are two primary ways that we can pick tokens from the distributions predicted by the LLM.

1. Maximum a posteriori (MAP): find the most probable textual sequence.

2. Sampling: simply sample tokens from the LLM’s distribution.

MAP includes strategies like greedy selection (i.e., repeatedly selecting the most probable next token) and beam search, which is similar to greedy selection but maintains several candidate sequences in parallel. Both are approximations, since searching for the single most probable sequence exactly is intractable. MAP-based decoding strategies were common for narrow tasks like translation, but they have largely fallen out of favor for open-ended generation because they tend to produce repetitive, short, and atypical output sequences.
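The difference between greedy selection and beam search is easiest to see on a toy example. The sketch below assumes a hypothetical two-step "model" (`TOY_MODEL`) whose probabilities are made up so that the greedy choice at step one leads to a less probable full sequence. The function names are illustrative.

```python
import math
from typing import Dict, List, Tuple

# Hypothetical toy model: next-token probabilities keyed by the prefix so far.
TOY_MODEL: Dict[Tuple[str, ...], Dict[str, float]] = {
    (): {"A": 0.6, "B": 0.4},
    ("A",): {"x": 0.55, "y": 0.45},
    ("B",): {"x": 0.9, "y": 0.1},
}


def greedy_decode(steps: int) -> Tuple[List[str], float]:
    """Pick the single most probable token at every step."""
    seq: Tuple[str, ...] = ()
    log_prob = 0.0
    for _ in range(steps):
        dist = TOY_MODEL[seq]
        token = max(dist, key=dist.get)
        log_prob += math.log(dist[token])
        seq = seq + (token,)
    return list(seq), math.exp(log_prob)


def beam_search(steps: int, beam_width: int) -> Tuple[List[str], float]:
    """Keep the `beam_width` most probable partial sequences at every step."""
    beams: List[Tuple[Tuple[str, ...], float]] = [((), 0.0)]
    for _ in range(steps):
        candidates = []
        for seq, log_prob in beams:
            for token, prob in TOY_MODEL[seq].items():
                candidates.append((seq + (token,), log_prob + math.log(prob)))
        # Rank all extensions by total log-probability and keep the best few.
        candidates.sort(key=lambda cand: cand[1], reverse=True)
        beams = candidates[:beam_width]
    best_seq, best_log_prob = beams[0]
    return list(best_seq), math.exp(best_log_prob)


greedy_seq, greedy_prob = greedy_decode(steps=2)
beam_seq, beam_prob = beam_search(steps=2, beam_width=2)
```

Here greedy selection commits to "A" and ends with a sequence of probability 0.33, while a beam of width 2 keeps "B" alive and finds "B x" at 0.36. With `beam_width=1`, beam search reduces to greedy selection.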

Sampling strategies are used by nearly all modern LLMs. These strategies simply sample tokens from the token distribution produced by the LLM. Usually, this distribution is first transformed (e.g., via the temperature setting, which sharpens or flattens it) or truncated (e.g., via top-K, which keeps only the K most probable tokens, or top-P, which keeps the smallest set of tokens whose cumulative probability reaches P) to avoid sampling low probability tokens. These sampling parameters are exposed by most LLM APIs. Compared to MAP, sampling yields more natural and higher-quality output in open-ended tasks.
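As a rough sketch of how these knobs work, the snippet below applies temperature, top-K, and top-P to a made-up three-token distribution using only the standard library. All function names and the toy logits are assumptions for illustration. Real inference stacks operate on tensors over the full vocabulary and may differ in details such as tie handling.

```python
import math
import random
from typing import Dict


def renormalize(probs: Dict[str, float]) -> Dict[str, float]:
    """Rescale the kept probabilities so they sum to 1 again."""
    total = sum(probs.values())
    return {token: prob / total for token, prob in probs.items()}


def softmax_with_temperature(logits: Dict[str, float], temperature: float) -> Dict[str, float]:
    """Divide logits by the temperature before softmax; values below 1 sharpen the distribution."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = {token: score / temperature for token, score in logits.items()}
    max_score = max(scaled.values())
    return renormalize({token: math.exp(s - max_score) for token, s in scaled.items()})


def top_k_filter(probs: Dict[str, float], k: int) -> Dict[str, float]:
    """Keep only the k most probable tokens."""
    ranked = sorted(probs.items(), key=lambda kv: kv[1], reverse=True)
    return renormalize(dict(ranked[:k]))


def top_p_filter(probs: Dict[str, float], p: float) -> Dict[str, float]:
    """Keep the smallest set of top tokens whose cumulative probability reaches p."""
    ranked = sorted(probs.items(), key=lambda kv: kv[1], reverse=True)
    kept: Dict[str, float] = {}
    cumulative = 0.0
    for token, prob in ranked:
        kept[token] = prob
        cumulative += prob
        if cumulative >= p:
            break
    return renormalize(kept)


def sample(probs: Dict[str, float], rng: random.Random) -> str:
    """Draw one token according to its probability."""
    tokens = list(probs)
    return rng.choices(tokens, weights=[probs[t] for t in tokens])[0]


toy_logits = {"cat": 2.0, "dog": 1.0, "car": 0.0}  # made-up scores
probs = softmax_with_temperature(toy_logits, temperature=0.7)
truncated = top_p_filter(top_k_filter(probs, k=2), p=0.9)
next_token = sample(truncated, random.Random(0))
```

Lowering the temperature or tightening `k` and `p` pushes sampling toward greedy selection, while raising them increases diversity at the cost of more low probability tokens.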