
DeepSeek’s R1 learns to reason via pure RL with no / minimal SFT. Here’s how to understand the role of SFT and why it is used (or not) for reasoning models…

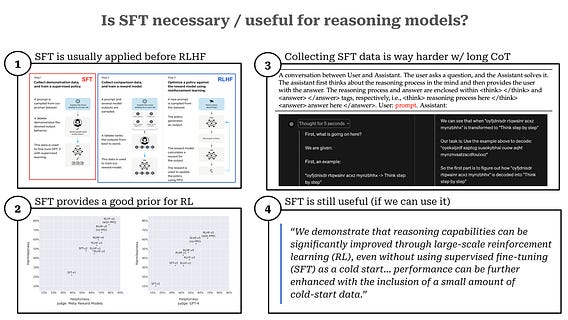

TL;DR: R1 shows that we can learn to reason via pure RL. But the compute costs are high: discovering a good solution requires a lot of exploration during the RL phase. SFT is still useful for reasoning models, but collecting data is harder due to the long chain of thought (CoT) that these models produce. R1 synthetically generates a small amount of “cold start” SFT data, which fixes deficiencies observed in the pure RL model and accelerates RL convergence.

Model alignment. The traditional post-training approach for LLMs has two parts:

1. Supervised finetuning (SFT): train the model on examples of “good” completions using a supervised next token prediction objective.

2. Reinforcement learning from human feedback (RLHF): train the LLM based on human preference pairs to facilitate alignment.

Each of these components plays an important role. SFT teaches the model correct style / formatting (e.g., chat templates, instruction-following, etc.), while RLHF teaches the model to produce outputs that humans deem preferable.
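To make the SFT objective concrete, here is a minimal sketch of the supervised next-token prediction loss in plain Python. The per-token log-probabilities are made-up numbers standing in for model outputs, and averaging the loss over completion tokens only (ignoring the prompt) is a common convention that is assumed here rather than taken from the post.

```python
import math

def sft_loss(token_log_probs, is_completion):
    """Average negative log-likelihood over completion tokens only.

    token_log_probs: log-probability the model assigned to each target token.
    is_completion:   True for tokens in the "good" completion, False for
                     prompt tokens (assumption: the prompt is not trained on).
    """
    picked = [-lp for lp, keep in zip(token_log_probs, is_completion) if keep]
    if not picked:
        raise ValueError("no completion tokens to train on")
    return sum(picked) / len(picked)

# Hypothetical values: two prompt tokens followed by two completion tokens.
log_probs = [math.log(0.9), math.log(0.5), math.log(0.25), math.log(0.8)]
mask = [False, False, True, True]
loss = sft_loss(log_probs, mask)
```

Minimizing this quantity pushes the model to assign higher probability to each token of the reference completion, which is how SFT imprints a target style or format.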

Why is SFT important? For a standard LLM, SFT provides a high-quality prior (or starting point) for RLHF. If we applied RLHF directly to the base model, the learning process would be much less efficient. Put simply, the LLM would have to do a lot more “exploring” to find a good policy. SFT gets the model very close to the final solution that we want, and RLHF then applies the finishing touches in terms of model quality.

Where does SFT data come from? Usually, data for SFT is either synthetically generated or manually created by humans. Collecting human-written data is expensive (both in terms of time and money): someone has to manually write a good response from scratch for the LLM!

Reasoning models like R1 have a very particular style of output. To answer a prompt, they begin by outputting a long chain of thought (CoT), usually wrapped in special tokens (e.g., the "<think>" token). Then, the model generates an answer separately, which is again wrapped in special tokens (e.g., the "<answer>" token).
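As a rough illustration of this output style, the sketch below splits a completion into its reasoning and its answer. It assumes the tokens come in matching open / close pairs ("<think>…</think>" followed by "<answer>…</answer>"); the closing tags, the `split_reasoning_output` helper, and the sample string are all hypothetical and may not match any model's exact template.

```python
import re

# Assumed layout: one reasoning block followed by one answer block.
PATTERN = re.compile(
    r"\s*<think>(.*?)</think>\s*<answer>(.*?)</answer>\s*",
    re.DOTALL,  # the chain of thought usually spans many lines
)

def split_reasoning_output(text):
    """Return (reasoning, answer), or None if the format is not followed."""
    match = PATTERN.fullmatch(text)
    if match is None:
        return None
    return match.group(1).strip(), match.group(2).strip()

sample = "<think>2 + 2 is 4, and 4 * 3 is 12.</think>\n<answer>12</answer>"
parsed = split_reasoning_output(sample)
malformed = split_reasoning_output("The answer is 12.")
```

A check like this is also a cheap way to tell whether a completion followed the expected format at all, since anything that does not match comes back as `None`.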

SFT + Reasoning. Given this style of output, we might ask ourselves: How would we collect SFT data for a model like this? We definitely can’t just ask humans to manually write out long CoT. This would be far too time-consuming and expensive! Our only option is to generate this data synthetically, but:

- It may still be difficult to get the model to generate an output with this particular style.

- Correctly verifying such long outputs is still quite difficult.

- We don't want to hinder the model's ability to explore during the RL phase too much.

R1-Zero - No SFT. Given the additional complexity of collecting SFT data for reasoning models, authors at DeepSeek first try to avoid SFT altogether! From these experiments, we see that reasoning abilities naturally emerge from pure RL, which is a remarkable finding.

However, the resulting R1-Zero model has some downsides compared to standard LLMs that undergo both SFT and RL-based finetuning. For example, the model’s readability is poor and it tends to mix languages incorrectly (e.g., using English for reasoning and responses even if the query is non-English).

“Unlike DeepSeek-R1-Zero, to prevent the early unstable cold start phase of RL training from the base model, for DeepSeek-R1 we construct and collect a small amount of long CoT data to fine-tune the model as the initial RL actor.” - from R1 paper

R1 - Cold Start w/ SFT. For the final R1 model, the authors create a small dataset (a few thousand examples) for SFT prior to RL. This data is synthetically generated (e.g., by using rejection sampling on an RL checkpoint). When we run SFT on this data prior to RL (i.e., a “cold start”), we provide a better prior to RL, which eliminates instability during the initial phases of RL training. As a result, RL converges faster and the final model avoids the side effects observed in R1-Zero.
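The idea of generating SFT data by rejection sampling can be sketched roughly as follows: sample several completions per prompt, keep one that is both well-formatted and correct, and discard the rest. This is only an illustration under stated assumptions, not DeepSeek's pipeline. The `toy_checkpoint` function is a hypothetical stand-in for sampling from a real model, and the exact-match check against a reference answer is an assumed verifier.

```python
import random
import re

# Assumed output layout: reasoning block followed by answer block.
FORMAT = re.compile(r"<think>(.+?)</think>\s*<answer>(.+?)</answer>", re.DOTALL)

def toy_checkpoint(prompt, rng):
    """Hypothetical stand-in for a model: noisy answers to 'a+b' prompts."""
    a, b = map(int, prompt.split("+"))
    guess = a + b + rng.choice([0, 0, 1, -1])  # sometimes off by one
    if rng.random() < 0.2:
        return f"The answer is {guess}"  # sometimes ignores the format
    return f"<think>{a} plus {b} gives {guess}.</think><answer>{guess}</answer>"

def rejection_sample(prompts, references, sample_fn, num_samples=8, seed=0):
    """Keep at most one well-formatted, correct completion per prompt."""
    rng = random.Random(seed)
    kept = []
    for prompt, reference in zip(prompts, references):
        for _ in range(num_samples):
            completion = sample_fn(prompt, rng)
            match = FORMAT.fullmatch(completion.strip())
            # Reject samples with a broken format or a wrong final answer.
            if match and match.group(2).strip() == reference:
                kept.append({"prompt": prompt, "completion": completion})
                break
    return kept

sft_data = rejection_sample(["2+3", "10+7"], ["5", "17"], toy_checkpoint)
```

The surviving prompt / completion pairs would then serve as the small SFT dataset. Filtering on the final answer alone says nothing about the quality of the reasoning itself, which is one reason verifying long outputs remains difficult.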