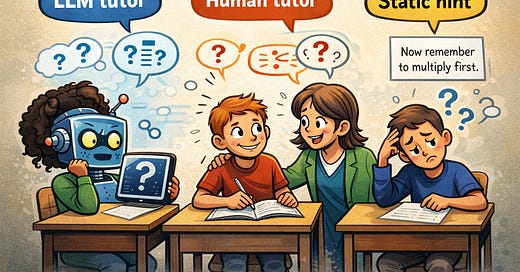

Latest from us - the most promising LLM tutor so far!

I've written a summary of a new paper by Google and Eedi. Their LLM tutor dramatically reduced (although didn't eliminate) hallucinations.

In total there were 5 errors out of 3,617 messages sent by the LLM (roughly 0.14%). Would a human teacher make fewer errors?

Jan 11 at 10:07 PM
