
From Static Visualization to Interaction: My Contribution Through Hand Gestures

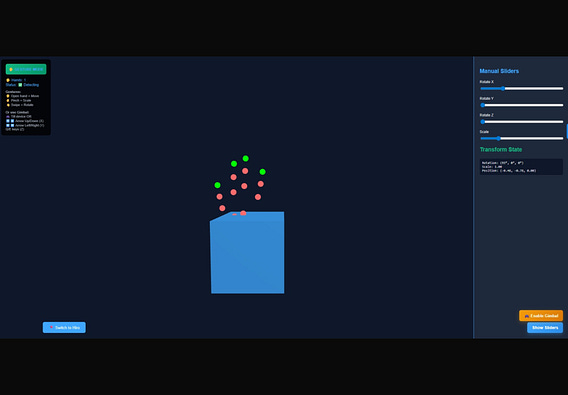

When we started building the Graphics Pipeline Visualiser, the goal was simple: make a complex concept intuitive. The graphics pipeline is already a layered transformation, from 3D data to final pixels on screen, but traditional learning methods reduce it to static diagrams and sliders.

That approach works… until you try to feel the transformations.

This is where my contribution comes in.

🚀 The Problem: Interaction Was Still 2D

Even though the platform visualized 3D transformations, the interaction layer was still limited:

* Sliders controlled scale, rotation, and translation

* Buttons triggered pipeline stages

* Learning remained indirect

But the graphics pipeline is inherently dynamic—every transformation is continuous, spatial, and intuitive in the real world.

So the question became: Why are we learning 3D concepts using 2D inputs?

✋ My Contribution: Bringing Hand Gestures into the Pipeline

I worked on integrating real-time hand gesture controls using hand tracking (via MediaPipe), transforming the visualizer into an interactive system where users directly manipulate the scene.

Instead of adjusting values artificially, users interact the way humans naturally do—with their hands.

🧠 Core Idea: Natural Mapping to Graphics Transformations

Each gesture was carefully designed to map directly to fundamental pipeline transformations:

1. Pinch → Scaling

* Distance between thumb and index finger controls object scale

* Closer pinch → larger object

* Wider gap → smaller object

👉 This mimics how scaling works in transformation matrices, but in an intuitive way.
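As a rough illustration, the pinch mapping could be sketched like this in Python. It assumes the 21-point landmark list that MediaPipe Hands produces (thumb tip at index 4, index fingertip at index 8, with x and y normalized to the image). The function names, distance range, and scale range are hypothetical values chosen for the example, not the project's actual settings.

```python
import math

THUMB_TIP = 4   # MediaPipe Hands landmark index for the thumb tip
INDEX_TIP = 8   # MediaPipe Hands landmark index for the index fingertip


def pinch_distance(landmarks):
    """Distance between thumb tip and index tip in normalized image coordinates.

    `landmarks` is a sequence of (x, y, z) tuples, one per hand landmark.
    """
    tx, ty = landmarks[THUMB_TIP][:2]
    ix, iy = landmarks[INDEX_TIP][:2]
    return math.hypot(tx - ix, ty - iy)


def pinch_to_scale(distance, d_min=0.02, d_max=0.30, s_min=0.5, s_max=2.0):
    """Map a pinch distance to a uniform scale factor.

    Follows the mapping described above: a closer pinch gives a larger
    object, a wider gap gives a smaller one. All four range parameters
    are illustrative assumptions.
    """
    d = min(max(distance, d_min), d_max)   # clamp to the usable range
    t = (d - d_min) / (d_max - d_min)      # 0 at a closed pinch, 1 at the widest gap
    return s_max - t * (s_max - s_min)     # linear interpolation, inverted
```

The returned factor is what would sit on the diagonal of a uniform scaling matrix. Clamping keeps a noisy or out-of-range distance from producing a zero or negative scale.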

2. Grab → Translation

* Open palm maps to object position

* Moving your hand moves the object in 3D space

👉 This directly reflects how objects are repositioned in world space before entering later pipeline stages.
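One way to picture the position mapping is the sketch below. It assumes landmarks arrive as normalized image coordinates (origin at the top-left, y growing downward), estimates a palm centre from the wrist and finger-base landmarks, and stretches that point onto a rectangle in world space. The function names, the world extents, and the mirroring flag are hypothetical. Depth is left out because the z value on MediaPipe's normalized landmarks is measured relative to the wrist rather than the camera.

```python
# Wrist plus the four finger-base (MCP) landmarks in MediaPipe Hands.
PALM_INDICES = (0, 5, 9, 13, 17)


def palm_center(landmarks, indices=PALM_INDICES):
    """Average the x/y of a few stable landmarks to estimate the palm centre."""
    xs = [landmarks[i][0] for i in indices]
    ys = [landmarks[i][1] for i in indices]
    return sum(xs) / len(xs), sum(ys) / len(ys)


def hand_to_world(x, y, half_width=2.0, half_height=1.5, mirror_x=True):
    """Map a normalized image point (0..1) to world-space x/y.

    half_width / half_height are assumed extents of the interaction area
    in world units. mirror_x flips the horizontal axis, which is usually
    wanted when the camera frame has not already been mirrored.
    """
    nx = (x - 0.5) * 2.0    # -1 .. 1, left to right in the image
    ny = (0.5 - y) * 2.0    # -1 .. 1, bottom to top (image y grows downward)
    if mirror_x:
        nx = -nx
    return nx * half_width, ny * half_height
```

Averaging several landmarks instead of tracking a single point tends to give a steadier anchor for translation, since individual fingertips move a lot even when the hand as a whole is still.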

3. Swipe → Rotation

* Horizontal swipe → rotation along Y-axis

* Vertical swipe → rotation along X-axis

* Speed of motion controls rotation intensity

👉 This aligns with how rotation matrices transform vertex coordinates.
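The swipe mapping can be sketched as a conversion from hand velocity to per-frame rotation increments. This is only an illustration under assumed names and constants: `gain` (radians of rotation per unit of normalized hand travel) and `dead_zone` (the minimum speed that counts as a swipe) are hypothetical tuning values.

```python
import math


def swipe_to_rotation(prev, curr, dt, gain=math.pi, dead_zone=0.05):
    """Turn hand motion between two frames into rotation increments.

    prev, curr: (x, y) hand positions in normalized image coordinates.
    dt: time between the two frames, in seconds.
    Returns (d_yaw, d_pitch) in radians: rotation about the Y axis from
    horizontal motion and about the X axis from vertical motion.
    """
    if dt <= 0:
        return 0.0, 0.0
    vx = (curr[0] - prev[0]) / dt   # horizontal speed, normalized units per second
    vy = (curr[1] - prev[1]) / dt   # vertical speed
    # Ignore slow drift so a resting hand does not rotate the object.
    if abs(vx) < dead_zone:
        vx = 0.0
    if abs(vy) < dead_zone:
        vy = 0.0
    # Angular velocity is proportional to hand speed; integrate over dt.
    return gain * vx * dt, gain * vy * dt
```

Each frame, the two increments would be added to the object's accumulated yaw and pitch. The sign of each axis depends on camera mirroring and the scene's handedness, so it may need flipping in practice.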

🔍 The Technical Challenge

The biggest challenge wasn’t detecting gestures—it was mapping camera coordinates to 3D space meaningfully.

Hand tracking gives us (x, y, z) values from the camera, but:

* These are camera-relative

* They don’t directly correspond to world coordinates

* Noise and jitter affect stability

To solve this, I:

* Implemented coordinate normalization

* Applied smoothing over multiple frames

* Designed gesture thresholds for stability

* Translated hand landmarks into transformation parameters

This ensured that interactions felt fluid, responsive, and predictable.
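To make the smoothing and threshold ideas concrete, here is a small sketch. It uses an exponential moving average as one common way to smooth over multiple frames, and a two-threshold (hysteresis) check so a gesture does not flicker on and off near its boundary. Both are stand-ins picked for illustration: the class names, `alpha`, and the threshold values are assumptions, and the project may use a different filter.

```python
class EmaFilter:
    """Exponential moving average over tuples of coordinates."""

    def __init__(self, alpha=0.3):
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha    # higher = more responsive, lower = smoother
        self.value = None

    def update(self, sample):
        """Blend a new sample into the running estimate and return it."""
        if self.value is None:
            self.value = tuple(sample)   # first frame: nothing to blend with
        else:
            a = self.alpha
            self.value = tuple(a * s + (1.0 - a) * v
                               for s, v in zip(sample, self.value))
        return self.value


class PinchDetector:
    """Hysteresis threshold: engage below `on`, release only above `off`."""

    def __init__(self, on=0.05, off=0.08):
        if off <= on:
            raise ValueError("off must be greater than on")
        self.on = on
        self.off = off
        self.active = False

    def update(self, distance):
        """Feed the current pinch distance; returns whether the pinch is held."""
        if self.active:
            if distance > self.off:
                self.active = False
        elif distance < self.on:
            self.active = True
        return self.active
```

The gap between `on` and `off` is what absorbs jitter: a distance hovering around a single cutoff would otherwise toggle the gesture every few frames.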

⚙️ How This Fits Into the Graphics Pipeline

The integration enhances the Application Stage of the pipeline, the stage responsible for user interaction and scene updates.

Instead of:

User → UI controls → Scene changes

We now have:

User → Hand gestures → Real-time transformations → Scene updates
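The "real-time transformations" step in that flow amounts to rebuilding a model matrix from the gesture-driven parameters each frame. The sketch below shows one conventional way to do that in plain Python, using 4x4 matrices and column vectors, and composing translation, rotation, and scale as M = T · Ry · Rx · S. The function names and the composition order are assumptions for illustration. A real renderer would normally use its own math library.

```python
import math


def matmul(a, b):
    """Multiply two 4x4 matrices stored as nested lists (row-major)."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]


def scale(s):
    return [[s, 0, 0, 0], [0, s, 0, 0], [0, 0, s, 0], [0, 0, 0, 1]]


def rotate_x(angle):
    c, s = math.cos(angle), math.sin(angle)
    return [[1, 0, 0, 0], [0, c, -s, 0], [0, s, c, 0], [0, 0, 0, 1]]


def rotate_y(angle):
    c, s = math.cos(angle), math.sin(angle)
    return [[c, 0, s, 0], [0, 1, 0, 0], [-s, 0, c, 0], [0, 0, 0, 1]]


def translate(tx, ty, tz):
    return [[1, 0, 0, tx], [0, 1, 0, ty], [0, 0, 1, tz], [0, 0, 0, 1]]


def model_matrix(s, pitch, yaw, position):
    """Compose M = T * Ry * Rx * S: scale first, then rotate, then translate."""
    m = matmul(rotate_x(pitch), scale(s))
    m = matmul(rotate_y(yaw), m)
    return matmul(translate(*position), m)


def transform_point(m, p):
    """Apply a 4x4 matrix to a 3D point (homogeneous w = 1)."""
    v = (p[0], p[1], p[2], 1.0)
    return tuple(sum(m[i][k] * v[k] for k in range(4)) for i in range(3))
```

For example, `transform_point(model_matrix(2.0, 0.0, math.pi / 2, (1, 0, 0)), (1, 0, 0))` scales the point to (2, 0, 0), rotates it a quarter turn about Y to roughly (0, 0, -2), and then shifts it to approximately (1, 0, -2).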

Why This Matters

The graphics pipeline is not just about rendering—it’s about understanding transformations across spaces.

By introducing gesture control:

* Abstract math becomes physical interaction

* Learning becomes experiential

* Users develop spatial intuition instead of memorizing formulas

This is especially impactful for:

* Beginners struggling with 3D transformations

* Students visualizing coordinate systems

* AR/VR-oriented learning systems

⸻

🧩 Extending Beyond the Project

This feature is not just a UI improvement—it opens doors to:

🔹 Digital Twin Interaction

* Real-world hand → virtual object mapping

* Simulate real-time changes intuitively

🔹 AR/VR Learning Systems

* Gesture-driven education environments

🔹 Touchless Interfaces

* No hardware dependency beyond a camera

⸻

🎯 The Result

What started as a visualization tool evolved into an interactive learning system.

With hand gestures:

* The pipeline is no longer just observed

* It is experienced

And that shift—from passive viewing to active manipulation—is where real understanding begins.