
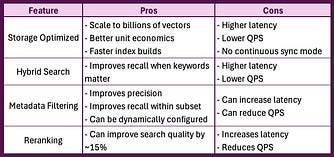

Vector Search is a great way to build a semantic layer over your unstructured data, but how do you decide what features to include in your endpoint? As shown in the matrix below, most decisions come down to a quality vs latency tradeoff.

However, if you need lower operating costs as well as better quality, latency, and QPS, then you’ll want to look at the data going into the vector database itself. Improving your data parsing and chunking strategy, selecting and fine-tuning your embedding model, and optimizing your prompts can all improve metrics across the board, in exchange for more upfront planning and investment.
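
To make the chunking point concrete, here is a minimal sketch of one common baseline: fixed-size word windows with overlap. The function name `chunk_words` and the default sizes are illustrative assumptions, not part of any Vector Search API, and real pipelines often split on sentences or document structure instead.

```python
def chunk_words(text: str, chunk_size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into word windows of `chunk_size`, each sharing `overlap` words
    with the previous window. Hypothetical helper for illustration only."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must satisfy 0 <= overlap < chunk_size")

    words = text.split()
    step = chunk_size - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        # Stop once a window has reached the end of the text,
        # so we don't emit a trailing chunk that is pure overlap.
        if start + chunk_size >= len(words):
            break
    return chunks


if __name__ == "__main__":
    sample = " ".join(f"w{i}" for i in range(10))
    for chunk in chunk_words(sample, chunk_size=4, overlap=1):
        print(chunk)
```

Smaller chunks generally mean more vectors to store and search, while larger chunks can dilute what each embedding represents, so chunk size and overlap are worth tuning against your own retrieval metrics.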

Mar 2 at 5:04 PM
