
dbt Microbatch Strategy: A New Standard for Incremental Loading

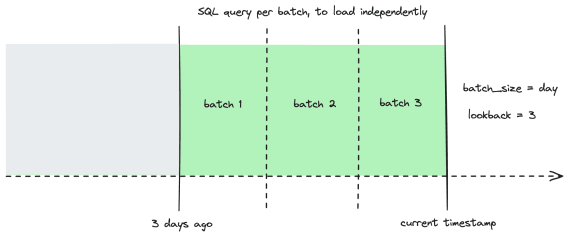

The dbt Microbatch strategy is a specialized incremental loading pattern, introduced in dbt Core v1.9, designed for high-volume, time-series data. Unlike traditional incremental strategies that process a single delta since the last run, microbatching splits the data into smaller, independent time-based batches (e.g., hourly or daily). This lets dbt process historical batches in parallel, reprocess recent batches to capture late-arriving data, and retry only the batches that fail instead of rerunning the whole model.

How to use:

Define the Strategy: Set `materialized='incremental'` and `incremental_strategy='microbatch'` in your model's configuration block.

Configure the Granularity: Specify the `batch_size` (`'hour'`, `'day'`, `'month'`, or `'year'`), the `begin` date from which dbt builds the first batch on an initial run or full refresh, and `lookback`, the number of batches before the current one that dbt reprocesses on each run to pick up new or updated data.

Identify the Event Time: Set `event_time` in your model's config to its timestamp column; dbt uses this column to assign rows to batches. Set `event_time` on the upstream models and sources you select from as well, so dbt can filter them.

Let dbt Apply the Filter: You do not write an `is_incremental()` block or a manual `WHERE` clause. For each batch, dbt automatically restricts every upstream `ref()` or `source()` that has `event_time` configured to that batch's time window.

Enable Parallelism: Configure `threads` in `profiles.yml` so dbt can process multiple batches simultaneously, for example during the initial backfill.
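The steps above can be sketched as two model files. This is a minimal illustration assuming dbt Core v1.9 or later; the model names (`stg_page_views`, `fct_page_views`), the source `web.raw_page_views`, the column names, and the `begin` date are hypothetical placeholders, not taken from a real project.

```sql
-- models/staging/stg_page_views.sql  (hypothetical upstream model)
-- Configuring event_time here is what lets dbt filter this model
-- down to each batch's time window when a microbatch model refs it.
{{ config(
    materialized='view',
    event_time='viewed_at'
) }}

select
    page_view_id,
    user_id,
    page_url,
    viewed_at
from {{ source('web', 'raw_page_views') }}


-- models/marts/fct_page_views.sql  (hypothetical microbatch model)
-- event_time: column dbt uses to assign rows to batches
-- batch_size: one batch per day
-- begin: first batch built on the initial run or a full refresh
-- lookback: also reprocess the 3 batches before the current one
{{ config(
    materialized='incremental',
    incremental_strategy='microbatch',
    event_time='viewed_at',
    batch_size='day',
    begin='2024-01-01',
    lookback=3
) }}

-- No is_incremental() block and no hand-written WHERE clause:
-- dbt restricts ref('stg_page_views') to the current batch's window
-- because that upstream model has event_time configured.
select
    page_view_id,
    user_id,
    lower(page_url) as page_url,
    viewed_at
from {{ ref('stg_page_views') }}
```

With `lookback=3`, a scheduled run should rebuild roughly the current day's batch plus the three days before it, overwriting only the target rows whose `viewed_at` falls inside each batch's window. For a targeted backfill, `dbt run` also accepts `--event-time-start` and `--event-time-end` to build only the batches in that range.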

When to use:

High-Volume Time-Series Data: When working with massive event logs, clickstreams, or IoT data where a full refresh is prohibitively expensive.

Long Backfills: When you need to load months or years of historical data and want to avoid a single, massive, long-running query.

Late-Arriving Data: When upstream records often arrive with a delay, as the `lookback` setting automatically reprocesses recent batches to ensure completeness.

When not to use:

Non-Time-Series Tables: If your data doesn't have a reliable "event timestamp" or if you are tracking slowly changing dimensions (SCDs).

Small Datasets: If your table can be fully refreshed or handled by a standard `append` strategy in seconds, the complexity of microbatching is unnecessary.

Frequent Global Aggregates: If your model calculates metrics across the entire history (e.g., `count(distinct user_id)` since inception). Each batch only sees its own time window, so microbatching works best when every window can be computed independently.
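To make the last point concrete: a per-day metric fits microbatching, while a lifetime one does not. The sketch below is hypothetical; it assumes an upstream model `stg_page_views` with `event_time='viewed_at'` configured, and uses dbt's cross-database `dbt.date_trunc` macro so the truncation is adapter-neutral.

```sql
-- models/marts/fct_daily_active_users.sql  (hypothetical)
-- event_time here is an output column: dbt uses it to decide which
-- target rows each day's batch replaces.
{{ config(
    materialized='incremental',
    incremental_strategy='microbatch',
    event_time='activity_day',
    batch_size='day',
    begin='2024-01-01'
) }}

-- Batch-safe: each output row depends only on events from its own day,
-- which is exactly the slice dbt passes to this query for a daily batch.
-- A lifetime metric (count(distinct user_id) since inception) cannot be
-- built this way, because each batch only sees one day of stg_page_views.
select
    {{ dbt.date_trunc('day', 'viewed_at') }} as activity_day,
    count(distinct user_id) as daily_active_users
from {{ ref('stg_page_views') }}
group by 1
```

Because each daily result is self-contained, reprocessing a day through `lookback` simply recomputes that day's row; a lifetime total would instead need a separate, non-microbatch model that scans the full history.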

More on dbt microbatch strategy here: