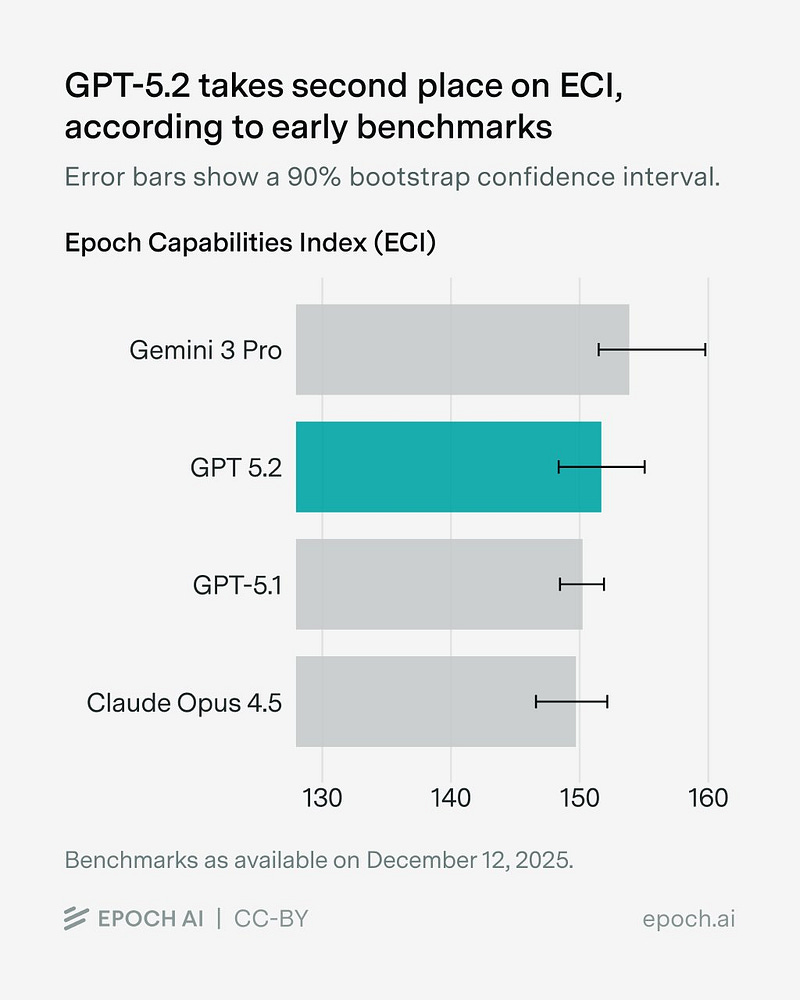

GPT-5.2 scores 152 on the Epoch Capabilities Index (ECI), our tool for aggregating benchmark scores. This puts it second only to Gemini 3 Pro.

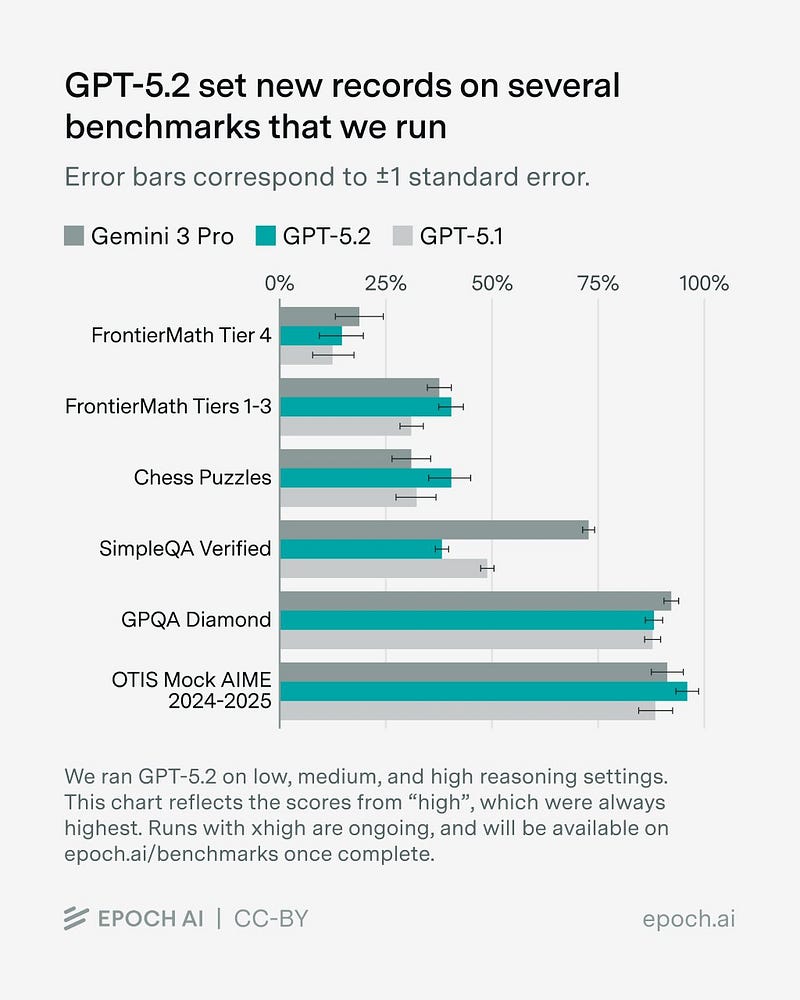

GPT-5.2 ranks first or second on most of the benchmarks we run ourselves, including a top score on FrontierMath Tiers 1–3 and our new chess puzzles benchmark. The exception is SimpleQA Verified, where it scores notably worse than even previous GPT-5 series models.

Our AIME variant, OTIS Mock AIME 2024-2025, is nearly saturated. There remains a single problem no model has solved, shown below. The diagram is given to the model in the Asymptote vector graphics language.

The ECI score for GPT-5.2 also currently includes ARC-AGI v1 and SWE-bench Verified (bash only). We’ll add new scores as they come out, including our own runs of GPT-5.2 with xhigh reasoning effort.

Check our hub for the latest!