🧠 What does “responsible AI” actually require?

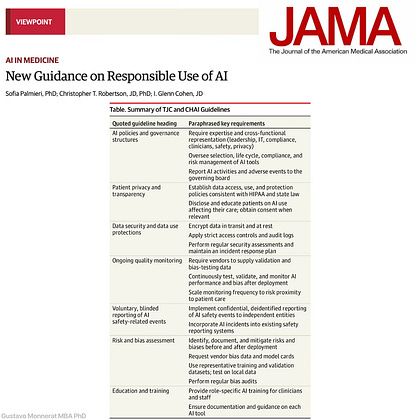

AI governance: Cross-functional leadership (clinical, IT, compliance, privacy, safety) overseeing AI selection, lifecycle management, risk, and board-level reporting.

Patient privacy & transparency: Clear data-use policies aligned with HIPAA and state law, with disclosure to patients when AI directly affects their care.

Strong data security: Encryption in transit and at rest, strict access controls, audit logs, and active incident-response planning.

Local validation & continuous quality monitoring: Do not rely on vendor claims alone. Test on local data, monitor post-deployment performance and drift, and scale oversight in proportion to patient risk.
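One way to make "monitor drift" concrete is to compare the model's recent output scores against a baseline captured during local validation. The sketch below uses the Population Stability Index (PSI), a common drift statistic; it is an illustration, not a prescribed method. The function name `psi`, the 10-bin layout, and the 0.2 alert threshold (a widely used rule of thumb) are all assumptions, and it presumes scores are probabilities in [0, 1].

```python
import math

def psi(baseline, recent, n_bins=10, eps=1e-6):
    """Population Stability Index between two samples of scores in [0, 1].

    Hypothetical helper: equal-width bins, with empty bins floored at `eps`
    so the logarithm is always defined.
    """
    def proportions(scores):
        counts = [0] * n_bins
        for s in scores:
            # Clamp so a score of exactly 1.0 falls in the last bin.
            idx = min(int(s * n_bins), n_bins - 1)
            counts[idx] += 1
        total = len(scores)
        return [max(c / total, eps) for c in counts]

    base_p = proportions(baseline)
    recent_p = proportions(recent)
    return sum((r - b) * math.log(r / b) for b, r in zip(base_p, recent_p))

# Assumed alert threshold; tune it to the tool's patient-risk level.
DRIFT_THRESHOLD = 0.2

# Illustrative data only: a uniform baseline and a shifted recent sample.
baseline_scores = [i / 100 for i in range(100)]
recent_scores = [min(s + 0.3, 1.0) for s in baseline_scores]

needs_review = psi(baseline_scores, recent_scores) > DRIFT_THRESHOLD
```

A check like this only flags a change in the score distribution. It does not replace measuring accuracy against locally confirmed outcomes once those labels become available.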

Bias & risk assessment: Identify, document, and mitigate bias and safety risks before and after deployment.
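As a rough illustration of what documenting bias can look like in practice, the sketch below computes sensitivity (true-positive rate) per patient subgroup and reports the largest gap between groups. The record layout `(group, y_true, y_pred)`, the function names, and the choice of sensitivity as the metric are assumptions made for this example; a real assessment would pick metrics and subgroups with clinical input.

```python
from collections import defaultdict

def sensitivity_by_group(records):
    """Return {group: sensitivity} from (group, y_true, y_pred) tuples with 0/1 labels.

    Hypothetical helper: groups with no positive cases are omitted,
    because sensitivity is undefined for them.
    """
    true_pos = defaultdict(int)
    positives = defaultdict(int)
    for group, y_true, y_pred in records:
        if y_true == 1:
            positives[group] += 1
            if y_pred == 1:
                true_pos[group] += 1
    return {g: true_pos[g] / positives[g] for g in positives}

def max_gap(rates):
    """Largest difference in a metric between any two groups."""
    return max(rates.values()) - min(rates.values())

# Illustrative records only, not real patient data.
records = [
    ("A", 1, 1), ("A", 1, 1), ("A", 1, 0), ("A", 1, 1), ("A", 0, 0),
    ("B", 1, 1), ("B", 1, 0), ("B", 1, 0), ("B", 1, 1),
]

rates = sensitivity_by_group(records)
gap = max_gap(rates)
```

Running the same calculation before deployment and on a regular schedule afterward gives a documented trail of whether the gap is shrinking or growing.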

Safety event reporting: Integrate AI-related incidents into existing safety systems and support confidential, de-identified reporting to independent bodies.

Education & training: Role-specific AI training for clinicians and staff, with clear tool-level documentation.

👉 Where do you see the biggest gap today: local validation, bias monitoring, governance ownership, or operational capacity?