Fine-tuning is a powerful technique product teams can use to adapt LLMs to their needs. But many confuse it with RAG or RL.

What's the difference?

And why should you care as a PM?

In many situations, context engineering hits its limit:

You need a consistent style, brand voice, or complex structure

You want to accurately classify inputs

You struggle to eliminate mistakes (failure modes)

Your prompt becomes too long or too complex

There are two primary ways to adjust a model to your needs:

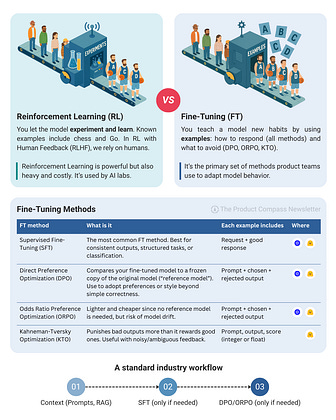

Method 1: Reinforcement Learning (RL)

You let the model experiment and learn from rewards. Famous examples include chess and Go. In RL from Human Feedback (RLHF), human ratings of the model's outputs provide the reward signal.

RL is powerful but also heavy and costly. It’s mostly used by AI labs.

Method 2: Fine-Tuning

You teach a model new habits by using examples: how to respond (all methods) and what to avoid (DPO, ORPO, KTO).

It’s the primary set of methods product teams use to adapt model behavior.

Four Fine-Tuning Methods

Popular FT methods include:

Supervised Fine-Tuning (SFT): The most common FT method. Best for consistent outputs, structured tasks, or classification. Each example includes a request and a good response.

Direct Preference Optimization (DPO): Compares your fine-tuned model to a frozen copy of the original model (“reference model”). Use it to adopt preferences or style beyond simple correctness. Each example includes a prompt, a chosen output, and a rejected output.

Odds Ratio Preference Optimization (ORPO): Lighter and cheaper since no reference model is needed, but with a higher risk of model drift. Uses the same example format as DPO.

Kahneman-Tversky Optimization (KTO): Punishes bad outputs more than it rewards good ones. Useful with noisy or ambiguous feedback. Each example includes a prompt, an output, and a score (int or float).
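To make the example formats concrete, here is a minimal Python sketch of one training record per method. The field names (`prompt`, `completion`, `chosen`, `rejected`, `score`) and the sample texts are assumptions for illustration only. Real tools name these fields differently, and some libraries use a boolean label instead of a numeric score for KTO.

```python
# Hypothetical records: field names and texts are illustrative assumptions,
# not the schema of any specific fine-tuning tool.

# SFT: a request plus one good response.
sft_example = {
    "prompt": "Classify the ticket: 'I was charged twice this month.'",
    "completion": "billing",
}

# DPO and ORPO share a format: a prompt, a preferred output, a rejected one.
preference_example = {
    "prompt": "Write a one-line welcome message for new users.",
    "chosen": "Welcome aboard! Your workspace is ready when you are.",
    "rejected": "Hello user. Account created.",
}

# KTO: a prompt, a single output, and a score for that output.
kto_example = {
    "prompt": "Summarize the refund policy in one sentence.",
    "completion": "We sometimes refund things.",
    "score": 0.0,  # assumed convention: low score = undesirable output
}

REQUIRED_FIELDS = {
    "sft": {"prompt", "completion"},
    "dpo": {"prompt", "chosen", "rejected"},
    "orpo": {"prompt", "chosen", "rejected"},
    "kto": {"prompt", "completion", "score"},
}


def missing_fields(method: str, record: dict) -> set:
    """Return the fields the record lacks for the given method."""
    return REQUIRED_FIELDS[method] - set(record)
```

A check like `missing_fields("dpo", sft_example)` shows why SFT data cannot be reused for DPO as-is: the record has no chosen and rejected outputs to compare.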

A Standard Industry Workflow

Step 1: Start with context engineering (retrieval, prompts)

Step 2: Add SFT if needed (structure, tone, consistency)

Step 3: Apply DPO/ORPO if needed (preferences, style, nuances)
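The "if needed" logic in these steps can be sketched as a small escalation loop: try the cheapest stage first and move on only when results fall short. The `meets_bar` callback is hypothetical. It stands in for whatever evaluation your team runs after each stage.

```python
from typing import Callable

# Stages in the order the workflow suggests, cheapest first.
STAGES = ["context engineering", "SFT", "DPO/ORPO"]


def choose_stage(meets_bar: Callable[[str], bool]) -> str:
    """Return the first stage whose results meet the quality bar.

    `meets_bar` is a hypothetical callback that reports whether the
    model, after applying the given stage, is good enough to ship.
    """
    for stage in STAGES:
        if meets_bar(stage):
            return stage
    # Nothing passed: the last stage is as far as this workflow goes.
    return STAGES[-1]
```

For example, `choose_stage(lambda stage: stage == "SFT")` returns `"SFT"`: prompting alone was not enough, so the team stops at supervised fine-tuning and skips preference tuning.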

Hope that helps!

—

P.S. Enjoy this?

In my new post, you will find detailed examples for each fine-tuning method. Plus, I demonstrate SFT and DPO step-by-step: productcompass.pm/p/wha…

Want to break into the top 10% of AI PMs?

I recommend the AI PM Certification by Miqdad Jaffer (Product Lead, OpenAI). I joined the 2024 cohort and loved it. It's the #1 AI PM cohort on Maven.

The next session: Oct 18, 2025.

You can secure a $550 discount here: bit.ly/aipmcohort