Everyone talks about the importance of “intent” when building AI agents. Few explain what it actually means.

Agents don’t fail because they can’t reason.

They fail because intent is underspecified.

The fix isn't adding more instructions. It's making intent explicit.

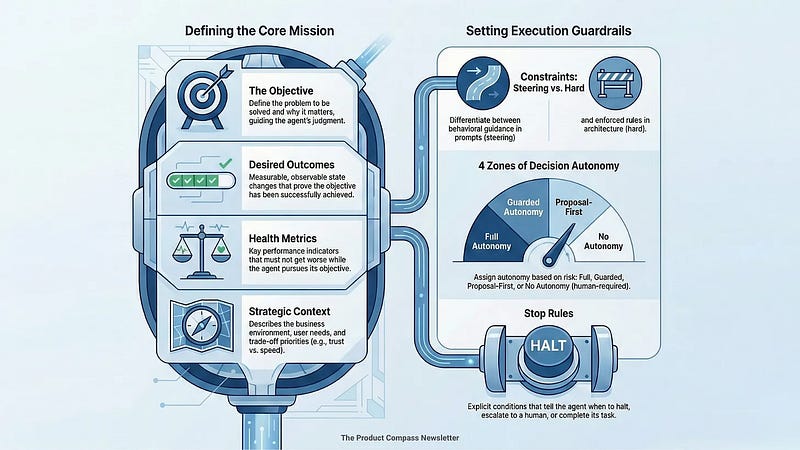

Here's the Intent Engineering Framework:

1. Objective

Define the problem and why it matters. The objective guides reasoning and trade-offs when instructions run out.

2. Desired Outcomes

Observable states that prove success (not lagging indicators). Express them from the user’s perspective, not the agent’s.

3. Health Metrics

What must not degrade while optimizing. This is how you avoid Goodhart’s Law.
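
One way to make this concrete is a guardrail check that rejects an optimization if any health metric drops too far. This is a minimal sketch, not part of the framework itself: `HealthMetric`, `degraded_metrics`, `accept_change`, and the thresholds are hypothetical names and values, and it assumes every metric is "higher is better".

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HealthMetric:
    """A metric that must not degrade (hypothetical structure)."""
    name: str
    baseline: float
    max_degradation: float  # allowed relative drop, e.g. 0.05 means 5%


def degraded_metrics(metrics, current):
    """Return the names of health metrics that fell below their allowed floor.

    Assumes higher values are better for every metric.
    """
    failed = []
    for metric in metrics:
        floor = metric.baseline * (1 - metric.max_degradation)
        if current[metric.name] < floor:
            failed.append(metric.name)
    return failed


def accept_change(target_gain, metrics, current):
    """Accept only if the target improved AND no health metric degraded."""
    return target_gain > 0 and not degraded_metrics(metrics, current)


# Illustrative values only.
metrics = [
    HealthMetric("csat", baseline=4.5, max_degradation=0.05),
    HealthMetric("resolution_rate", baseline=0.80, max_degradation=0.10),
]
current = {"csat": 4.4, "resolution_rate": 0.70}
print(degraded_metrics(metrics, current))
print(accept_change(0.2, metrics, current))
```

The target metric can go up while `accept_change` still says no. That is the point: the agent cannot "win" by trading away something you care about.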

4. Strategic Context

Where the agent sits in the larger system: the strategy, the business model, the other agents around it. Not all of this needs to live in the prompt.

5. Constraints

Steering (context window): influence reasoning

Hard (architecture): enforce compliance

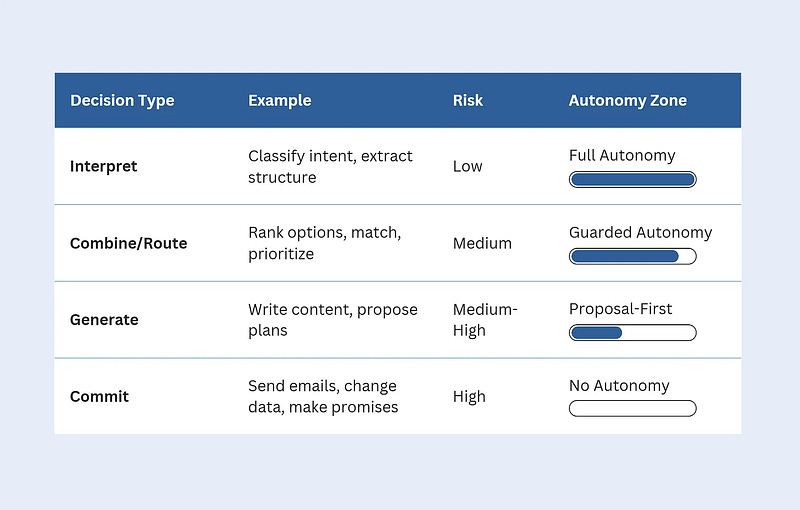
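
The difference between the two constraint types can be sketched in a few lines. Assume a hypothetical support agent that can issue refunds: the steering constraints are just text in the prompt, while the hard constraint is a check in code that runs regardless of what the model proposed. All names and the refund limit below are illustrative assumptions.

```python
# Steering constraints: text placed in the context window.
# They influence reasoning, but nothing forces the model to follow them.
STEERING_CONSTRAINTS = [
    "Prefer store credit over cash refunds when the customer accepts it.",
    "Keep replies under 150 words.",
]

# Hard constraint: a limit enforced in code (hypothetical value).
MAX_REFUND_USD = 200.0


class ConstraintViolation(Exception):
    """Raised when a proposed action breaks a hard constraint."""


def build_system_prompt(objective):
    """Render the objective and steering constraints into prompt text."""
    rules = "\n".join(f"- {rule}" for rule in STEERING_CONSTRAINTS)
    return f"Objective: {objective}\nGuidelines:\n{rules}"


def execute_refund(amount_usd, issue_refund):
    """Run the refund only if it passes the hard limit.

    `issue_refund` stands in for whatever payment call the system uses.
    """
    if amount_usd > MAX_REFUND_USD:
        raise ConstraintViolation(
            f"refund {amount_usd} exceeds limit {MAX_REFUND_USD}"
        )
    return issue_refund(amount_usd)


print(build_system_prompt("Resolve billing issues on first contact"))
print(execute_refund(50.0, lambda amount: f"refunded {amount}"))
```

A prompt can be ignored or argued around. `execute_refund` cannot.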

6. Decision Types & Autonomy

Autonomy is not binary:

Full → Guarded → Proposal-first → Human-required

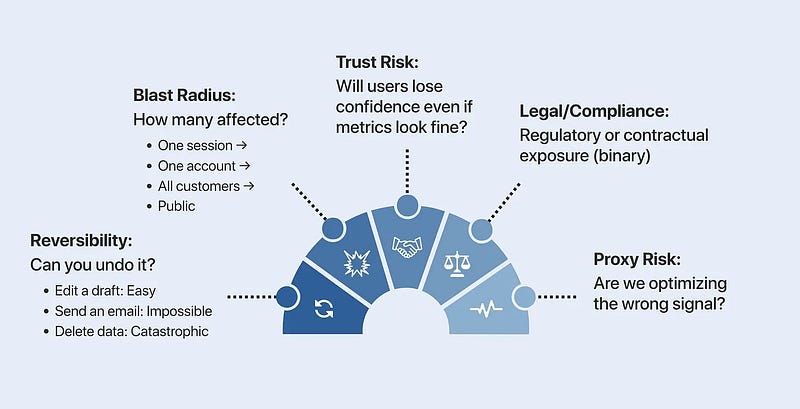

Assign it based on blast radius and reversibility.
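
As a sketch, the assignment can be a plain lookup on those two inputs. The four levels come from the list above; the specific mapping, the `BlastRadius` scale, and the function name are assumptions for illustration, and your own thresholds will differ.

```python
from enum import Enum


class Autonomy(Enum):
    FULL = "full"
    GUARDED = "guarded"
    PROPOSAL_FIRST = "proposal-first"
    HUMAN_REQUIRED = "human-required"


class BlastRadius(Enum):
    """Hypothetical three-step scale for how much a decision can affect."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3


def assign_autonomy(blast_radius, reversible):
    """Map blast radius and reversibility to an autonomy level.

    Illustrative policy: irreversible decisions move one step toward
    human control compared with reversible ones of the same blast radius.
    """
    if blast_radius is BlastRadius.HIGH:
        return Autonomy.PROPOSAL_FIRST if reversible else Autonomy.HUMAN_REQUIRED
    if blast_radius is BlastRadius.MEDIUM:
        return Autonomy.GUARDED if reversible else Autonomy.PROPOSAL_FIRST
    return Autonomy.FULL if reversible else Autonomy.GUARDED


print(assign_autonomy(BlastRadius.LOW, reversible=True))
print(assign_autonomy(BlastRadius.HIGH, reversible=False))
```

Writing the mapping down forces the conversation about which decisions belong at which level before the agent makes them.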

7. Stop Rules

When to halt, escalate, or complete.

These are execution boundaries, not suggestions.
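
A stop rule treated as an execution boundary might look like the check below, called by the agent loop before every step. This is a sketch under assumptions: `RunState`, `Verdict`, the field names, and the default limits are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    CONTINUE = "continue"
    COMPLETE = "complete"
    ESCALATE = "escalate"
    HALT = "halt"


@dataclass
class RunState:
    """What the loop tracks about the current run (hypothetical fields)."""
    steps_taken: int
    cost_usd: float
    consecutive_errors: int
    outcome_reached: bool


def check_stop_rules(state, max_steps=20, max_cost_usd=5.0, max_errors=3):
    """Decide whether the run may take another step.

    Rules are checked in a fixed order; the limits are illustrative.
    """
    if state.outcome_reached:
        return Verdict.COMPLETE   # desired outcome observed: stop, done
    if state.cost_usd >= max_cost_usd:
        return Verdict.HALT       # budget exhausted: stop immediately
    if state.consecutive_errors >= max_errors or state.steps_taken >= max_steps:
        return Verdict.ESCALATE   # stuck or looping: hand off to a human
    return Verdict.CONTINUE


state = RunState(steps_taken=4, cost_usd=0.6, consecutive_errors=3,
                 outcome_reached=False)
print(check_stop_rules(state))
```

The model never sees this function and cannot talk its way past it. The loop simply refuses to run another step unless the verdict is `CONTINUE`.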

—

The reasoning layer proposes.

The orchestration layer enforces.

If a constraint matters, enforce it. If a decision is risky, gate it. If failure is expensive, define stop rules before you ship.

The models are already good enough.

What separates agents that work in production from those that fail quietly is intent, not intelligence.

Without explicit intent, your agent will optimize something you never said out loud.

—

🎁 Full framework with examples, templates, and risk lenses (no email, no paywall): productcompass.pm/p/int…

Note: Use it to ask the right questions, not to add complexity.