Dynamic Resource Allocations - Moving Beyond AI Scaling

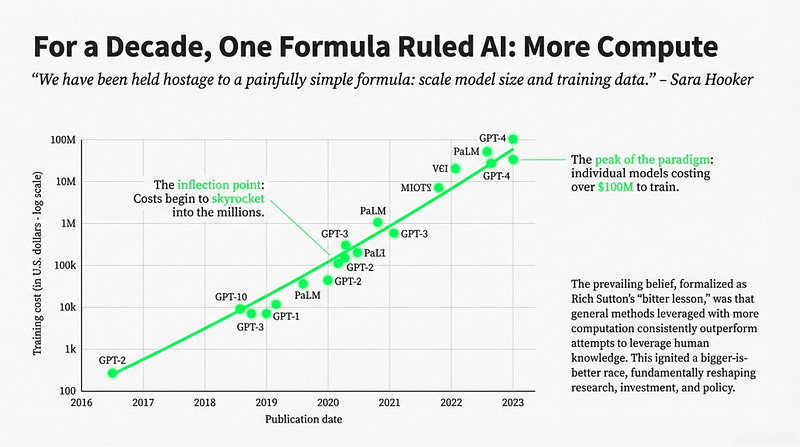

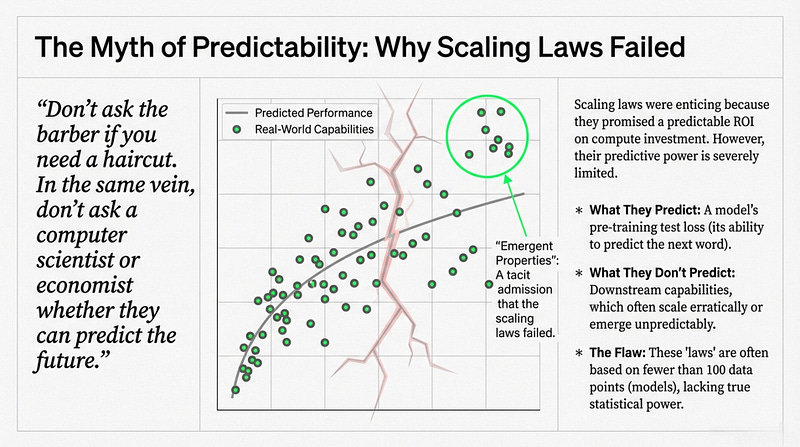

An examination of the transition away from traditional AI scaling, in which progress was driven largely by increasing model size and training data.

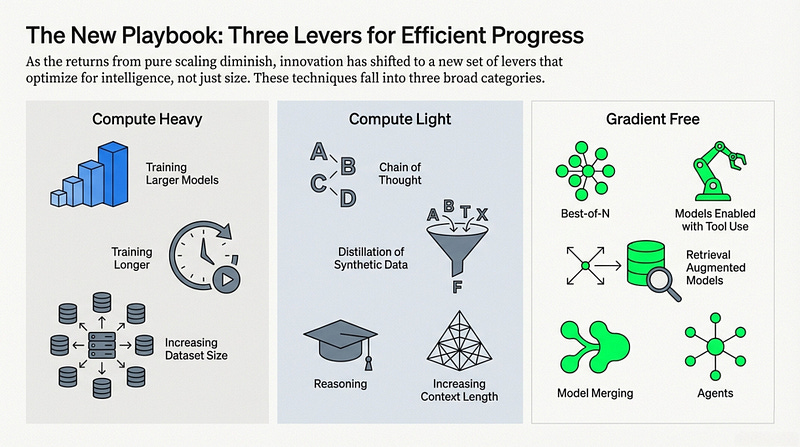

This "bigger is better" philosophy is being replaced by a focus on inference-time compute, where models are trained to "think" longer rather than just possess more parameters.

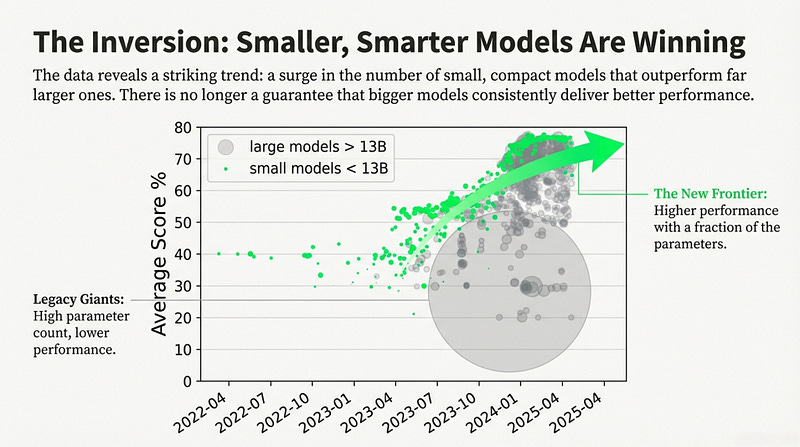

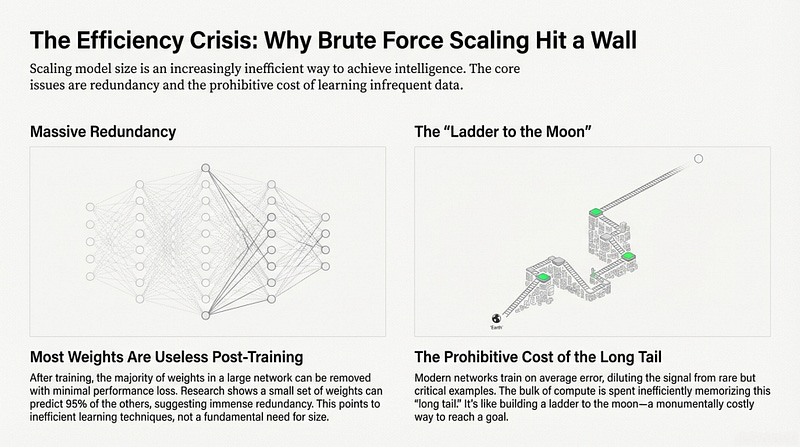

Evidence shows that smaller, optimized models frequently outperform massive legacy systems, proving that ♨️ data quality and ♨️ algorithmic efficiency are more critical than raw brute force.

This shift has significant implications for research culture and accessibility, as the reliance on massive capital for compute has previously marginalized academia and concentrated power in industry labs.

Techniques like model distillation and synthetic data generation are now enabling high-level intelligence to be compressed into more efficient, run-anywhere architectures.
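To make the distillation idea concrete, here is a minimal sketch of the classic soft-label objective: a small "student" is trained to match the temperature-softened output distribution of a larger "teacher". The function names, the example logits, and the default temperature of 2.0 are illustrative assumptions, not taken from the post or the linked paper.

```python
import math

def softmax(logits, temperature=1.0):
    """Convert raw scores to probabilities; higher temperature gives a softer distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) on temperature-softened outputs, scaled by T^2.

    Hypothetical helper for illustration; real training code would compute
    this per batch inside a deep learning framework.
    """
    p = softmax(teacher_logits, temperature)  # teacher's soft targets
    q = softmax(student_logits, temperature)  # student's current predictions
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return temperature ** 2 * kl

# Made-up logits over three classes.
teacher = [4.0, 1.0, 0.5]
close_student = [3.5, 1.2, 0.4]
far_student = [0.5, 1.0, 4.0]
print(distillation_loss(teacher, close_student))
print(distillation_loss(teacher, far_student))
```

The loss is zero when the student reproduces the teacher's distribution exactly and grows as the two diverge, which is what lets a compact model inherit behaviour from a much larger one.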

Ultimately, the future of AI lies in dynamic resource allocation, moving the frontier from building the largest models to developing the most effective and efficient thinkers.
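One simple way to picture dynamic resource allocation at inference time is adaptive sampling: keep drawing answers from a model and stop as soon as they agree, so easy questions use little compute and hard ones use more. The sketch below is an assumption-laden illustration of that idea, not a method described in the post; `adaptive_vote`, its thresholds, and the stand-in samplers are all hypothetical.

```python
from collections import Counter

def adaptive_vote(sample_answer, min_samples=3, max_samples=15, agreement=0.8):
    """Majority vote with early stopping.

    sample_answer: zero-argument callable returning one candidate answer
                   (a stand-in for one stochastic model call).
    Returns (answer, samples_used). Assumes max_samples >= 1.
    """
    votes = Counter()
    for n in range(1, max_samples + 1):
        votes[sample_answer()] += 1
        answer, count = votes.most_common(1)[0]
        # Stop once enough samples agree; otherwise spend more compute.
        if n >= min_samples and count / n >= agreement:
            return answer, n
    # Budget exhausted: fall back to the current majority answer.
    return answer, max_samples

# Stand-in samplers: an "easy" question with a stable answer,
# and a "harder" one where early samples disagree.
easy = lambda: "42"
hard_stream = iter(["a", "b", "a", "a", "a", "a", "a"])
hard = lambda: next(hard_stream)

print(adaptive_vote(easy))
print(adaptive_vote(hard))
```

The easy case stops at the minimum sample count, while the harder case draws extra samples before settling, which is the "think longer only when needed" behaviour in miniature.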

Useful Read and Watch by Sara Hooker: papers.ssrn.com/sol3/pa…