Everyone’s hyped about AI agents and “intelligent” data products right now.

But a huge amount of real-world pain still comes from… boring data pipelines.

I just read a great piece from SeattleDataGuy on full refresh vs incremental pipelines and it’s a perfect reminder: this “unsexy” stuff is where data teams either print money or burn it.

A few takeaways I wish more data/analytics engineers internalized:

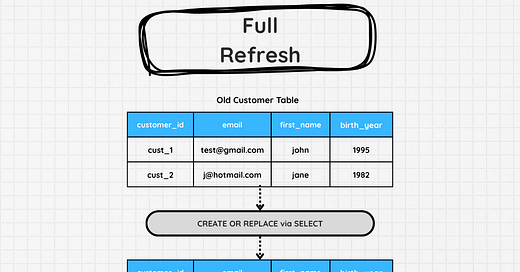

Full refresh is dumb-simple and safe(ish): rebuild the whole table, often wrapped in a WAP (write–audit–publish) flow so prod only sees validated data. Great for small tables or when you don’t trust change tracking.
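To make the WAP idea concrete, here is a minimal sketch using Python's built-in `sqlite3` as a stand-in for a real warehouse. The table names (`orders`, `orders_staging`), the columns, and the two audit rules are hypothetical choices for illustration, not something from the original piece; a real warehouse would have its own swap mechanism.

```python
import sqlite3


def full_refresh_wap(conn, source_rows):
    """Rebuild `orders` from scratch: write to staging, audit, then publish.

    Assumes `conn` was opened with isolation_level=None (autocommit), so the
    explicit BEGIN/COMMIT below controls the publish step.
    """
    cur = conn.cursor()

    # Write: build the complete new table off to the side.
    cur.execute("DROP TABLE IF EXISTS orders_staging")
    cur.execute(
        "CREATE TABLE orders_staging ("
        "order_id INTEGER PRIMARY KEY, amount REAL NOT NULL)"
    )
    cur.executemany("INSERT INTO orders_staging VALUES (?, ?)", source_rows)

    # Audit: hypothetical checks (non-empty, no negative amounts).
    row_count, negatives = cur.execute(
        "SELECT COUNT(*), COALESCE(SUM(amount < 0), 0) FROM orders_staging"
    ).fetchone()
    if row_count == 0 or negatives > 0:
        raise ValueError(
            f"audit failed: rows={row_count}, negative amounts={negatives}"
        )

    # Publish: swap staging into place in one transaction.
    cur.execute("BEGIN")
    cur.execute("DROP TABLE IF EXISTS orders")
    cur.execute("ALTER TABLE orders_staging RENAME TO orders")
    cur.execute("COMMIT")


conn = sqlite3.connect(":memory:", isolation_level=None)
full_refresh_wap(conn, [(1, 10.0), (2, 5.5)])
```

If the audit raises, the existing `orders` table is never touched, which is the whole point: consumers only ever see a version that passed the checks.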

But at scale, full refresh is a tax: long runtimes, tight windows, angry stakeholders, and a warehouse bill finance will eventually question.

“Incremental” is not one pattern. You’ve got ID-based appends, date-based with lookback windows, merge/upsert, even aggregation-style corrections in accounting-like domains.

The moment you go incremental, you’re forced to really understand your data: ordering, late arrivals, mutable records, corrections, and what your warehouse actually supports (MERGE, insert overwrite, partitions, etc.).
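As a rough illustration of one of these patterns, the sketch below combines a date-based lookback window with an upsert, again using `sqlite3` as a stand-in. The `events` table, its columns, and the three-day default window are assumptions made up for this example; SQLite's `INSERT OR REPLACE` plays the role a `MERGE` would in a warehouse that supports it.

```python
import sqlite3
from datetime import date, timedelta


def create_target(conn):
    """Create the hypothetical target table keyed on event_id."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS events ("
        "event_id INTEGER PRIMARY KEY, event_date TEXT NOT NULL, amount REAL)"
    )
    conn.commit()


def incremental_load(conn, source_rows, run_date, lookback_days=3):
    """Upsert only the source rows that fall inside the lookback window.

    source_rows: iterable of (event_id, event_date as 'YYYY-MM-DD', amount).
    Returns the number of rows processed.
    """
    # ISO date strings sort chronologically, so string comparison is enough.
    window_start = (run_date - timedelta(days=lookback_days)).isoformat()
    recent = [row for row in source_rows if row[1] >= window_start]

    # Existing keys are overwritten, new keys are inserted.
    conn.executemany(
        "INSERT OR REPLACE INTO events (event_id, event_date, amount) "
        "VALUES (?, ?, ?)",
        recent,
    )
    conn.commit()
    return len(recent)


conn = sqlite3.connect(":memory:")
create_target(conn)
conn.executemany(
    "INSERT INTO events VALUES (?, ?, ?)",
    [(1, "2024-01-01", 10.0), (2, "2024-01-09", 20.0)],
)
conn.commit()

source = [
    (1, "2024-01-01", 99.0),  # correction to an old record
    (2, "2024-01-09", 25.0),  # late update inside the window
    (3, "2024-01-10", 5.0),   # brand-new record
]
processed = incremental_load(conn, source, run_date=date(2024, 1, 10))
```

In this run, records 2 and 3 are picked up, but the correction to record 1 is silently skipped because it sits outside the window. That is exactly the kind of behavior you have to reason about up front: how late can data arrive, and how far back can it change?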

“Incremental is always better” is not a rule. It’s a set of tradeoffs: simplicity vs cost vs latency vs correctness.

If you want to grow beyond “pipeline plumber” to trusted data engineer / analytics engineer, this is the level you need to think at: How does this table grow, change, and get corrected over time — and what does that imply for my load pattern?

Curious: in your stack today, what % of your core models are full refresh vs incremental, and is that by design or just legacy?