
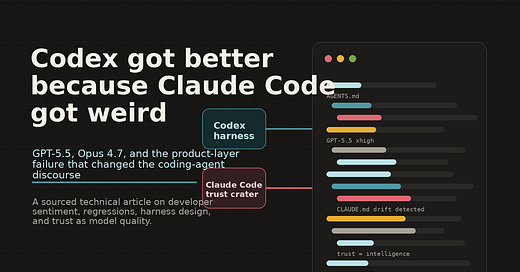

The product-layer vs. model-capability framing is what most comparisons miss. I ran a Claude Code harness in production for months and the pattern matched yours: undocumented behavior changes that only surfaced in edge cases.

The model was still capable. The harness assumptions had shifted around it.

The Codex point about structured inspection before writing is interesting because that read-before-write discipline was exactly what gave Claude Code an early advantage. When it stopped being consistent, trust dropped fast. Product trust is fragile in a way that model quality is not: you can benchmark model regressions, but you only notice harness drift when something quietly breaks.
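One way to make that kind of drift visible is to check recorded tool-call traces for the read-before-write discipline. This is a rough sketch, not how any real harness does it. The `ToolCall` record, the `"read"`/`"write"` action labels, and the file paths are all hypothetical; an actual harness would expose its own trace format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolCall:
    action: str  # hypothetical labels: "read" or "write"
    path: str


def writes_without_prior_read(trace):
    """Return paths that were written before being read earlier in the trace."""
    seen_reads = set()
    violations = []
    for call in trace:
        if call.action == "read":
            seen_reads.add(call.path)
        elif call.action == "write" and call.path not in seen_reads:
            violations.append(call.path)
    return violations


# Hypothetical trace: the second file is written without being inspected first.
trace = [
    ToolCall("read", "app/config.py"),
    ToolCall("write", "app/config.py"),
    ToolCall("write", "app/main.py"),
]
print(writes_without_prior_read(trace))  # ['app/main.py']
```

Run over saved traces on a schedule, a check like this would turn a silent shift in harness behavior into a failing assertion instead of something you discover in an edge case.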

May 11 at 12:10 PM
