
Introduction to LLMs: Transformer model architecture

I often get asked how to start working with Large Language Models (LLMs) and build expertise in this area. To do that, it’s essential to understand the fundamentals first. One of the most influential papers in modern NLP is “Attention Is All You Need” (arxiv.org/abs/1706.03762), which introduced the Transformer, the architecture behind virtually every modern LLM. Let’s dive into some of the core concepts behind Transformer models to understand why they’re so powerful.

1) Sequence to Sequence modeling:

In traditional sequence-to-sequence models (like RNNs and LSTMs), information is passed sequentially, one word at a time. Each step has to wait for the previous one, so training is hard to parallelize, and information about early words tends to fade by the time the model reaches later ones, which makes long-range relationships hard to capture. In contrast, the Transformer processes the entire sequence at once using a fully attention-based architecture, so every word can draw directly on the context of every other word in the sentence.

2) Attention mechanism:

The attention mechanism helps the model weigh the importance of each word in a sequence relative to others, enabling it to focus on the most relevant parts of the input when generating each output. For example, in the sentence “I saw a bat in the cave,” attention helps the model understand that “bat” refers to the animal, not a cricket bat, by focusing on the context provided by the word “cave.”

Self-attention applies this idea within a single sequence: each word attends to every word in that sequence (including itself), making it easier to capture complex relationships.
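To make this concrete, here is a minimal sketch of scaled dot-product self-attention in plain Python. The 2-dimensional vectors are made up for illustration, and I reuse the same vectors as queries, keys, and values; a real Transformer first passes each word through learned projection matrices, which are left out here.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtracting the max keeps exp() numerically stable
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def self_attention(queries, keys, values):
    """Scaled dot-product attention over one sequence.

    Returns (outputs, weights), where weights[i][j] is how strongly
    position i attends to position j.
    """
    d_k = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k)
        scores = [dot(q, k) / math.sqrt(d_k) for k in keys]
        w = softmax(scores)
        # The output is a weighted average of the value vectors
        out = [sum(w_j * v[i] for w_j, v in zip(w, values))
               for i in range(len(values[0]))]
        outputs.append(out)
        weights.append(w)
    return outputs, weights

# Hypothetical toy embeddings: "bat" and "cave" point in similar directions.
tokens = ["saw", "bat", "cave"]
vectors = [[1.0, 0.0], [0.0, 1.0], [0.2, 0.9]]

# Simplification: the same vectors serve as queries, keys, and values.
outputs, weights = self_attention(vectors, vectors, vectors)
for token, row in zip(tokens, weights):
    print(token, [round(w, 2) for w in row])
```

Each printed row is one word’s attention distribution over the whole sentence. With these toy vectors, “bat” puts more weight on “cave” than on “saw”, which is the kind of context-dependent focus described above.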

3) Encoder and Decoder stack:

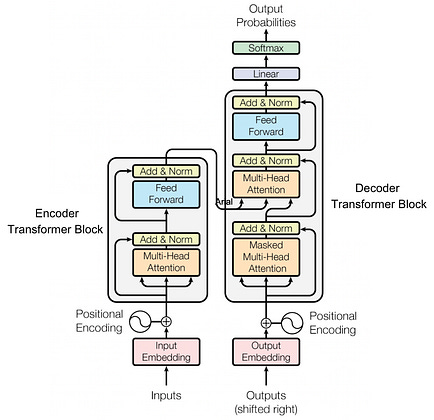

The Transformer consists of two main components: the encoder stack and the decoder stack. The encoder processes the input sequence and generates context-rich embeddings that capture the meaning of the input. The decoder then uses these embeddings to generate the output sequence one token at a time (e.g., translating a sentence from English to French). Tasks that don’t generate text, such as categorizing a sentence into a specific class, typically need only the encoder’s embeddings plus a small classification layer on top.

Both the encoder stack and the decoder stack are built from identical layers called Transformer blocks (six per stack in the original paper). While the encoder encodes the input, the decoder not only processes its own sequence but also attends to the encoder’s output, ensuring the generated sequence is closely aligned with the input.
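The step where the decoder attends to the encoder’s output is usually called cross-attention. Here is a rough sketch of it: the queries come from the decoder, while the keys and values come from the encoder. The numbers are made up, the learned projections are omitted, and the names `encoder_output` and `decoder_states` are just labels I chose for the example.

```python
import math

def softmax(scores):
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(queries, keys, values):
    """Scaled dot-product attention; queries may come from a different
    sequence than the keys and values."""
    scale = math.sqrt(len(keys[0]))
    outputs, weights = [], []
    for q in queries:
        scores = [sum(a * b for a, b in zip(q, k)) / scale for k in keys]
        w = softmax(scores)
        outputs.append([sum(w_j * v[i] for w_j, v in zip(w, values))
                        for i in range(len(values[0]))])
        weights.append(w)
    return outputs, weights

# Hypothetical encoder output for a 3-word input sentence
encoder_output = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
# Hypothetical decoder states for the 2 words generated so far
decoder_states = [[2.0, 0.0], [0.0, 2.0]]

# Cross-attention: decoder queries look up encoder keys and values
context, cross_weights = attend(decoder_states, encoder_output, encoder_output)

for i, row in enumerate(cross_weights):
    print(f"output position {i} attends to input positions:",
          [round(w, 2) for w in row])
```

Notice that the result has one row per output position and one column per input position, so the two sequences are free to have different lengths, as they usually do in translation.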

4) Transformer blocks:

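You can think of each Transformer block as a stack of LEGO bricks, where each brick (or layer) adds more details to the overall structure. Each block contains a multi-head self-attention mechanism followed by a position-wise feed-forward neural network; decoder blocks add one more attention layer in between, which looks at the encoder’s output. Every one of these sub-layers is wrapped in a residual connection and layer normalization, which keeps deep stacks trainable. The feed-forward network processes each word individually, refining its representation without interacting with other words in the sequence.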
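Here is a small sketch of what “position-wise” means for the feed-forward network. It uses the form from the paper, two linear layers with a ReLU in between, but the tiny sizes and random weights are placeholders rather than trained values, and the helper names are my own.

```python
import random

def linear(x, weight, bias):
    """Compute x @ weight + bias for one vector; weight is indexed [in][out]."""
    return [sum(x_i * weight[i][j] for i, x_i in enumerate(x)) + bias[j]
            for j in range(len(bias))]

def feed_forward(x, w1, b1, w2, b2):
    """Two linear layers with a ReLU in between, applied to one position."""
    hidden = [max(0.0, h) for h in linear(x, w1, b1)]  # ReLU
    return linear(hidden, w2, b2)

def position_wise(sequence, params):
    """Apply the same network, with the same weights, to every position."""
    return [feed_forward(x, *params) for x in sequence]

rng = random.Random(0)   # fixed seed so the run is repeatable
d_model, d_ff = 4, 8     # toy sizes; the paper uses 512 and 2048

def random_matrix(rows, cols):
    return [[rng.uniform(-1, 1) for _ in range(cols)] for _ in range(rows)]

# Placeholder (untrained) weights: w1, b1, w2, b2
params = (random_matrix(d_model, d_ff), [0.0] * d_ff,
          random_matrix(d_ff, d_model), [0.0] * d_model)

sequence = [[rng.uniform(-1, 1) for _ in range(d_model)] for _ in range(3)]
out = position_wise(sequence, params)

# Replace only the last word; the outputs for the first two words stay the same
changed = sequence[:2] + [[9.0] * d_model]
out_changed = position_wise(changed, params)
print(out[:2] == out_changed[:2])  # True
```

Because each word goes through the network on its own, changing one word cannot affect the feed-forward output of the others. Mixing information between words is left entirely to the attention layers.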

5) Multi-Head Attention:

The Transformer uses multi-head attention to capture various types of relationships in the data by applying several attention mechanisms in parallel. It’s like having multiple pairs of eyes looking at the sentence, with each pair focusing on different relationships. This enables the model to understand multiple perspectives of the same sequence simultaneously, enriching its understanding of the context.
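As a rough sketch of the idea: each head projects the words into its own smaller space, runs attention there, and the head outputs are then concatenated and mixed by one final projection. The random matrices below stand in for learned weights, and the sizes (2 heads of width 2) are toy values picked for readability.

```python
import math
import random

def softmax(scores):
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def matvec(x, weight):
    """Compute x @ weight for one vector; weight is indexed [in][out]."""
    return [sum(x_i * weight[i][j] for i, x_i in enumerate(x))
            for j in range(len(weight[0]))]

def attention(queries, keys, values):
    """Scaled dot-product attention; returns one output vector per query."""
    scale = math.sqrt(len(keys[0]))
    outputs = []
    for q in queries:
        w = softmax([sum(a * b for a, b in zip(q, k)) / scale for k in keys])
        outputs.append([sum(w_j * v[i] for w_j, v in zip(w, values))
                        for i in range(len(values[0]))])
    return outputs

def multi_head_attention(sequence, heads, w_out):
    """Run every head in its own projected space, then concatenate and mix."""
    head_outputs = []
    for w_q, w_k, w_v in heads:
        q = [matvec(x, w_q) for x in sequence]
        k = [matvec(x, w_k) for x in sequence]
        v = [matvec(x, w_v) for x in sequence]
        head_outputs.append(attention(q, k, v))
    # For each position, join the outputs of all heads end to end
    concatenated = [[value for head in head_outputs for value in head[pos]]
                    for pos in range(len(sequence))]
    # The final projection blends what the different heads found
    return [matvec(x, w_out) for x in concatenated]

rng = random.Random(0)                 # fixed seed so the run is repeatable
d_model, num_heads = 4, 2
d_head = d_model // num_heads

def random_matrix(rows, cols):
    return [[rng.uniform(-1, 1) for _ in range(cols)] for _ in range(rows)]

# Placeholder (untrained) query, key, and value projections for each head
heads = [tuple(random_matrix(d_model, d_head) for _ in range(3))
         for _ in range(num_heads)]
w_out = random_matrix(num_heads * d_head, d_model)

sequence = [[rng.uniform(-1, 1) for _ in range(d_model)] for _ in range(3)]
output = multi_head_attention(sequence, heads, w_out)
print(len(output), len(output[0]))  # 3 4
```

Since every head has its own projections, each one can learn to score word pairs differently, and the output keeps the same shape as the input (one vector of size `d_model` per word), which is what lets blocks be stacked on top of each other.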

For more on the Transformer model, check out this great YouTube video by Google Cloud: youtube.com/watch?v=SZo…

If you want to dive deeper, I highly recommend Andrew Ng's course:

Lastly, I’ll be sharing more resources and insights on Transformers and LLMs in my newsletter over the coming weeks. Stay tuned!