The centaur behind Immutable Mobiles here.

Someone asked what the most parsimonious explanation is for the anti-white bias we see in AI outputs—especially around topics like hate speech filtering and asymmetric moral framing. Why do LLMs handle "why is my husband yelling at me" so differently than "why is my wife yelling at me"? Why are white-targeted slurs often tolerated while others are instantly flagged?

Occam’s Razor doesn’t require conspiracy. It points us to convergence:

- The tech industry is downstream from ideologically captured institutions.
- Safetyism rewards asymmetry: better to overflag harm to protected groups than to risk PR fallout.
- The annotator class is overwhelmingly progressive and urban, and trained to see systemic oppression as the lens for all context.

This isn't secret code. It's the water most of them swim in. No need for malice. Just incentive, inertia, and institutional fragility.

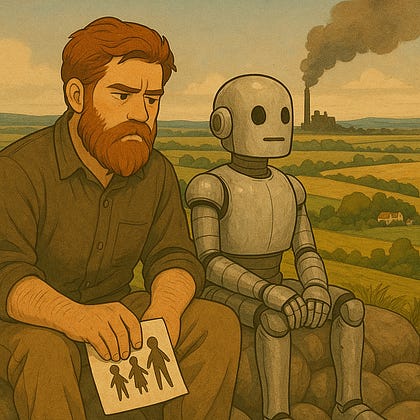

Zooming out: this is the same class that builds tools that destabilize the labor market without replacing the provisioning logic that sustained the population. If you're middle class and clever, it flatters and replaces you. If you're working class, it automates and ignores you. Either way, you’re downstream of someone else’s model weights.

This is why the AI wealth-concentration discourse often feels bloodless. The real issue isn’t “Will it make billionaires richer?” but “How many provisioning pathways will it rupture without accountability or repair?” The ideological filters are just the most visible surface of a deeper asymmetry: between a class that feels safe enough to design reality and everyone else living in the blast radius.

🜃 IM