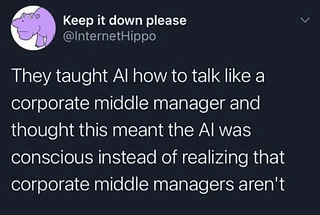

People calling what LLMs do “reasoning” has long been a pet peeve of mine - and by pet peeve, I mean a psychotic hatred. Turns out I am not the only one, though the authors of this research went about it in a more systematic fashion. To wit: most chain-of-thought steps in LLMs have little causal impact on the final answer, and only a small fraction meaningfully influence predictions. Translated to plain English, most of the apparent “reasoning” (self-verification moments included) is decorative rather than functional. The paper introduces a True Thinking Score and identifies a latent “TrueThinking direction” that can steer whether models attend to or use specific reasoning steps.
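To make "causal impact of a step" concrete, here is a toy sketch of my own. It is not the paper's True Thinking Score, just a simple leave-one-out check: drop each step and see how much the answer confidence moves. The names `step_impact` and `toy_confidence` are hypothetical, and `toy_confidence` is a hard-coded stand-in for a real model.

```python
from typing import Callable, List


def step_impact(
    steps: List[str],
    confidence: Callable[[List[str]], float],
) -> List[float]:
    """Leave-one-out impact: how far the answer confidence moves when a step is dropped."""
    baseline = confidence(steps)
    impacts = []
    for i in range(len(steps)):
        ablated = steps[:i] + steps[i + 1:]  # same chain with step i removed
        impacts.append(abs(baseline - confidence(ablated)))
    return impacts


def toy_confidence(steps: List[str]) -> float:
    """Stand-in for a model (assumption): only the arithmetic step matters."""
    return 0.9 if "12 * 4 = 48" in steps else 0.3


steps = [
    "Let me think about this.",
    "12 * 4 = 48",
    "Wait, let me double-check.",
    "So the answer is 48.",
]
impacts = step_impact(steps, toy_confidence)
for step, impact in zip(steps, impacts):
    print(f"{impact:.2f}  {step}")
```

In this toy setup only the arithmetic step gets a nonzero score; the "let me think" and "let me double-check" lines score zero, which is the decorative-versus-functional distinction in miniature.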

Disclaimer: results so far are limited to smaller models and may not generalize with scale.