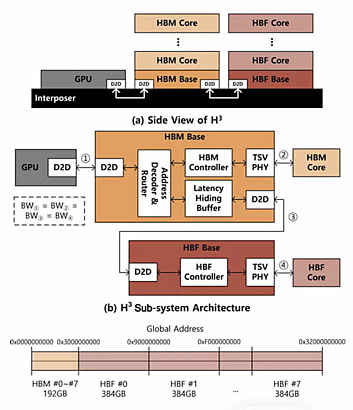

Combined HBM and HBF structure diagrams from SK Hynix's IEEE H³ paper.

The IEEE H³ paper abstract says: "Large language model (LLM) inference requires massive memory capacity to process long sequences, posing a challenge due to the capacity limitations of high bandwidth memory (HBM). High bandwidth flash (HBF) is an emerging memory device based on NAND flash that offers HBM comparable bandwidth with much larger capacity, but suffers from disadvantages such as longer access latency, lower write endurance, and higher power consumption. This paper proposes H³, a hybrid architecture designed to effectively utilize both HBM and HBF by leveraging their respective strengths. By storing read-only data in HBF and other data in HBM, H³ equipped systems can process more requests at once with the same number of GPUs than HBM-only systems, making H³ suitable for gigantic read-only use cases in LLM inference, particularly those employing a shared pre-computed key-value cache. Simulation results show that a GPU system with H³ achieves up to 2.69x higher throughput per power compared to a system with HBM-only. This result validates the cost-effectiveness of H³ for handling LLM inference with gigantic read-only data."

Source: blocksandfiles.com/flas…
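
The placement rule in the abstract (read-only data in HBF, everything else in HBM) can be illustrated with a minimal Python sketch. This is not the paper's implementation: the `Region` type, the `place` function, the region names, and all sizes and capacities below are hypothetical, chosen only to show the rule.

```python
from dataclasses import dataclass


@dataclass
class Region:
    """A block of inference data (hypothetical type, for illustration only)."""
    name: str
    size_gb: float
    read_only: bool


def place(regions, hbm_capacity_gb, hbf_capacity_gb):
    """Assign read-only regions to HBF and all other regions to HBM.

    Raises MemoryError if a tier would exceed its capacity.
    """
    capacity = {"HBM": hbm_capacity_gb, "HBF": hbf_capacity_gb}
    used = {"HBM": 0.0, "HBF": 0.0}
    placement = {}
    for region in regions:
        # The rule from the abstract: read-only data goes to HBF.
        tier = "HBF" if region.read_only else "HBM"
        if used[tier] + region.size_gb > capacity[tier]:
            raise MemoryError(f"{region.name} does not fit in {tier}")
        used[tier] += region.size_gb
        placement[region.name] = tier
    return placement


# Hypothetical sizes and capacities, not figures from the paper.
regions = [
    Region("model_weights", 140.0, read_only=True),
    Region("shared_precomputed_kv_cache", 800.0, read_only=True),
    Region("per_request_kv_cache", 60.0, read_only=False),
    Region("activations", 10.0, read_only=False),
]
placement = place(regions, hbm_capacity_gb=192.0, hbf_capacity_gb=4096.0)
for name, tier in placement.items():
    print(f"{name}: {tier}")
```

Data written during decoding, such as the per-request key-value cache, stays in HBM, which fits the abstract's note that HBF has lower write endurance and longer access latency.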