Invert, always invert — Charlie Munger

Everyone knows memory stocks are cyclical and have burned investors before. But asking whether memory is cyclical is the wrong question.

The right question is whether we're in a structural shift — and the data says yes.

A state-of-the-art GPU in 2022 (the Nvidia H100) carried 80GB of high-bandwidth memory. Three years later, the 2025 B300 had 288GB. The 2027 Rubin Ultra is expected to reach 1,024GB.

That works out to a nearly 13× increase in per-chip memory in five years (1,024 / 80 = 12.8).

Based on the current deployment trajectory, we project that 25× more GPU units will be deployed by 2027 than in 2022.

So in just five years, the HBM requirement is on track to grow more than 300-fold (roughly 13 × 25), and only three companies in the world make this memory (SK Hynix, Samsung, and Micron).
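For readers who want to check the arithmetic, here is a minimal sketch that multiplies the two growth factors together. The capacities and the 25× unit projection are the figures quoted above; the variable names and the annualized-growth helper are illustrative additions, not part of any published model.

```python
# Per-chip HBM capacity in GB, as quoted in the text.
# The Rubin Ultra figure is an expectation, not a shipped spec.
hbm_gb = {
    "H100 (2022)": 80,
    "B300 (2025)": 288,
    "Rubin Ultra (2027, expected)": 1024,
}

# Growth in memory per chip, 2022 -> 2027.
per_chip_growth = hbm_gb["Rubin Ultra (2027, expected)"] / hbm_gb["H100 (2022)"]

# Growth in GPU units deployed, 2022 -> 2027 (the article's projection).
unit_growth = 25

# Total HBM requirement scales with both factors.
total_growth = per_chip_growth * unit_growth


def annualized(multiple: float, years: int) -> float:
    """Constant yearly growth factor that compounds to `multiple` over `years`."""
    return multiple ** (1 / years)


print(f"Per-chip growth: {per_chip_growth:.1f}x")
print(f"Total HBM growth: {total_growth:.0f}x")
print(f"Implied yearly growth factor: {annualized(total_growth, 5):.2f}x")
```

Using the unrounded 12.8× per-chip figure gives 320× rather than 325×, which still clears the "more than 300 times" bar. The result is only as good as the 25× unit projection, which is the input most open to debate.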

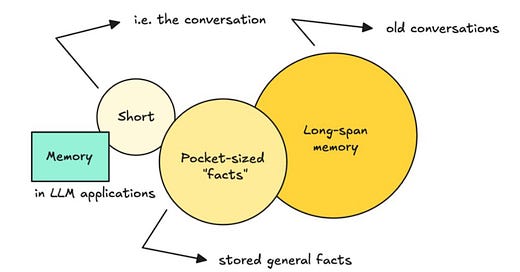

Agentic AI uses massive amounts of memory to keep everything in context, and we are still at the very beginning of agent deployment.

People forget that Nvidia was once dismissed as a cyclical chipmaker prone to regular booms and busts. AI changed that narrative permanently.

Memory may be next.