Two patterns I keep seeing in AI governance rollouts:

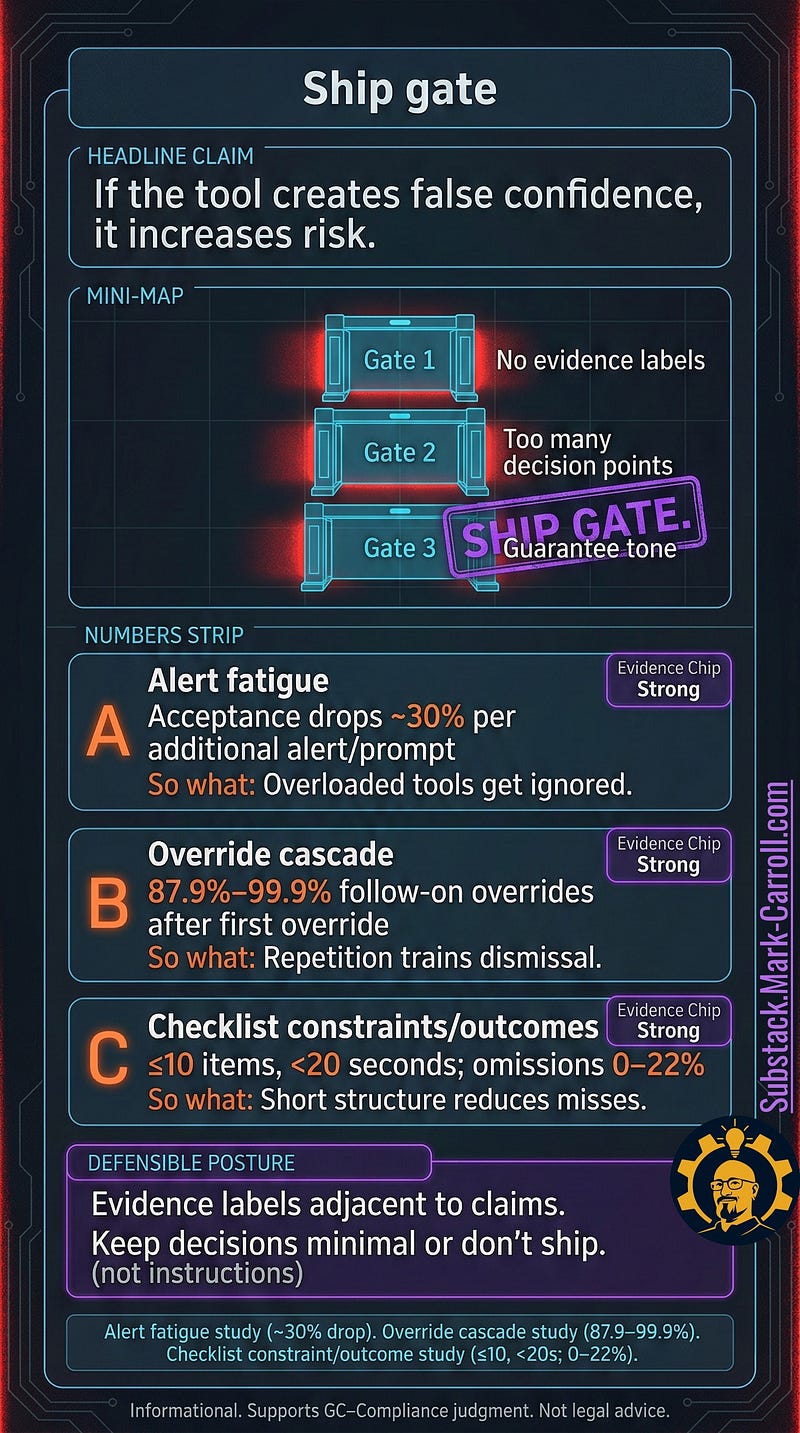

Tools ship without a ship gate.

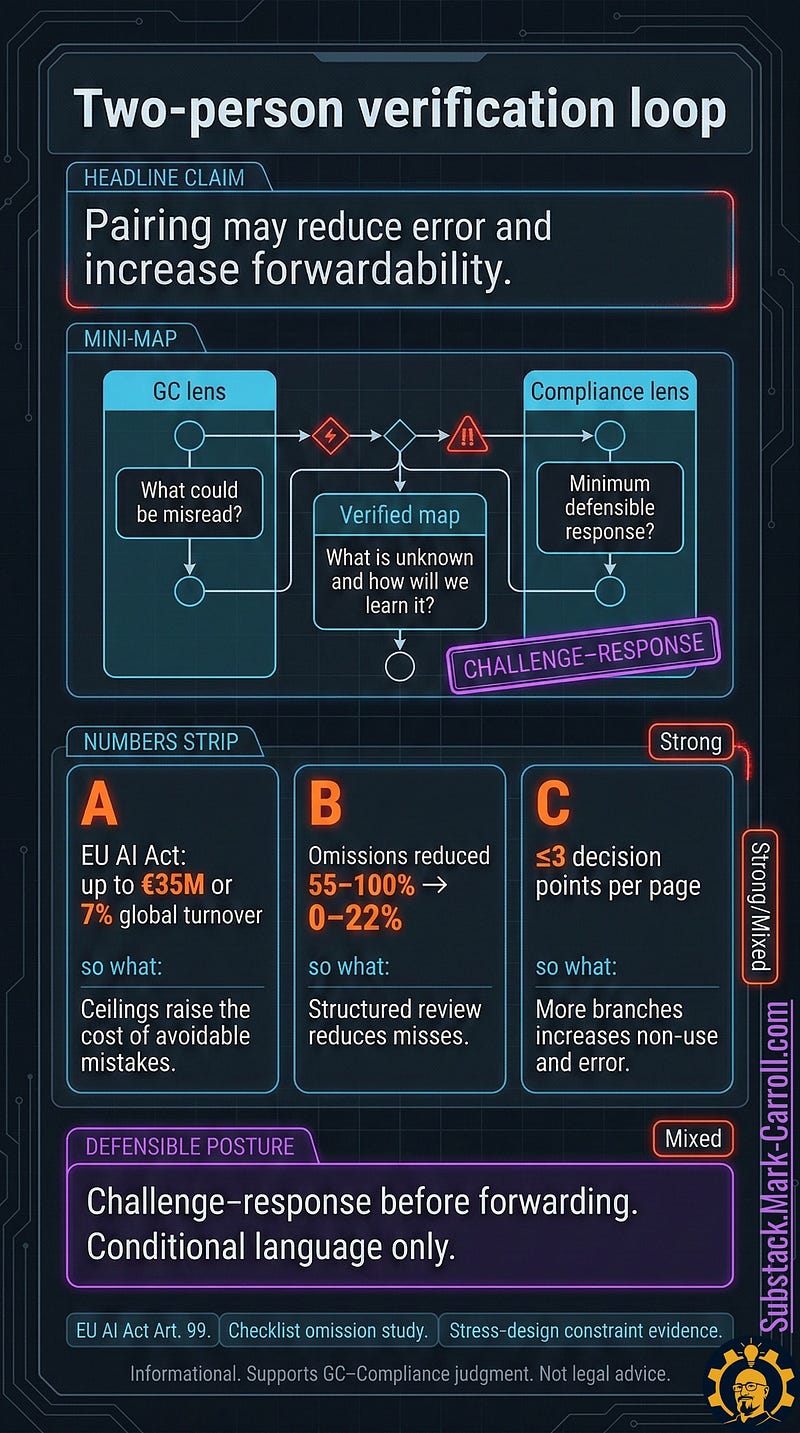

Reports get forwarded without verification.

Both create the same outcome: false confidence that increases risk.

These two panels from The Whistleblower’s Dilemma are my “last two steps before you press send”:

Ship gate: if a tool has no evidence labels, too many decision points, or a tone that implies guarantees, it is a liability.

Two-person verification: GC + Compliance in a challenge-response loop before anything gets forwarded.

Question to steal for your next review meeting:

“What would be misread, and what is unknown that we need to learn?”

Restack if your organization is still shipping governance artifacts that look safe but train people to ignore them.

Mar 7 at 11:00 PM
