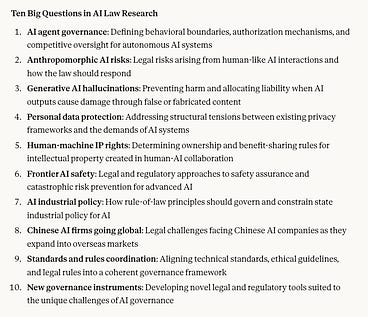

A group of Chinese legal scholars just put out the “Ten Big Questions in AI Law Research.” Below is a Claude translation (and slight condensation) of the questions. Some quick thoughts:

Agent governance, specifically how responsibility is assigned when agents take actions that cause harm, is clearly top of mind across China’s AI policy community. I think we’ll see a preliminary attempt at regulating it within the next 6 months.

Interesting that the gen AI question focuses specifically on hallucinations and the damage they cause. That is substantially different from the more explicit focus on “false information” used for political speech violations. Maybe the two are linked, but like the agent question, the hallucination question is more about liability for damages.

The frontier risk part specifically focuses on “extreme risk” (极端风险), a phrasing that’s been showing up more in policy discussion there.

The focus on aligning standards, ethical guidelines, and regulations reinforces my belief that standards will play a much more important role in Chinese AI governance going forward. I wrote about this in a recent Substack post on the AI companion regulation: substack.com/@mattsheeh…

This group of scholars (all from universities of political science and law, 政法大学, around the country) is competing quite directly with the other group of AI policy people, who are preparing to put out their Model AI Law 4.0.

People’s Daily ran a write-up covering the launch of this list. It says the report is being (or has been) published, but I can’t find it yet.