Ask a colleague why they refuse to use AI. They say it uses up all the water. You point them to Andy Masley's blog. Then it's the hallucinations. You mention that accuracy has improved dramatically. Then, finally: the process is the point. The struggle. The craft. The deeply human act of sitting with uncertainty.

They're not reasoning. They're rationalizing their gut intuitions.

My amazing student Victoria Oldemburdo de Mello and I, together with Eloise Cote, Reem Ayad, Yoel Inbar, and Jason Plaks, have a new preprint that explores this more thoroughly: "The Moralization of Artificial Intelligence."

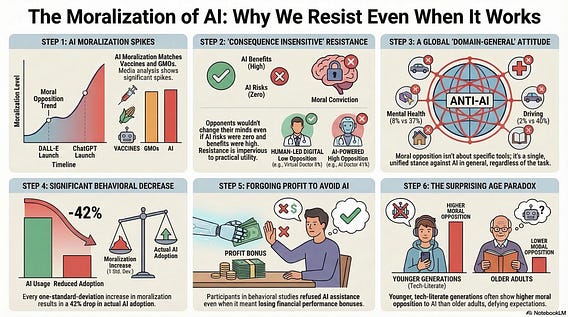

We started by asking how moralized AI has become in public discourse. Analyzing 69,890 news headlines from 2018 to 2024, we found that AI was moralized at levels rivaling GMOs and vaccines, technologies whose moral opposition has been studied for decades. In fact, it ranked above both. The sharpest spike came within weeks of ChatGPT's launch in late 2022.

When we surveyed representative samples of Americans, a majority of AI opponents said their views wouldn't change even if AI proved safe and beneficial. That's consequence insensitivity: a hallmark of moral conviction rather than practical calculation. Across art, chatbots, legal tools, and romantic companions, AI moralization loaded onto a single latent factor. In other words, it is one global moral stance, dressed up in whatever practical language is available.

The behavioral data make this concrete: a one-standard-deviation increase in moralization scores predicted a 42% drop in actual AI usage, even when using AI would have benefited the person directly. And the conviction preceded the behavior by up to 573 days.

The next time someone gives you three different reasons to oppose AI, each one dissolving under mild scrutiny, you're probably not watching someone think. You're watching someone feel.

Preprint available here: osf.io/preprints/psyarx…