Sharpe 1.06. 25 years of US equities. Trained on 300 stocks; deployed on 1,000 without retraining.

A new paper introduces an end-to-end neural network that replaces classical covariance estimators (QIS, Ledoit-Wolf, DCC) with a BiLSTM-based model trained directly on realized portfolio variance.

The setup:

Rotation-invariant architecture: mirrors the analytical form of the global minimum-variance (GMV) solution, so it is not a black box.

Three interpretable modules: learnable lag weighting, BiLSTM eigenvalue cleaner, and inverse-volatility MLP.

Dimension-agnostic: the same trained model scales from a few hundred stocks to 1,000+.

Fully realistic backtest with IBKR-style commissions, SEC fees, slippage, and financing costs.
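
For readers who want the "analytical form" spelled out, here is a minimal sketch of the textbook unconstrained GMV weights, w = C⁻¹1 / (1ᵀC⁻¹1), combined with a rotation-invariant cleaning step that keeps the sample eigenvectors and changes only the eigenvalues. The function names and the `shrink_to_mean` rule are hypothetical stand-ins chosen for illustration. They are not the paper's BiLSTM cleaner, and the long-only constraint used in the backtest is not handled here.

```python
import numpy as np

def gmv_weights(cov):
    """Unconstrained GMV weights: w = C^{-1} 1 / (1' C^{-1} 1)."""
    ones = np.ones(cov.shape[0])
    x = np.linalg.solve(cov, ones)   # solves C x = 1 without forming C^{-1}
    return x / x.sum()

def rotation_invariant_clean(cov, clean_eigenvalues):
    """Keep the sample eigenvectors; replace only the eigenvalues."""
    eigvals, eigvecs = np.linalg.eigh(cov)     # cov is symmetric
    cleaned = clean_eigenvalues(eigvals)
    return (eigvecs * cleaned) @ eigvecs.T     # V diag(cleaned) V'

def shrink_to_mean(eigvals, alpha=0.5):
    # Hypothetical placeholder for a learned eigenvalue cleaner:
    # linear shrinkage of every eigenvalue toward their average.
    return (1 - alpha) * eigvals + alpha * eigvals.mean()

# Synthetic data, for illustration only: 250 days, 20 assets.
rng = np.random.default_rng(0)
returns = rng.normal(size=(250, 20))
sample_cov = np.cov(returns, rowvar=False)

clean_cov = rotation_invariant_clean(sample_cov, shrink_to_mean)
weights = gmv_weights(clean_cov)
print(weights.sum())
```

Because the cleaner only sees eigenvalues, the same function applies to a covariance matrix of any size, which is one way to read the "dimension-agnostic" claim.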

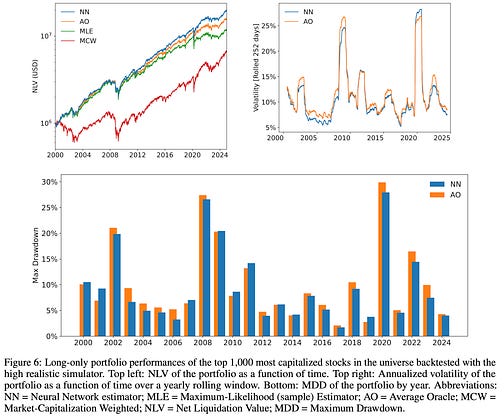

The results (long-only, top 1,000 US stocks, 2000–2024):

Sharpe 1.06 vs 0.94 (Average Oracle), 0.93 (DCC), 0.85 (QIS).

Lowest realized variance, lowest VaR, lowest CVaR.

The performance advantage is stronger post-2012, so it is not just a pre-AI-era artifact.
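
As a reminder of what these headline metrics measure, the sketch below computes them from a series of daily portfolio returns. It assumes a zero risk-free rate, 252 trading days per year, a 95% confidence level, and the plain historical (empirical quantile) method. The paper's exact conventions may differ, and `risk_summary` is a hypothetical helper name.

```python
import numpy as np

def risk_summary(daily_returns, level=0.95, periods_per_year=252):
    """Annualized Sharpe, variance, historical VaR and CVaR of daily returns."""
    r = np.asarray(daily_returns, dtype=float)
    # Sharpe ratio with an assumed zero risk-free rate.
    sharpe = np.sqrt(periods_per_year) * r.mean() / r.std(ddof=1)
    # VaR: loss threshold exceeded on roughly (1 - level) of days.
    var = -np.quantile(r, 1 - level)
    # CVaR (expected shortfall): average loss on days at or beyond VaR.
    cvar = -r[r <= -var].mean()
    return {
        "sharpe": sharpe,
        "variance": r.var(ddof=1),
        "var": var,
        "cvar": cvar,
    }

# Synthetic returns, for illustration only.
rng = np.random.default_rng(1)
synthetic = rng.normal(loc=0.0004, scale=0.01, size=2520)
summary = risk_summary(synthetic)
print(summary)
```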

Bonus finding: the learned lag weights follow a power law, not the classical exponential decay used by RiskMetrics/EWMA. Rethinking the default decay kernel alone might be worth the read.
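
To make the kernel difference concrete, here is a minimal sketch comparing the two weighting schemes. The decay of 0.94 is the familiar RiskMetrics daily value. The power-law exponent of 1.0 is an arbitrary illustrative choice, not a number from the paper, and all function names are hypothetical.

```python
import numpy as np

def exponential_weights(n_lags, decay=0.94):
    """EWMA-style weights: w_k proportional to decay**k (k = 0 is most recent)."""
    k = np.arange(n_lags)
    w = decay ** k
    return w / w.sum()

def power_law_weights(n_lags, exponent=1.0):
    """Power-law weights: w_k proportional to (k + 1)**(-exponent)."""
    k = np.arange(n_lags)
    w = (k + 1.0) ** (-exponent)
    return w / w.sum()

def weighted_covariance(returns, weights):
    """Weighted covariance of (T, N) returns; row 0 is the oldest day."""
    w = weights[::-1]                  # align lag 0 with the most recent row
    mean = w @ returns
    centered = returns - mean
    return (centered * w[:, None]).T @ centered

n_lags = 250
w_exp = exponential_weights(n_lags)
w_pow = power_law_weights(n_lags)

# Weight on a 200-day-old observation relative to the most recent one.
print(w_exp[200] / w_exp[0], w_pow[200] / w_pow[0])

# Synthetic data, for illustration only: 250 days, 5 assets.
rng = np.random.default_rng(2)
returns = rng.normal(scale=0.01, size=(n_lags, 5))
cov_pow = weighted_covariance(returns, w_pow)
```

The power-law kernel keeps far more relative weight on old observations than exponential decay does, so the two kernels can produce noticeably different covariance estimates from the same return history.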