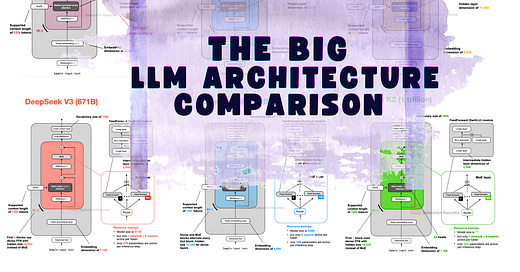

From GPT to MoE: I reviewed & compared the main LLMs of 2025 in terms of their architectural design, from DeepSeek-V3 to Kimi K2 & Qwen3.

Multi-head Latent Attention, sliding window attention, new Post- & Pre-Norm placements, NoPE, shared-expert MoEs, and more...

Jul 23 at 2:30 PM
