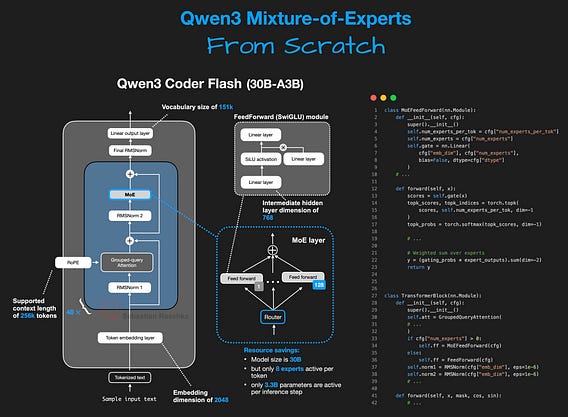

I (finally) implemented Qwen3 Mixture-of-Experts (MoE) from scratch in pure PyTorch.

The Qwen3 model suite recently added new open-weight models (Instruct, Thinking, and Coder), and I couldn't resist...

As I mentioned a few weeks ago, I've been using the older (and smaller) Qwen3 dense models as a starting point for experimentation and research because they perform really well.

After last week's release, I finally sat down and coded the MoE variants as well.

The purpose of this re-implementation is to have human-readable code that makes the internals easier to understand and easier to adapt or modify for downstream tasks.

If you are curious, you can check out the notebook here: github.com/rasbt/LLMs-f…

TL;DR:

- Qwen3 Coder Flash (30B-A3B) architecture

- MoE setup with 128 experts, 8 active per token

- Pure PyTorch in a standalone Jupyter notebook

- Roughly 60 GB memory requirement (runs on a single 80 GB A100 or newer)
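
To make the numbers above a bit more concrete, here is a minimal sketch (not the notebook's code) of what "128 experts, 8 active per token" and "roughly 60 GB" can mean. It is written in dependency-free Python rather than PyTorch so it runs anywhere. The helper names `route_token` and `weight_memory_gb` are hypothetical, the gating shown (softmax over the top-k router scores) is one common variant and may differ from the actual model, and the memory estimate assumes about 30 billion parameters stored in bfloat16 (2 bytes each), ignoring activations and the KV cache.

```python
import math
import random

NUM_EXPERTS = 128  # total experts per MoE layer (from the summary above)
NUM_ACTIVE = 8     # experts actually used for each token


def route_token(router_logits, k=NUM_ACTIVE):
    """Pick the k highest-scoring experts for one token.

    Returns (expert_indices, gate_weights); the gate weights are a softmax
    over the selected scores only, so they sum to 1.
    Assumption: this is one common top-k gating variant.
    """
    # Indices of the k largest router scores, highest first.
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:k]
    # Numerically stable softmax restricted to the selected experts.
    max_score = max(router_logits[i] for i in top)
    exps = [math.exp(router_logits[i] - max_score) for i in top]
    total = sum(exps)
    return top, [e / total for e in exps]


def weight_memory_gb(num_params, bytes_per_param=2):
    """Rough weight-only memory estimate in GB (2 bytes = bfloat16, assumed)."""
    return num_params * bytes_per_param / 1e9


random.seed(0)
# Stand-in router scores for a single token (random, for illustration only).
logits = [random.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]
experts, gates = route_token(logits)

print("active experts:", experts)
print("gate weights sum:", round(sum(gates), 6))
print("approx. weight memory (GB):", weight_memory_gb(30e9))
```

The token's output would then be the gate-weighted sum of those 8 experts' outputs, which is why only a small fraction of the weights is used per token even though all of them have to sit in memory.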

Happy tinkering!