
A small follow-up to my DGX Spark post. Courtesy of NVIDIA, I got to try the DGX on my workflows (coding LLMs from scratch in pure PyTorch) and wanted to share my first impressions after using it for a week.

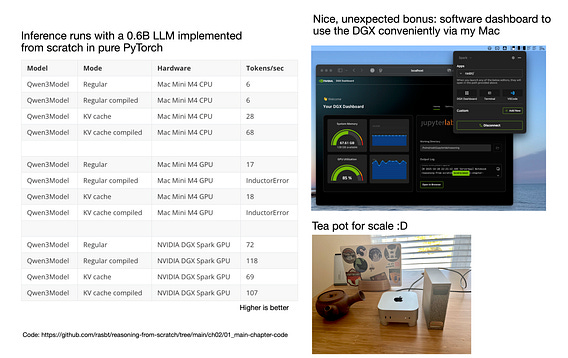

Before getting to the performance, there was a neat bonus I didn't expect: it comes with NVIDIA Sync software that lets you conveniently connect to it (I fully expected to have to dig out my SSH tunneling notes from back when I set up Jupyter Lab, etc., on a remote machine). The setup is a breeze and a delight.

Now, how does it fare against my Mac Mini? I included the tokens/sec inference speed for a small 0.6B model I am currently working on. The DGX is much faster than the Mac Mini M4 CPU and still noticeably faster than the M4 GPU (via PyTorch MPS). More importantly, though, as I mentioned before, it is a CUDA device and thus much better supported in PyTorch. This, in turn, results in more stable training and higher benchmark accuracy. (And no compile errors, yay!)
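For anyone who wants to run a similar comparison on their own hardware, here is a minimal sketch of how a tokens/sec number could be measured. It is not the exact script behind the numbers above; `pick_device`, `tokens_per_second`, `generate_fn`, and `sync_fn` are hypothetical names for illustration. The one detail that matters is that GPU work is queued asynchronously, so you likely want to synchronize (e.g., `torch.cuda.synchronize` or `torch.mps.synchronize`) before stopping the timer.

```python
import time


def pick_device():
    """Return 'cuda', 'mps', or 'cpu' depending on what PyTorch reports."""
    try:
        import torch
    except ImportError:
        # Assumption for this sketch: fall back to CPU if PyTorch is missing
        return "cpu"
    if torch.cuda.is_available():
        return "cuda"  # e.g., the DGX Spark
    if torch.backends.mps.is_available():
        return "mps"   # e.g., the Mac Mini GPU
    return "cpu"


def tokens_per_second(generate_fn, num_tokens, sync_fn=None):
    """Time one call of generate_fn(num_tokens) and return tokens/sec.

    generate_fn: hypothetical callable that generates `num_tokens` tokens.
    sync_fn: optional callable that waits for queued GPU work to finish.
    """
    if sync_fn is not None:
        sync_fn()  # make sure earlier GPU work does not leak into the timing
    start = time.perf_counter()
    generate_fn(num_tokens)
    if sync_fn is not None:
        sync_fn()  # wait until the generation has actually completed
    elapsed = time.perf_counter() - start
    return num_tokens / elapsed
```

With a real model, `generate_fn` would wrap your own generation loop, and `sync_fn` would be `torch.cuda.synchronize` on the DGX or `torch.mps.synchronize` on the Mac. It is probably worth doing a warm-up call first, since the first run often includes one-time setup costs.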

Both devices get hot under my workloads (e.g., a constant-load run like evaluating a model in batched mode on MATH-500, or fine-tuning a model), but I feel like the DGX Spark is (probably) made with that in mind. Plus, thanks to its larger 128 GB of RAM, I can run larger batch sizes. Then there's also the aspect that when I have the DGX (vs the Mac Mini) running computations, it keeps my Mini free for other tasks :).

Overall, a neat little package and CUDA prototyping machine that I can keep on my desk. It's super quiet, similar to the Mac Mini. Of course, it's not as capable as a 6x more expensive H100 for training, but hey, you don't need a server room for it and can keep it in your office without worrying about heat or noise (this was not possible with the Lambda workstation I had a few years ago).

tl;dr:

So, I've been seeing lots of others using it for LLM inference (Ollama, vLLM, etc.), but my first-week impression is that this is also a neat box for local dev and prototyping (e.g., coding and running PyTorch models) thanks to the CUDA support, which comes in handy before starting larger, more expensive training runs.

PS: You can also find another benchmark versus the H100 in the comments below.

I will run more experiments over time. In the meantime, let me know if you have any questions.

Saw that DGX Spark vs Mac Mini M4 Pro benchmark plot making the rounds (via LMSYS, lmsys.org/blog/2025-10-…).

Thought I’d share a few notes as someone who actually uses a Mac Mini M4 Pro and has been tempted by the DGX Spark.

First of all, I really like the Mac Mini. It’s probably the best computer I’ve ever owned. For local inference with…