
Before getting into the recent stack of interesting research that dropped in January, I wanted to briefly highlight one more paper from November 2025:

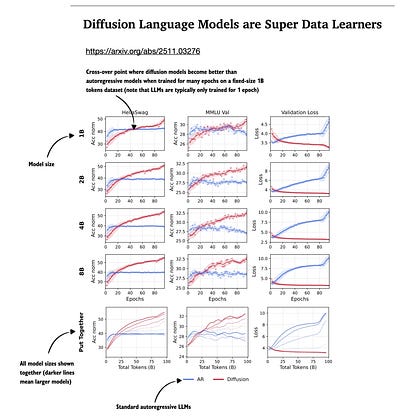

"Diffusion Language Models are Super Data Learners" (arxiv.org/abs/2511.03276)

Something I've been asked a lot in recent weeks is whether we will see alternatives to the transformer architecture in 2026. For now, the transformer is here to stay when it comes to state-of-the-art modeling performance.

But yes, towards the end of the year, we saw a stronger push to make it more efficient. This is, of course, not a new idea, but recent releases from flagship labs (Qwen3-Next, Kimi Linear, and DeepSeek V3.2 with sparse attention, which I covered in my Big LLM Architecture Comparison article) show that efficiency is now getting a lot more attention.

Anyway, what about text diffusion models? I wrote a bit more about them in my Beyond Standard LLMs article a while back. In a nutshell, I think we will see more of them in 2026, and Google will probably launch Gemini Diffusion as an alternative to its cheaper Flash models. (The advantage, but also the downside, of text diffusion is that it generates tokens in parallel, which means these models can't natively incorporate tool calls as part of their response chain.)

Text diffusion models are known to be more efficient (although recent research also suggests that if you crank up the number of denoising steps to match the performance of autoregressive models, i.e., our standard LLMs, you end up with the same compute budget).
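To make the parallel-generation point and the step-count trade-off a bit more concrete, here is a toy sketch in Python. It assumes a masked (absorbing-state) text diffusion model with a confidence-based unmasking schedule, which is one common setup but not necessarily what Gemini Diffusion or the paper above use. `autoregressive_decode`, `masked_diffusion_decode`, and the toy predictors are hypothetical stand-ins, not a real model or library API.

```python
from typing import Callable, List, Optional, Sequence, Tuple

Token = str
MASK: Optional[Token] = None  # marks a position that has not been generated yet


def autoregressive_decode(
    predict_next: Callable[[List[Token]], Token], prompt: Sequence[Token], num_new: int
) -> Tuple[List[Token], int]:
    """Standard LLM decoding: each forward pass produces exactly one new token."""
    tokens = list(prompt)
    passes = 0
    for _ in range(num_new):
        tokens.append(predict_next(tokens))  # the model only sees the prefix so far
        passes += 1
    return tokens, passes


def masked_diffusion_decode(
    predict_all: Callable[[List[Optional[Token]]], List[Tuple[Token, float]]],
    length: int,
    num_steps: int,
    verbose: bool = False,
) -> Tuple[List[Optional[Token]], int]:
    """Toy masked-diffusion decoding (hypothetical): start fully masked, unmask several positions per step."""
    seq: List[Optional[Token]] = [MASK] * length
    passes = 0
    for step in range(num_steps):
        masked = [i for i, t in enumerate(seq) if t is MASK]
        if not masked:
            break
        preds = predict_all(seq)  # one pass returns (token, confidence) for every position in parallel
        passes += 1
        # Spread the remaining masked positions evenly over the remaining steps (ceiling division).
        k = -(-len(masked) // (num_steps - step))
        # Commit the k most confident masked positions; this order need not be left to right.
        for i in sorted(masked, key=lambda j: preds[j][1], reverse=True)[:k]:
            seq[i] = preds[i][0]
        if verbose:
            print(f"step {step}: " + "".join("_" if t is MASK else t for t in seq))
    return seq, passes


if __name__ == "__main__":
    TARGET = list("hello world")  # hypothetical "correct" output, one character per token

    def toy_predict_next(tokens: List[Token]) -> Token:
        return TARGET[len(tokens)]  # pretend the model always knows the next token

    def toy_predict_all(seq: List[Optional[Token]]) -> List[Tuple[Token, float]]:
        # Pretend model: right token everywhere, with an arbitrary (non-left-to-right) confidence.
        return [(TARGET[i], float((i * 7) % len(seq))) for i in range(len(seq))]

    ar_out, ar_passes = autoregressive_decode(toy_predict_next, [], len(TARGET))
    print("AR:", "".join(ar_out), "| forward passes:", ar_passes)
    for steps in (4, len(TARGET)):
        out, passes = masked_diffusion_decode(toy_predict_all, len(TARGET), steps, verbose=(steps == 4))
        print(f"diffusion ({steps} steps):", "".join(out), "| forward passes:", passes)
```

With 4 denoising steps, the toy diffusion decoder finishes the 11-token output in 4 forward passes instead of 11, and its first step fills positions 3, 6, and 9 rather than a left-to-right prefix, which is one way to see why a tool call can't simply be emitted and executed mid-response. Raising `num_steps` to the sequence length brings the pass count back to 11, i.e., one token per step. Note that the sketch counts sequential forward passes (a latency proxy), not FLOPs: each diffusion pass attends bidirectionally over the whole sequence, so its cost is not the same as a KV-cached autoregressive step.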

Where am I going with this? There is now one paper showing that text diffusion models can compare very favorably to standard autoregressive (AR) LLMs. No one currently trains LLMs for multiple epochs due to the costs, but if we were to do so, diffusion models seem to keep improving (based on the validation loss, likely due to reduced overfitting).

Interesting results!