
DeepSeek just dropped a multimodal paper “Thinking with Visual Primitives” right before the Labor Day holiday (again!!)

The problem they're tackling is what they call the “reference gap”: most multimodal models can “see” images but struggle to “think clearly” about them.

Their key idea is to add point coordinates and bounding boxes to the reasoning process. The model pins every visual object it mentions to physical image coordinates while it’s reasoning, like using a finger to track objects while counting.
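To make the finger-counting analogy concrete, here is a toy sketch in Python. It is not the paper's output format: the `GroundedStep` class, the `count_distinct_objects` helper, and the coordinates are all made up for illustration. The only point is that once every mention is pinned to a location, a repeated mention of the same object can't be counted twice.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class GroundedStep:
    """One reasoning step pinned to an image location (hypothetical structure)."""
    text: str                # what the model "says" at this step
    point: Tuple[int, int]   # assumed (x, y) pixel coordinate the step refers to


def count_distinct_objects(steps: List[GroundedStep]) -> int:
    """Count objects by distinct pinned points, so repeats are not double-counted."""
    return len({step.point for step in steps})


# Made-up trace: the first apple is mentioned twice but pinned to the same point.
trace = [
    GroundedStep("first apple", (120, 340)),
    GroundedStep("second apple", (410, 355)),
    GroundedStep("third apple", (600, 290)),
    GroundedStep("first apple again", (120, 340)),
]

apple_count = count_distinct_objects(trace)
print(apple_count)
```

Without the coordinates, the fourth step is just more text and easy to miscount; with them, it collapses onto a point that was already visited.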

The language backbone is DeepSeek-V4-Flash (284B total parameters, 13B active), paired with DeepSeek’s own ViT.

For a 756×756 image, the ViT eventually boils everything down to just 81 visual KV entries, a 7,056× compression relative to the pixel count.

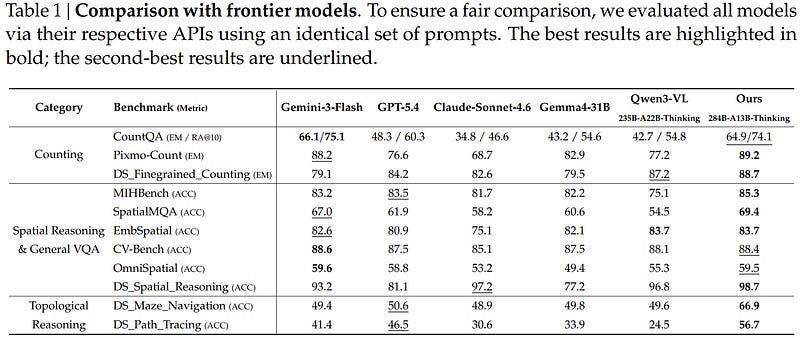
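A quick sanity check on the 7,056× figure, assuming the ratio is simply input pixels divided by final KV entries (variable names are mine, not from the paper):

```python
side = 756          # image width and height in pixels
kv_entries = 81     # final visual KV entries reported for this image size

pixels = side * side                 # total input pixels
compression = pixels / kv_entries    # pixels represented by each KV entry

print(f"{pixels:,} pixels / {kv_entries} entries = {compression:,.0f}x")
```

Put differently, 81 is a 9×9 grid, so each KV entry stands in for an 84×84 patch of pixels, and 84² = 7,056.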

On benchmarks, it outperforms GPT-5.4 and Claude Sonnet 4.6 in counting, spatial reasoning, and topological reasoning.

Here is the paper link (404 now): github.com/deepseek-ai/…